Adversarial examples

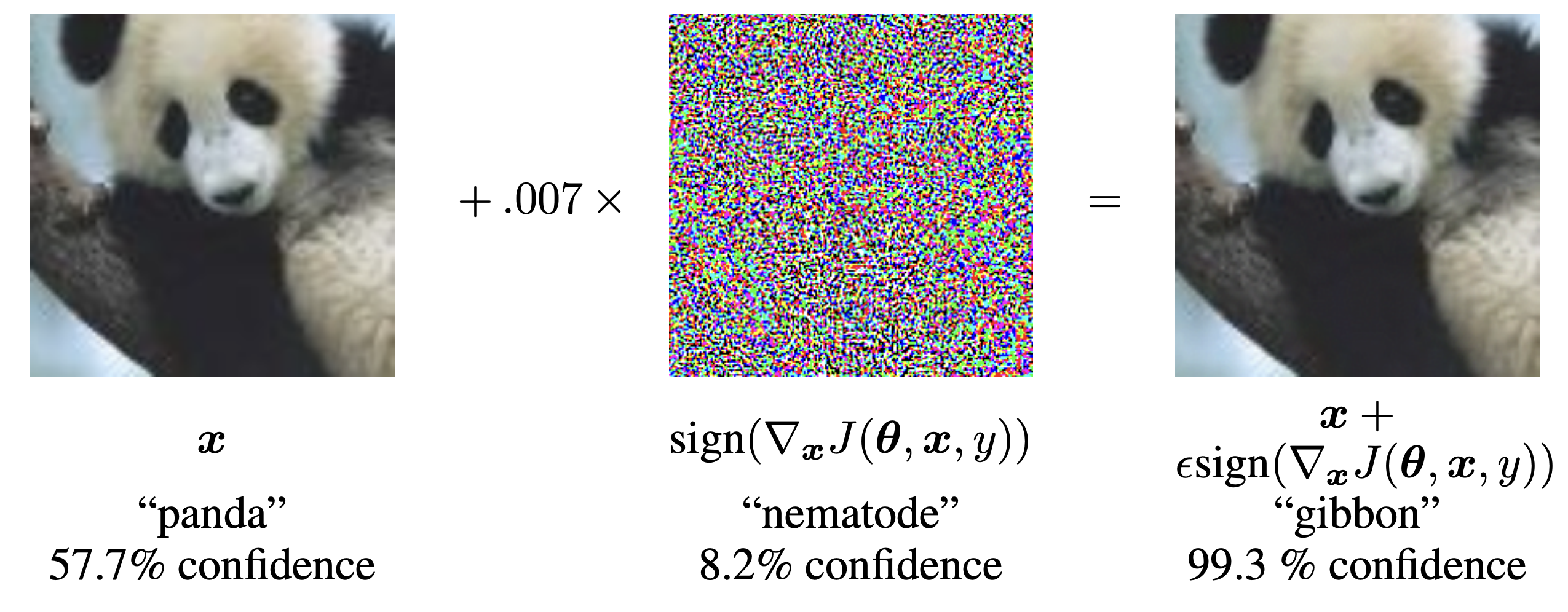

Adversarial examples initially seemed an oddity. Szegedy et al. [1] demonstrated that a minuscule perturbation, meaningless to human eyes, could flip a neural net's prediction with high confidence. My first instinct on reading it was to blame weird nonlinearities or overfitting. It turned out to be neither. Goodfellow et al. [2] gave a much simpler explanation. A linear model with weights $w \in \mathbb{R}^d$ has its logit shifted by $w^\top \delta$ under a perturbation $\delta$. The worst case over the $\ell_\infty$ ball $\|\delta\|_\infty \le \epsilon$ is attained at $\delta = \epsilon\,\mathrm{sign}(w)$ and equals $\epsilon \|w\|_1$, which grows like $\epsilon\,d$ for dense weights: a tiny per-pixel perturbation accumulates across a high-dimensional input.
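To make the $\epsilon \|w\|_1$ scaling concrete, here is a minimal sketch in plain Python. The helper name `worst_case_logit_shift` and the choice of $\pm 1$ weights are illustrative assumptions, not anything taken from [2]; the point is only that the sign-aligned perturbation attains the bound and that the shift grows linearly with the dimension.

```python
import random

def worst_case_logit_shift(w, eps):
    """Maximize w . delta subject to ||delta||_inf <= eps.

    The maximizer aligns every coordinate with the sign of the weight,
    so the resulting shift equals eps * ||w||_1.
    """
    delta = [eps * (1 if wi > 0 else -1 if wi < 0 else 0) for wi in w]
    shift = sum(wi * di for wi, di in zip(w, delta))
    return delta, shift

random.seed(0)
eps = 0.01  # per-coordinate budget, held fixed while the dimension grows
for d in (10, 1000, 100000):
    # Hypothetical dense weights with |w_i| = 1, so ||w||_1 = d.
    w = [random.choice((-1.0, 1.0)) for _ in range(d)]
    _, shift = worst_case_logit_shift(w, eps)
    l1_norm = sum(abs(wi) for wi in w)
    print(f"d={d:>6}  shift={shift:.4f}  eps*||w||_1={eps * l1_norm:.4f}")
```

The per-coordinate budget never changes, yet the achievable logit shift scales with $d$.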

In a thousand-dimensional input space, even an imperceptible $\delta$ can produce a logit shift that flips the prediction. Adversarial perturbations are therefore not a symptom of nonlinear extrema; they are a generic property of high-dimensional linear decision rules. FGSM is a one-step linearized attack on the inner maximum $\max_{\|\delta\|_\infty \le \epsilon} L(\theta, x+\delta, y)$: it takes $\delta = \epsilon\,\mathrm{sign}(\nabla_x L(\theta, x, y))$. The fact that one step works at all is the diagnostic: the model is sensitive to a direction the data distribution does not mark as human-meaningful. Carlini and Wagner later showed that more carefully tuned attack objectives produce much smaller-norm perturbations than FGSM, breaking many defenses whose only evaluation had been against single-step attacks.
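As a rough illustration of the one-step attack, the sketch below runs FGSM against a hand-built logistic-regression model in plain Python. The model, the weights, the input, and the value of `eps` are all made-up assumptions chosen so that the numbers are easy to follow; a real attack would take the gradient from an autodiff framework instead of the closed form used here.

```python
import math

def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def loss_grad_wrt_input(w, b, x, y):
    """Gradient of the logistic loss log(1 + exp(-y * z)) with respect to x,
    where z = w . x + b and y is in {-1, +1}."""
    z = logit(w, b, x)
    dloss_dz = -y / (1.0 + math.exp(y * z))
    return [dloss_dz * wi for wi in w]

def fgsm(w, b, x, y, eps):
    """One signed-gradient step of size eps in every coordinate."""
    grad = loss_grad_wrt_input(w, b, x, y)
    return [xi + eps * ((gi > 0) - (gi < 0)) for xi, gi in zip(x, grad)]

# Hypothetical 1000-dimensional example: small dense weights, and an input
# that is classified as +1 with a clean logit of about 1.0.
d = 1000
signs = [1.0 if i % 2 == 0 else -1.0 for i in range(d)]
w = [0.05 * s for s in signs]
x = [0.5 + 0.02 * s for s in signs]
y = 1

x_adv = fgsm(w, 0.0, x, y, eps=0.03)
print("clean logit:      ", logit(w, 0.0, x))
print("adversarial logit:", logit(w, 0.0, x_adv))
print("max |delta_i|:    ", max(abs(a - c) for a, c in zip(x_adv, x)))
```

Each coordinate moves by at most 0.03, but the logit moves by $\epsilon \|w\|_1 = 0.03 \times 50 = 1.5$, which is enough to change the sign of the prediction in this toy setup.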

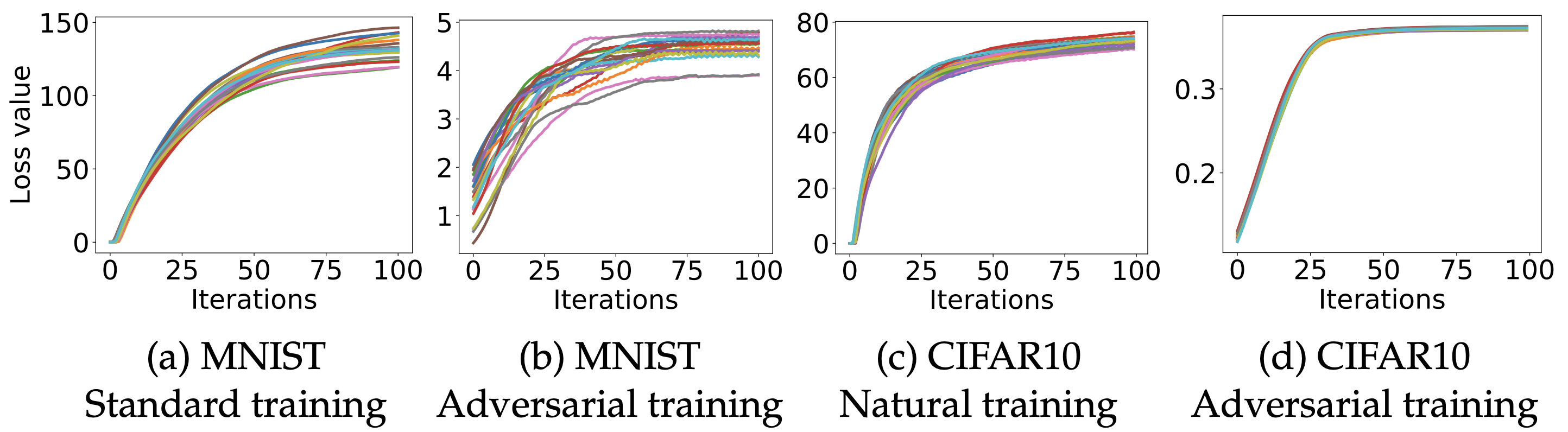

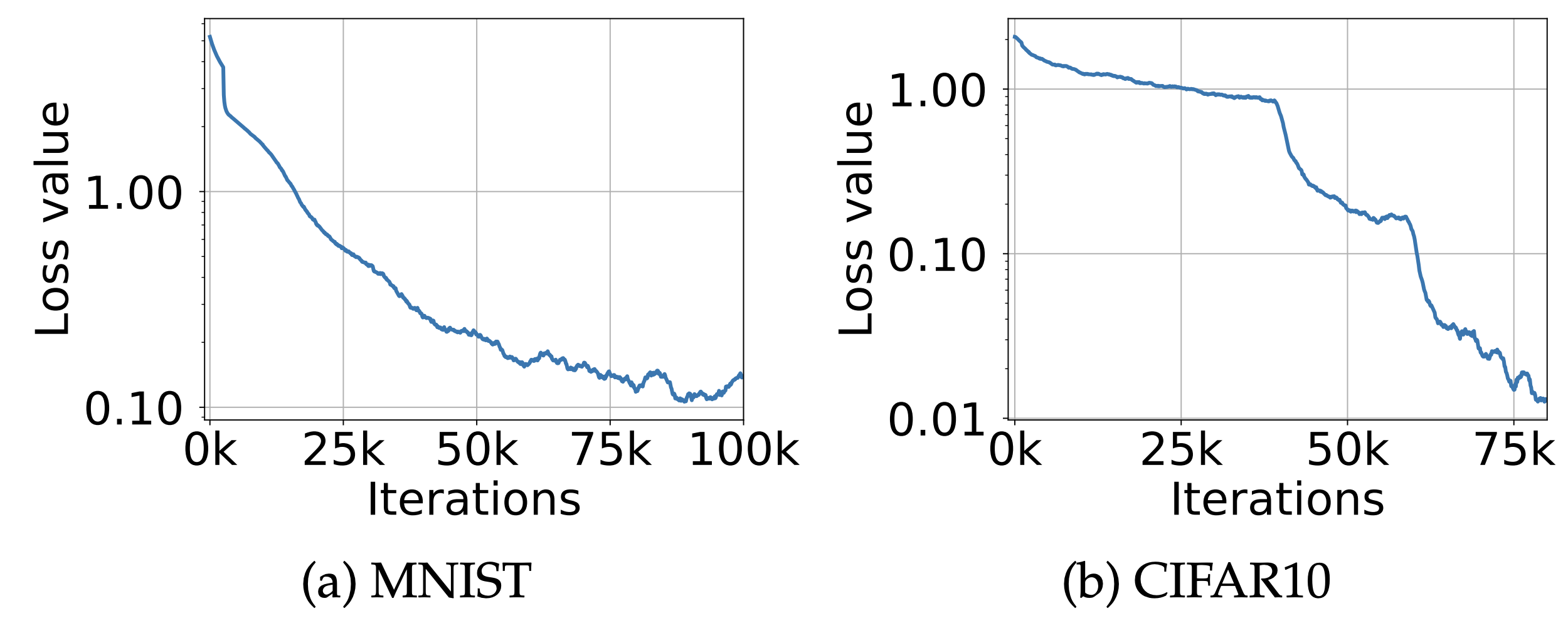

Madry et al. [3] wrote down the saddle-point formulation: define a defense by the worst-case inner-max loss it can resist within a fixed threat model, and treat any defense that fails a stronger attack inside that threat model as broken. The split separates two questions that were previously tangled together: the inner problem defines what the attacker can do, and the outer problem defines what the model has to optimize against. Projected gradient descent then becomes both the canonical attack and the canonical training procedure. It is not perfect, but it made robustness measurable enough that defenses could be compared honestly. Many proposed defenses then turned out not to be robust; they just break weak attacks. Athalye, Carlini, and Wagner cataloged the failure modes under one heading: obfuscated gradients. Stochastic preprocessing, non-differentiable transforms, exploding or vanishing gradients, and gradient shattering each make gradient-based attacks fail without making the classifier survive a stronger attack.
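The inner maximization can be sketched as an $\ell_\infty$ PGD loop: repeat a signed-gradient step, then project back onto the $\epsilon$-ball around the original input. The version below is a toy in plain Python under loud assumptions: `toy_loss` is an invented two-feature model, and `numerical_grad` uses central finite differences as a stand-in for the autodiff gradient a real implementation would use. It covers only the attacker's side; adversarial training would wrap an outer minimization over the model parameters around it.

```python
import math
import random

def numerical_grad(loss_fn, x, h=1e-5):
    """Central finite-difference gradient (a stand-in for autodiff)."""
    grad = []
    for i in range(len(x)):
        up = x[:i] + [x[i] + h] + x[i + 1:]
        down = x[:i] + [x[i] - h] + x[i + 1:]
        grad.append((loss_fn(up) - loss_fn(down)) / (2 * h))
    return grad

def pgd_linf(loss_fn, x, eps, step, iters, rng):
    """Approximate max of loss_fn over the l_inf ball of radius eps around x."""
    # Random start inside the ball.
    adv = [xi + rng.uniform(-eps, eps) for xi in x]
    for _ in range(iters):
        grad = numerical_grad(loss_fn, adv)
        # Ascent step along the sign of the gradient.
        adv = [ai + step * ((gi > 0) - (gi < 0)) for ai, gi in zip(adv, grad)]
        # Projection: clip each coordinate back into [x_i - eps, x_i + eps].
        adv = [min(max(ai, xi - eps), xi + eps) for ai, xi in zip(adv, x)]
    return adv

def toy_loss(x, y=1):
    """Logistic loss of a hypothetical nonlinear two-feature model."""
    margin = y * (math.tanh(2 * x[0]) - math.tanh(2 * x[1]))
    return math.log1p(math.exp(-margin))

x_clean = [0.5, -0.5]
x_adv = pgd_linf(toy_loss, x_clean, eps=0.3, step=0.1, iters=10,
                 rng=random.Random(0))
print("clean loss:      ", toy_loss(x_clean))
print("adversarial loss:", toy_loss(x_adv))
print("adversarial x:   ", x_adv)
```

The projection step is what ties the attack to the threat model: however many iterations run, the result never leaves the $\epsilon$-ball.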

They broke six of the nine ICLR-2018 defenses completely and a seventh partially, just by replacing the attack with a stronger one inside the same threat model. AutoAttack later turned that lesson into a parameter-free ensemble: a single attack you can run against a defense without per-defense tuning, which exposes inflated robustness numbers automatically. The other branch of progress is certified rather than empirical robustness. Cohen, Rosenfeld, and Kolter gave randomized-smoothing certificates: convolve the classifier $f$ with isotropic Gaussian noise of variance $\sigma^2$, and the smoothed classifier $g(x) = \arg\max_c \mathbb{P}_{\eta \sim \mathcal{N}(0,\sigma^2 I)}[f(x+\eta) = c]$ is provably robust within an $\ell_2$ ball of radius $\sigma\,\Phi^{-1}(p_A)$, where $\Phi^{-1}$ is the inverse standard normal CDF and $p_A$ is a lower confidence bound on the top-class probability.
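A minimal Monte Carlo sketch of the certificate, using only the Python standard library, might look like the following. Two simplifications are assumptions of this sketch and not the paper's procedure: the lower confidence bound on $p_A$ comes from a one-sided Hoeffding inequality (Cohen et al. use a Clopper-Pearson interval, which is tighter), and the base classifier `base_clf` is an invented half-space rule. The function names `sample_counts` and `certify` are likewise illustrative.

```python
import math
import random
from statistics import NormalDist

def sample_counts(f, x, sigma, n, rng):
    """Class counts of the base classifier f under Gaussian input noise."""
    counts = {}
    for _ in range(n):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        label = f(noisy)
        counts[label] = counts.get(label, 0) + 1
    return counts

def certify(f, x, sigma, n0, n, alpha, rng):
    """Return (label, l2_radius), or (None, 0.0) to abstain."""
    # Pick the candidate class on a small, separate sample.
    guess = sample_counts(f, x, sigma, n0, rng)
    top = max(guess, key=guess.get)
    # Estimate its probability on a fresh sample.
    counts = sample_counts(f, x, sigma, n, rng)
    p_hat = counts.get(top, 0) / n
    # One-sided Hoeffding lower bound holding with probability >= 1 - alpha
    # (a simplification; looser than Clopper-Pearson).
    p_lower = p_hat - math.sqrt(math.log(1.0 / alpha) / (2.0 * n))
    if p_lower <= 0.5:
        return None, 0.0
    return top, sigma * NormalDist().inv_cdf(p_lower)

def base_clf(x):
    """Hypothetical base classifier: a half-space on the first coordinate."""
    return 1 if x[0] > 0 else 0

label, radius = certify(base_clf, [1.0, 0.0], sigma=0.5, n0=100, n=2000,
                        alpha=0.001, rng=random.Random(0))
print("certified label:", label)
print("certified l2 radius:", radius)
```

In this toy case the input sits at distance 1.0 from the decision boundary, and the certified radius comes out smaller than that because the confidence bound is conservative.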

The definition of "adversarial examples" I prefer these days is "Adversarial examples are inputs to machine learning models that an attacker has intentionally designed to cause the model to make a mistake" https://t.co/GiXiQBCp5L

— Ian Goodfellow (@goodfellow_ian) April 12, 2018

The certificate is provable. The cost is that the radius is small in practice and only natural in $\ell_2$. For a less paper-indexed entry point, the Gradient Science adversarial robustness page is still one of the better maps of attacks, defenses, and the evaluation traps. RobustBench is the practical scoreboard, once the question becomes: does this defense survive standard attacks?

The accuracy trade-off

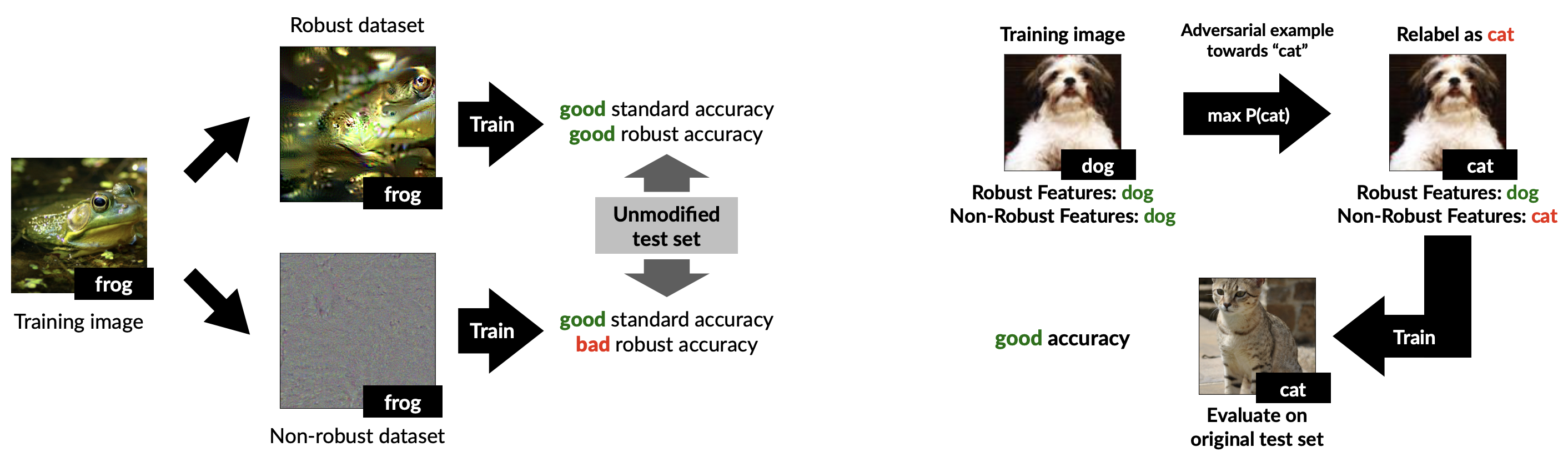

Tsipras et al. [4] made the uncomfortable point that robustness can conflict with standard accuracy. In their construction, the standard classifier uses weak but highly predictive features that are not robust. The robust classifier has to ignore them and therefore loses ordinary accuracy. The empirical version of this trade-off is more complicated, but the conceptual point survived: robustness is not just accuracy with additional caution.
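The flavor of that construction can be reproduced in a small simulation. The sketch below is in the spirit of the setup in [4] (one feature that agrees with the label with probability `p`, plus many weakly correlated Gaussian features with mean `eta * y`), but the specific values of `d`, `eta`, `p`, the sample size, and the function names are assumptions picked for illustration.

```python
import random

def sample(d, eta, p, rng):
    """One labelled point: x[0] is the robust feature, x[1:] are weak ones."""
    y = rng.choice((-1, 1))
    strong = float(y if rng.random() < p else -y)
    weak = [rng.gauss(eta * y, 1.0) for _ in range(d)]
    return [strong] + weak, y

def standard_clf(x):
    """Averages the weak features; very accurate on clean data."""
    return 1 if sum(x[1:]) > 0 else -1

def robust_clf(x):
    """Uses only the robust feature; accuracy is capped near p."""
    return 1 if x[0] > 0 else -1

def attack(x, y, eps):
    """l_inf adversary: push every coordinate by eps against the label."""
    return [xi - eps * y for xi in x]

def accuracy(clf, data, eps=0.0):
    hits = sum(clf(attack(x, y, eps)) == y for x, y in data)
    return hits / len(data)

rng = random.Random(0)
d, eta, p = 100, 0.3, 0.95
data = [sample(d, eta, p, rng) for _ in range(1000)]
eps = 2 * eta  # enough to flip the mean of every weak feature

results = {
    "standard_clean": accuracy(standard_clf, data),
    "standard_adv": accuracy(standard_clf, data, eps),
    "robust_clean": accuracy(robust_clf, data),
    "robust_adv": accuracy(robust_clf, data, eps),
}
for name, value in results.items():
    print(f"{name:>15}: {value:.3f}")
```

With these settings the averaging classifier should be close to perfect on clean data and close to zero under attack, while the single-feature classifier stays near `p` in both cases: it buys robustness by discarding the weak features that made the standard classifier accurate.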

A robust classifier may have to learn different features altogether. Ilyas et al. [5] put it bluntly: adversarial examples are not bugs, they are features. Their claim was not that every attack direction is semantically relevant to humans. It was that standard datasets contain predictive signal that models can use and that humans do not recognize as robust evidence. Adversarial perturbations take advantage of those signals. Robust training suppresses them. Schmidt et al. [6] put the price in statistical terms: the sample complexity of robust learning can be polynomially larger than the sample complexity of standard learning, an information-theoretic gap that holds irrespective of the training algorithm or the model family. In their Gaussian-mixture model, standard generalization needs only a constant number of samples, while robust generalization at $\ell_\infty$ radius $\epsilon$ requires a number of samples polynomial in $d$.

The gap comes from the structure of the learning problem, not the algorithm. Robustness is paying for invariance, and invariance costs in terms of sample complexity. Engstrom et al. then ran the experiment in the other direction: representations from robust classifiers transfer better than standard ones on a range of downstream tasks, look more semantically aligned in feature visualization, and yield gradients that resemble human-perceptible objects. Robustness, in this reading, is also a representation-learning prior. Whether the prior helps or hurts depends on the downstream task, but it is not free of structure.

The adversarial-examples literature forced a distinction between predictive validity and human-aligned validity. A feature can be statistically real, useful for test accuracy, and still unacceptable under a robustness constraint. That is a deeper issue than security: the supervised-learning objective does not fully specify the invariances I care about. To the extent that human perception is itself a strong inductive bias, models that do more representation learning end up closer to my geometry; robust models produce gradients and saliency maps that line up with what a person would identify as the object. In medical imaging, robotics, or safety-critical perception that trade-off is worth the cost. In low-stakes classification it often is not.

The "non-robust feature" label renames an older statistical fact. Predictive validity in distribution is not causal structure, and a model that maximizes the former will exploit signals the latter does not endorse. Adversarial training folds a robustness constraint into the objective. The cleaner long-term fix is on the data side: collect or augment so that the equivalence classes the human cares about are the equivalence classes the dataset enforces.

Further reading

- F. Croce and M. Hein. Reliable evaluation of adversarial robustness with an ensemble of diverse parameter-free attacks. arxiv 2003.01690, 2020.

- N. Carlini and D. Wagner. Towards evaluating the robustness of neural networks. arxiv 1608.04644, 2016.

- A. Athalye, N. Carlini, and D. Wagner. Obfuscated gradients give a false sense of security: circumventing defenses to adversarial examples. arxiv 1802.00420, 2018.

- J. M. Cohen, E. Rosenfeld, and J. Z. Kolter. Certified adversarial robustness via randomized smoothing. arxiv 1902.02918, 2019.

- L. Engstrom, A. Ilyas, S. Santurkar, D. Tsipras, B. Tran, and A. Madry. Adversarial robustness as a prior for learned representations. arxiv 1906.00945, 2019.

References

- [1] C. Szegedy, W. Zaremba, I. Sutskever, J. Bruna, D. Erhan, I. Goodfellow, and R. Fergus. Intriguing properties of neural networks. arxiv 1312.6199, 2013.

- [2] I. J. Goodfellow, J. Shlens, and C. Szegedy. Explaining and harnessing adversarial examples. arxiv 1412.6572, 2014.

- [3] A. Madry, A. Makelov, L. Schmidt, D. Tsipras, and A. Vladu. Towards deep learning models resistant to adversarial attacks. arxiv 1706.06083, 2017.

- [4] D. Tsipras, S. Santurkar, L. Engstrom, A. Turner, and A. Madry. Robustness may be at odds with accuracy. arxiv 1805.12152, 2018.

- [5] A. Ilyas, S. Santurkar, D. Tsipras, L. Engstrom, B. Tran, and A. Madry. Adversarial examples are not bugs, they are features. arxiv 1905.02175, 2019.

- [6] L. Schmidt, S. Santurkar, D. Tsipras, K. Talwar, and A. Madry. Adversarially robust generalization requires more data. arxiv 1804.11285, 2018.