Double descent

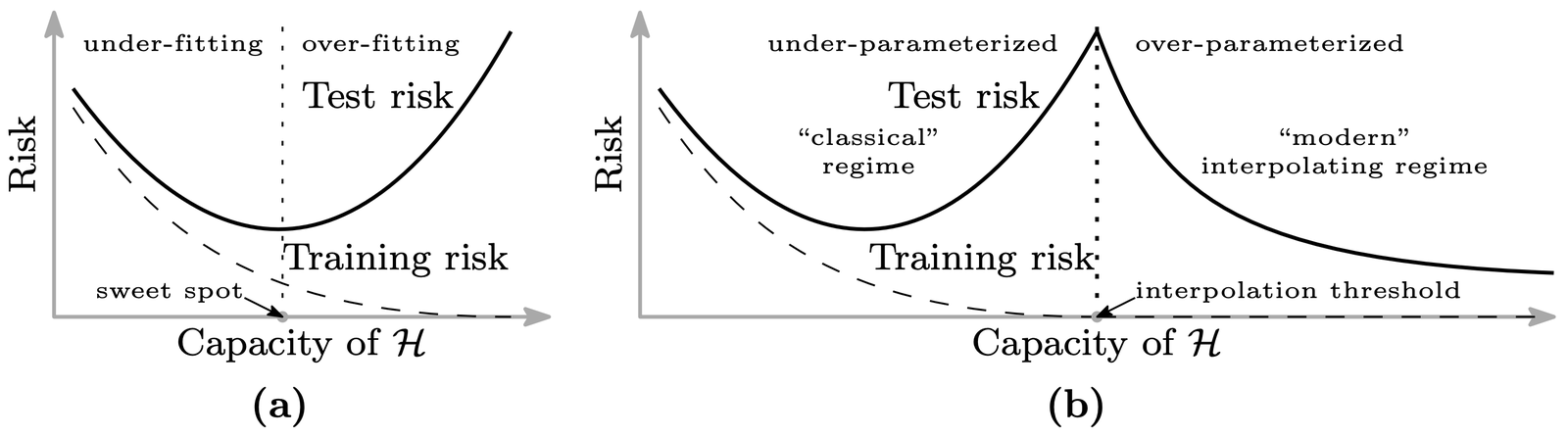

The classical U becomes a W, with a second descent in the overparameterized regime ($p > n$, more parameters than training samples), and that second descent often goes below the first minimum.
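A minimal sketch of that shape in the simplest setting I know of: minimum-norm least squares on the first $p$ of a larger pool of Gaussian features. Everything here is an assumption chosen for illustration (the function name `model_wise_curve`, the sizes `n_train=40` and `d_total=200`, the noise level); it is a toy linear model, not the ResNet experiments discussed below.

```python
import numpy as np

def model_wise_curve(p_values, n_train=40, d_total=200, noise_std=0.5,
                     n_trials=50, seed=0):
    """Mean test MSE of min-norm least squares fitted on the first p features.

    Inputs are isotropic Gaussian, so the expected squared error on a fresh
    noiseless test point equals ||theta_padded - beta||^2 exactly.
    """
    rng = np.random.default_rng(seed)
    errors = {p: [] for p in p_values}
    for _ in range(n_trials):
        beta = rng.normal(size=d_total) / np.sqrt(d_total)  # signal norm ~ 1
        X = rng.normal(size=(n_train, d_total))
        y = X @ beta + noise_std * rng.normal(size=n_train)
        for p in p_values:
            theta = np.zeros(d_total)
            # pinv gives ordinary least squares for p <= n_train and the
            # minimum-norm interpolant for p > n_train.
            theta[:p] = np.linalg.pinv(X[:, :p]) @ y
            errors[p].append(np.sum((theta - beta) ** 2))
    return {p: float(np.mean(v)) for p, v in errors.items()}

curve = model_wise_curve([5, 20, 40, 100, 200])
for p, err in curve.items():
    print(f"p={p:4d}  test MSE={err:.3f}")
```

Under these settings the error should spike near `p = n_train` and fall again as `p` grows; the exact numbers depend on the seed and the noise level.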

Nakkiran et al.

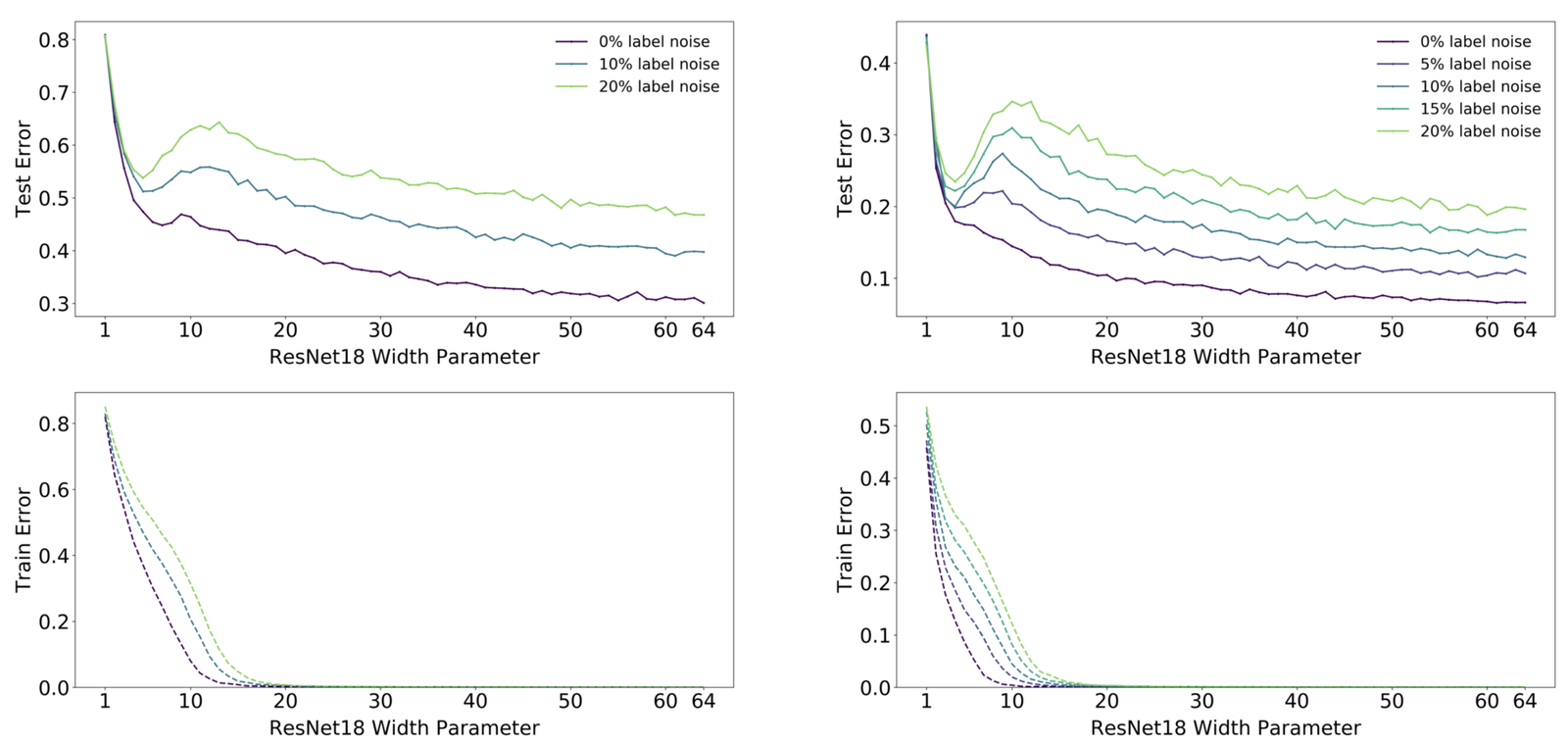

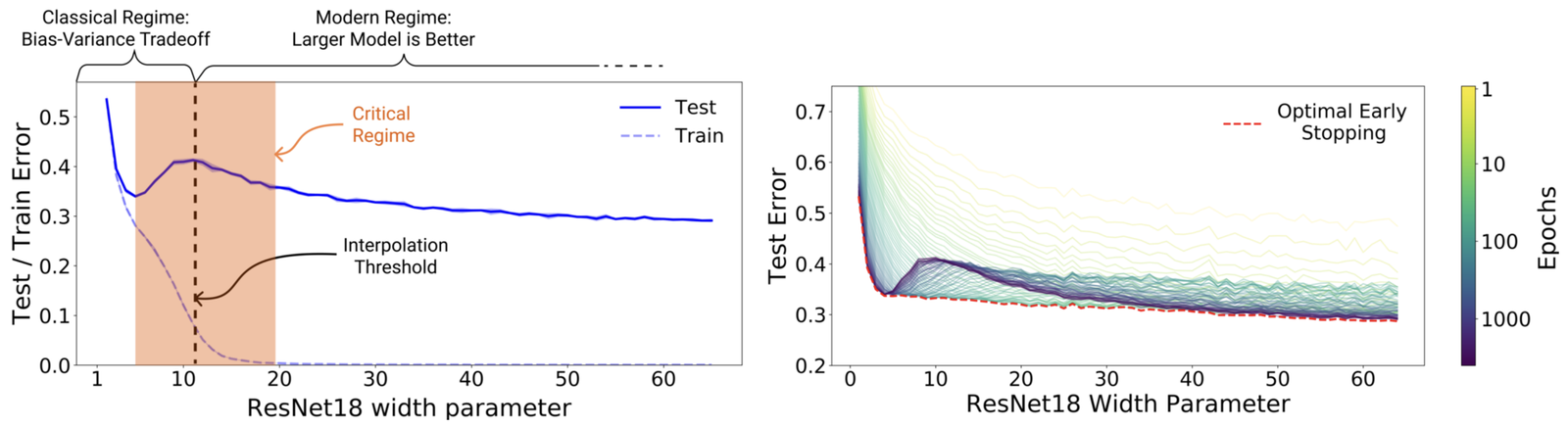

Nakkiran et al. [2] made the picture concrete by showing that the W-shape appears along three different axes: model size $p$, training time, and dataset size $n$. Model-wise double descent varies the width $k$ of a ResNet $f_\theta$; epoch-wise double descent varies the number of training steps; sample-wise double descent varies dataset size with everything else held fixed. The shape is the same each time: a test-error peak near the interpolation threshold, then a descent once you push past it.
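The sample-wise version is easy to reproduce in a toy setting. The sketch below holds the number of features fixed and varies only the training-set size, using minimum-norm least squares on a well-specified Gaussian linear model. The function name `sample_wise_curve` and all sizes are assumptions of this example, and a linear model is only a stand-in for the networks in [2].

```python
import numpy as np

def sample_wise_curve(n_values, p=50, noise_std=0.5, n_trials=100, seed=0):
    """Mean test MSE of min-norm least squares as the training-set size varies.

    Well-specified linear model with isotropic Gaussian inputs, so the
    expected squared error on a noiseless test point is ||theta - beta||^2.
    """
    rng = np.random.default_rng(seed)
    errors = {n: [] for n in n_values}
    for _ in range(n_trials):
        beta = rng.normal(size=p) / np.sqrt(p)  # signal norm ~ 1
        for n in n_values:
            X = rng.normal(size=(n, p))
            y = X @ beta + noise_std * rng.normal(size=n)
            theta = np.linalg.pinv(X) @ y  # min-norm fit when n < p, OLS when n >= p
            errors[n].append(np.sum((theta - beta) ** 2))
    return {n: float(np.mean(v)) for n, v in errors.items()}

curve = sample_wise_curve([25, 50, 200])
for n, err in curve.items():
    print(f"n={n:4d}  test MSE={err:.3f}")
```

With `p = 50` fixed, going from 25 to 50 samples should make test error worse, because `n = p` is the interpolation threshold here; going on to 200 samples brings it back down.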

Epoch-wise is the most surprising of the three. Within one run, test error gets worse before it gets better. The worst test error sits roughly at the iteration where training loss first hits zero; train past it and the test error drops again.

Label noise

The sharpest versions of the double descent peak in these papers come with label noise. Nakkiran's headline plots use ten to twenty percent corrupted labels. Without label noise, the peak is much weaker and sometimes absent. Label noise inflates the variance contribution of the model at the interpolation threshold because the model is being asked to memorize random labels at exactly the capacity where memorization is possible but not easy.
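For the linear-regression case, the role of noise can be read off the asymptotic risk of minimum-norm least squares with isotropic features, as analyzed in Hastie et al. [3]. The notation is my own shorthand for this sketch: $\gamma = p/n$ is the aspect ratio, $r^2 = \|\beta\|^2$ the signal strength, and $\sigma^2$ the variance of additive label noise, in the limit where $n, p \to \infty$ with $p/n \to \gamma$ and the model is well specified.

$$
R(\gamma) =
\begin{cases}
\sigma^2 \, \dfrac{\gamma}{1 - \gamma}, & \gamma < 1, \\[2ex]
r^2 \left(1 - \dfrac{1}{\gamma}\right) + \dfrac{\sigma^2}{\gamma - 1}, & \gamma > 1.
\end{cases}
$$

Both terms that blow up as $\gamma \to 1$ are multiplied by $\sigma^2$. Setting $\sigma^2 = 0$ leaves $R = 0$ below the threshold and $r^2(1 - 1/\gamma)$ above it, which has no peak at all. This is additive noise in regression rather than corrupted class labels, so treat it as an analogy for the classification experiments, not a derivation of them.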

Past the threshold, extra capacity absorbs the noise into higher-frequency components without disturbing the underlying signal. This is the hinge that connects the toy phenomenon to actual deep learning. Belkin's linear-regression result holds at all noise levels, but the peak is small without noise; Nakkiran's dramatic curves require label noise to be visible. At modern language-model scale, with clean labels and large models, test loss is close to monotone in parameter count, and scaling-law papers fit clean $L \propto C^{-\alpha}$ decay (loss $L$ against compute $C$) with no visible peak. The effect is real and the classical bias-variance picture is wrong in the overparameterized regime, but the large peak that gives double descent its name is specific to the label-noise case.
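For readers who have not fitted one, the $L \propto C^{-\alpha}$ form is a straight line in log-log space, so the exponent falls out of an ordinary linear fit. The sketch below uses synthetic, made-up data (the constants `10.0` and `0.05` are arbitrary and not taken from any paper), and `fit_power_law` is a hypothetical helper name.

```python
import numpy as np

def fit_power_law(compute, loss):
    """Fit loss ~ a * compute**(-alpha) by linear regression in log-log space."""
    slope, intercept = np.polyfit(np.log(compute), np.log(loss), deg=1)
    return float(-slope), float(np.exp(intercept))

# Synthetic data: a = 10, alpha = 0.05, with small multiplicative noise.
rng = np.random.default_rng(0)
compute = np.logspace(15, 22, num=20)
loss = 10.0 * compute ** -0.05 * np.exp(0.01 * rng.normal(size=compute.size))

alpha_hat, a_hat = fit_power_law(compute, loss)
print(f"alpha_hat = {alpha_hat:.4f}, a_hat = {a_hat:.3f}")
```

A curve with a double descent peak inside the fitted range would show up as a systematic bump in the residuals of this fit, which is one quick way to check whether a given sweep sits entirely to the right of the peak.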

Boaz Barak's Windows on Theory post makes a version of this argument: the interesting part of double descent is to the right of the peak, not the peak itself. OpenAI's Deep Double Descent post took Nakkiran to a much wider audience and posed the sharper question: given this effect, what kind of complexity control (if any) actually predicts generalization? Google Research's "A new lens on understanding generalization in deep learning" recast double descent in terms of an effective-capacity measure that tracks the empirical curves better than parameter count does. Misha Belkin's Simons Institute talks are the video account I keep sending people who want to see how the picture has changed since 2018.

> So, "double descent" is happening b/c DF isn't really the right quantity for the the x-axis: like, the fact that we are choosing the minimum norm least squares fit actually means that the spline with 36 DF is **less** flexible than the spline with 20 DF.
>
> Crazy, huh?
>
> 19/
>
> Daniela Witten (@daniela_witten), August 9, 2020
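The minimum-norm point in the quoted tweet can be checked numerically. The sketch below uses random Gaussian columns as a stand-in for a spline basis (an assumption; the thread itself uses splines), with $n = 20$ samples and nested feature sets of size 20 and 36. The function name `min_norm_coef_norms` is hypothetical. Because the 20-feature interpolant padded with zeros is still an interpolant with 36 features, the minimum-norm solution with 36 features cannot have a larger coefficient norm.

```python
import numpy as np

def min_norm_coef_norms(n=20, p_values=(20, 36), seed=0):
    """Coefficient norm and max residual of the min-norm fit for nested feature sets."""
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, max(p_values)))  # stand-in for a basis expansion
    y = rng.normal(size=n)
    out = {}
    for p in p_values:
        Xp = X[:, :p]                      # first p columns, so the sets are nested
        theta = np.linalg.pinv(Xp) @ y     # minimum-norm least squares solution
        residual = float(np.max(np.abs(Xp @ theta - y)))
        out[p] = (float(np.linalg.norm(theta)), residual)
    return out

results = min_norm_coef_norms()
for p, (norm, residual) in results.items():
    print(f"p={p}: ||theta|| = {norm:.3f}, max residual = {residual:.2e}")
```

Both fits interpolate the training data, but the one with more features does it with a smaller coefficient vector. Coefficient norm is only a proxy for "flexibility", which is the sense in which the quote says raw DF is the wrong x-axis.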

I'm closer to Barak's reading than to what filtered down to practitioner intros.

After the label-noise caveat, two results from this line of work hold up. Classical capacity measures like VC dimension do not extend cleanly to the overparameterized regime and cannot be expected to predict generalization there. And overparameterized networks with astronomically large VC dimensions can sit well below smaller networks in test error on the same task — which implies the loss landscape is doing the selection: from a vast pool of interpolating solutions, the optimizer is picking a small subset that generalizes.

What practitioners did with this was simpler than what the theory suggested: once you are in the overparameterized regime and you have compute to spend, bigger is usually better. The second descent has no obvious endpoint, which is why Kaplan-style scaling laws can fit clean power-law decay in compute — they are sitting entirely on the right-hand, log-log-linear side of the W-curve. The dramatic 2019 reading of double descent was that the bias-variance tradeoff is fiction and overfitting no longer exists. The second half of that is trivially untrue (overfitting is easy to produce in any small-data regime). The first half is more delicate: above the interpolation threshold, with implicit min-norm regularization ($\theta^\star = \arg\min_{f_\theta(X)=y} \|\theta\|$), larger models tend to generalize better rather than worse. That is a statement about a regime, not a law.
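The "implicit" part of the min-norm formula is easiest to see for a linear model $f_\theta(x) = x^\top \theta$. Gradient descent on squared loss started from $\theta = 0$ never leaves the row space of $X$, and the only interpolating solution in that row space is the minimum-norm one. The sketch below checks this against the pseudoinverse; the function name, step count, and problem sizes are assumptions for illustration, and nothing here says the same holds exactly for deep networks.

```python
import numpy as np

def gradient_descent_from_zero(X, y, steps=2000):
    """Plain gradient descent on 0.5 * ||X @ theta - y||^2, starting at zero."""
    lr = 1.0 / np.linalg.norm(X, 2) ** 2   # 1 / (largest singular value)^2
    theta = np.zeros(X.shape[1])
    for _ in range(steps):
        theta -= lr * X.T @ (X @ theta - y)
    return theta

rng = np.random.default_rng(0)
X = rng.normal(size=(20, 100))             # n = 20 samples, p = 100 parameters
y = rng.normal(size=20)

theta_gd = gradient_descent_from_zero(X, y)
theta_min_norm = np.linalg.pinv(X) @ y     # explicit minimum-norm interpolant

print("max |theta_gd - theta_min_norm| =", np.max(np.abs(theta_gd - theta_min_norm)))
print("max training residual =", np.max(np.abs(X @ theta_gd - y)))
```

No norm penalty appears anywhere in the loop; the preference for small $\|\theta\|$ comes entirely from the initialization and the update rule.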

Another claim that does not survive the empirical record is that the peak is always exactly at the interpolation threshold. In practice, the exact location of the peak in Nakkiran's ResNet experiments depends on the effective number of parameters under whatever implicit regularization is in use, not on the total parameter count. The peak does not occur precisely at the width at which training error ($L_{\text{train}}$) first reaches zero; it sits slightly past that point, where the network has just enough capacity to memorize the noisy labels and does so at the expense of the underlying signal.
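One textbook way to make "effective number of parameters" concrete is the effective degrees of freedom of ridge regression, $\mathrm{df}(\lambda) = \sum_i s_i^2 / (s_i^2 + \lambda)$, where $s_i$ are the singular values of the design matrix. This is explicit ridge regularization on a linear model, used here only as an illustration; it is not the implicit regularization of SGD on a ResNet and not the complexity measure defined in [2]. The helper name `ridge_effective_dof` and the sizes are assumptions.

```python
import numpy as np

def ridge_effective_dof(X, lam):
    """Effective degrees of freedom of ridge regression: sum_i s_i^2 / (s_i^2 + lam)."""
    s = np.linalg.svd(X, compute_uv=False)
    return float(np.sum(s ** 2 / (s ** 2 + lam)))

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 200))   # 200 raw parameters, 50 samples

dof = {lam: ridge_effective_dof(X, lam) for lam in (1e-8, 10.0, 1000.0)}
for lam, value in dof.items():
    print(f"lambda={lam:g}: effective dof = {value:.2f} (raw parameter count = {X.shape[1]})")
```

The raw parameter count stays at 200 throughout, while the effective count is capped at the number of samples and shrinks as regularization grows. A peak located by effective capacity can therefore sit at a different raw width than the one where training error first reaches zero.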

Further reading

- J. Kaplan, S. McCandlish, T. Henighan, T. B. Brown, B. Chess, R. Child, S. Gray, A. Radford, J. Wu, and D. Amodei. Scaling laws for neural language models. arXiv:2001.08361, 2020.

- S. d'Ascoli, M. Refinetti, G. Biroli, and F. Krzakala. Double trouble in double descent: Bias and variance(s) in the lazy regime. arXiv:2003.01054, 2020.

- P. Nakkiran, P. Venkat, S. Kakade, and T. Ma. Optimal regularization can mitigate double descent. arXiv:2003.01897, 2020.

- G. Yang, E. J. Hu, I. Babuschkin, S. Sidor, X. Liu, D. Farhi, N. Ryder, J. Pachocki, W. Chen, and J. Gao. Tensor Programs V: Tuning large neural networks via zero-shot hyperparameter transfer. arXiv:2203.03466, 2022.

- B. Adlam and J. Pennington. Understanding double descent requires a fine-grained bias-variance decomposition. NeurIPS, 2020.

- R. Schaeffer et al. Double descent demystified. ICLR Blogposts, 2024.

References

- [1] M. Belkin, D. Hsu, S. Ma, and S. Mandal. Reconciling modern machine learning practice and the bias-variance trade-off. arXiv:1812.11118, 2018.

- [2] P. Nakkiran, G. Kaplun, Y. Bansal, T. Yang, B. Barak, and I. Sutskever. Deep double descent: Where bigger models and more data hurt. arXiv:1912.02292, 2019.

- [3] T. Hastie, A. Montanari, S. Rosset, and R. J. Tibshirani. Surprises in high-dimensional ridgeless least squares interpolation. arXiv:1903.08560, 2019.