The edge of stability

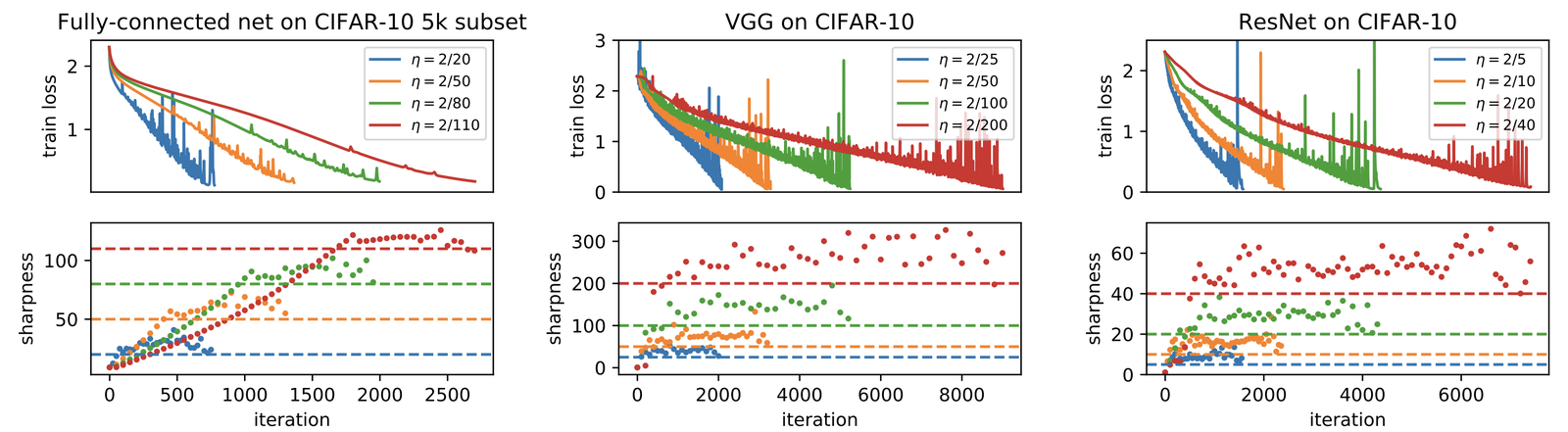

Cohen et al. [1] observed that full-batch gradient descent on neural networks spends most of training in a regime where the top Hessian eigenvalue $\lambda_{\max}$ hovers at or just above the classical stability threshold $2/\eta$, where $\eta$ is the step size. The step is formally unstable ($\eta \lambda_{\max} > 2$), but the loss does not diverge: the trajectory oscillates along the unstable direction while continuing to make progress along the others, so the loss keeps falling over long horizons even though it is no longer monotone from step to step. They called this the edge of stability, and the point is that it is not an edge case but the ordinary regime of neural-network training.
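The $2/\eta$ threshold comes from the simplest possible case. On a one-dimensional quadratic $f(x) = \tfrac{\lambda}{2}x^2$ the gradient step is $x_{t+1} = (1 - \eta\lambda)\,x_t$, which contracts only when $|1 - \eta\lambda| < 1$, i.e. $\eta\lambda < 2$. A minimal sketch in Python; the function name and the constants are illustrative choices, not taken from [1]:

```python
def gd_on_quadratic(lam: float, eta: float, x0: float = 1.0, steps: int = 20) -> list[float]:
    """Run gradient descent on f(x) = lam / 2 * x**2 and return every iterate."""
    xs = [x0]
    for _ in range(steps):
        grad = lam * xs[-1]               # f'(x) = lam * x
        xs.append(xs[-1] - eta * grad)    # same as multiplying by (1 - eta * lam)
    return xs


eta = 0.1                                 # step size; the threshold is 2 / eta = 20
for lam in (5.0, 19.0, 21.0):             # well below, just below, just above 2 / eta
    xs = gd_on_quadratic(lam, eta)
    print(f"lam={lam:4.1f}  eta*lam={eta * lam:.1f}  |x_20|={abs(xs[-1]):.3g}")
```

With $\eta\lambda = 1.9$ the iterate flips sign every step but still shrinks; with $\eta\lambda = 2.1$ the same oscillation grows geometrically. This fixed-curvature picture is exactly what neural-network training appears to violate.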

Progressive sharpening

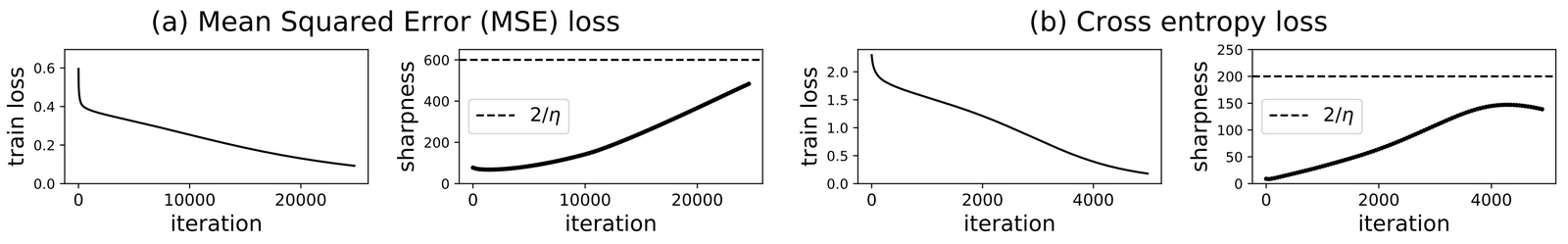

The first phase Cohen et al. identified is progressive sharpening. From random initialization, gradient descent reliably drives the sharpness of the loss landscape, $\lambda_{\max}(H)$ for the training-loss Hessian $H$, upward during training.

That direction is already counterintuitive. Classical optimization theory says you want to avoid sharp regions, since the largest stable step size there, $2/\lambda_{\max}$, is smaller. Neural-network training does the opposite: it walks into sharper regions until the chosen step size is no longer classically stable. Progressive sharpening itself is not well understood from first principles. Damian et al. [2] give a self-stabilization argument for what happens after the threshold is reached, and Ahn et al. [4] give a complementary analysis of the same unstable regime, but neither predicts the sharpening from initialization. The observational picture is clean; the theoretical one is not.
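Observing progressive sharpening means tracking $\lambda_{\max}(H)$ during training, and for a real network the Hessian is never formed explicitly. Practical measurements typically use an iterative eigensolver (power iteration or Lanczos) that touches $H$ only through Hessian-vector products. The sketch below assumes a toy logistic-regression model in NumPy and approximates $Hv$ by central finite differences of the gradient instead of automatic differentiation; all function names are hypothetical. Because this Hessian is positive semidefinite, the largest-magnitude eigenvalue that power iteration finds is $\lambda_{\max}$.

```python
import numpy as np


def loss_grad(w, X, y):
    """Gradient of the mean logistic loss, labels y in {0, 1}."""
    p = 1.0 / (1.0 + np.exp(-(X @ w)))
    return X.T @ (p - y) / len(y)


def hvp(w, v, X, y, eps=1e-5):
    """Hessian-vector product H v via central differences of the gradient."""
    return (loss_grad(w + eps * v, X, y) - loss_grad(w - eps * v, X, y)) / (2 * eps)


def sharpness(w, X, y, iters=100, seed=0):
    """Estimate lambda_max(H) by power iteration, touching H only through H v."""
    rng = np.random.default_rng(seed)
    v = rng.standard_normal(w.shape)
    v /= np.linalg.norm(v)
    lam = 0.0
    for _ in range(iters):
        hv = hvp(w, v, X, y)
        lam = float(v @ hv)               # Rayleigh quotient v^T H v (v has unit norm)
        v = hv / np.linalg.norm(hv)
    return lam


rng = np.random.default_rng(1)
X = rng.standard_normal((200, 5))
y = (rng.random(200) < 0.5).astype(float)
w = 0.1 * rng.standard_normal(5)
print("estimated sharpness:", sharpness(w, X, y))
```

Calling `sharpness` at regular intervals along a training run gives the kind of sharpness curve plotted in the figures above.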

Above the threshold

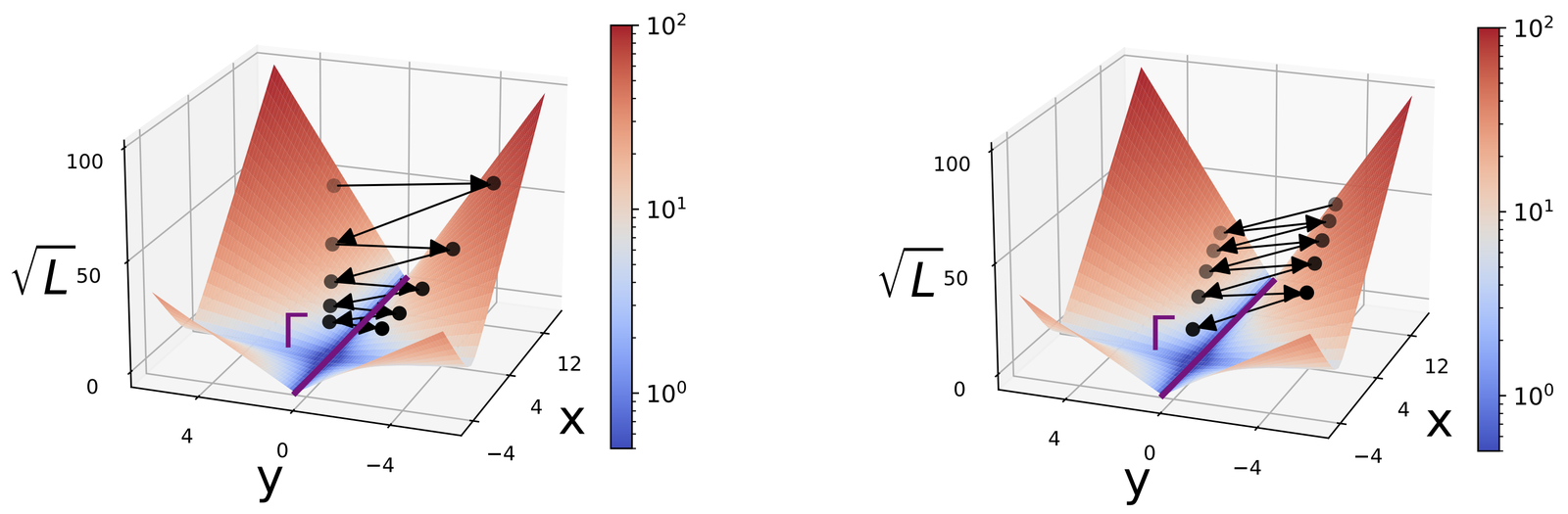

Once sharpness crosses $2/\eta$, the textbook prediction is divergence. Instead, the trajectory oscillates along the top eigendirection of the Hessian: the component of the iterate along that direction swings back and forth, and the loss still falls because optimization keeps making progress along the better-conditioned directions. The net result is a trajectory that reduces loss while sitting in a region of the landscape that classical theory says it should not occupy. An early theoretical account of why this need not be a pathology comes from Arora et al. [3]. Their analysis works on a smoothed version of the loss, where the Hessian is treated as locally fixed, and in effect tracks the trajectory that gradient descent would follow from a given starting point under that fixed Hessian.

The mechanism is that the oscillation $\Delta \theta_t$ across the unstable direction averages to zero over successive steps, so the effective dynamics are slower and look like gradient descent on a loss with the steepest direction clipped. The account is formal enough to be checked against real training runs, and in the settings where it has been checked it largely holds up.
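A quadratic cannot reproduce full edge-of-stability behavior: its curvature never changes, so anything strictly above $2/\eta$ simply diverges. It can still show the geometry of the oscillation and of the averaging argument. The toy below is our own, not the construction in [3]: gradient descent on $f(u, v) = \tfrac12(8u^2 + v^2)$ with $\eta = 0.25$, which puts the sharp direction $u$ exactly at $\eta\lambda_{\max} = 2$. The $u$ component flips sign every step, so the displacements $\Delta\theta_t$ along $u$ cancel in pairs, while the midpoints of consecutive iterates follow plain gradient descent on the clipped loss $\tfrac12 v^2$, with the sharp direction dropped.

```python
import numpy as np

H = np.diag([8.0, 1.0])   # sharp direction u (eigenvalue 8), flat direction v (eigenvalue 1)
eta = 0.25                # eta * 8 = 2: the sharp direction sits exactly at the threshold

theta = np.array([1.0, 1.0])
iterates = [theta]
for _ in range(8):
    theta = theta - eta * (H @ theta)     # gradient of 0.5 * theta^T H theta is H theta
    iterates.append(theta)
iterates = np.array(iterates)             # shape (9, 2): rows are theta_0 .. theta_8

deltas = np.diff(iterates, axis=0)        # Delta theta_t = theta_{t+1} - theta_t
two_step = deltas[0::2] + deltas[1::2]    # u parts cancel in pairs, v parts do not

midpoints = 0.5 * (iterates[:-1] + iterates[1:])   # crude time-averaged trajectory

# Gradient descent on the clipped loss 0.5 * v^2, started from the first midpoint.
v_clipped = midpoints[0, 1] * (1 - eta * 1.0) ** np.arange(len(midpoints))

print("u iterates:      ", iterates[:, 0])
print("two-step u moves:", two_step[:, 0])
print("midpoint u:      ", midpoints[:, 0])
print("midpoint v matches clipped GD:", np.allclose(midpoints[:, 1], v_clipped))
```

In a real network the curvature responds to the oscillation, which is what lets the iterate sit slightly above $2/\eta$ without blowing up; this toy only pins it exactly at the boundary.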

Edge of stability and flat minima

The earlier flat-minima story started with Hochreiter and Schmidhuber (1997) and continued with Keskar et al. [7] and the later sharpness-aware minimization (SAM) literature. Broadly, it ran as follows: SGD with a small batch size produces gradient estimates with some noise $\xi$.

That noise acts like a random walk and tends to escape sharp minima more readily than flat ones, so SGD ends up biased toward flat minima, and that bias was meant to be why neural networks generalize. Edge of stability does not contradict the story, but it reshapes it. The learning rate itself caps how sharp a reachable minimum can be: any minimum with $\lambda_{\max} > 2/\eta$ is unstable for GD, so the trajectory cannot stay there. Gradient descent finds flat minima not because it has noise, but because sharp minima are unstable fixed points under its own dynamics. The original explanation identified the phenomenon and pinned it to the wrong cause.
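The cap can be phrased as a linear-stability check. Near a minimum the loss is approximately quadratic with Hessian $H$, and gradient descent at step $\eta$ can stay there only if $\lambda_{\max}(H) \le 2/\eta$ (equality is the marginal case). The sketch filters a few hypothetical minima, each described only by a made-up diagonal Hessian; it illustrates the arithmetic, not how minima are found in practice, and the function name is ours.

```python
import numpy as np


def is_linearly_stable(hessian: np.ndarray, eta: float) -> bool:
    """GD at step eta can stay at a minimum (linearized) only if lambda_max(H) <= 2 / eta."""
    lam_max = np.linalg.eigvalsh(hessian)[-1]   # eigvalsh returns eigenvalues in ascending order
    return bool(lam_max <= 2.0 / eta)


# Hypothetical minima, described only by their symmetric Hessians.
minima = {
    "flat": np.diag([0.5, 0.1]),
    "medium": np.diag([15.0, 2.0]),
    "sharp": np.diag([80.0, 5.0]),
}
for eta in (0.01, 0.1, 0.5):
    stable = [name for name, H in minima.items() if is_linearly_stable(H, eta)]
    print(f"eta={eta}: 2/eta={2 / eta:g}, stable minima: {stable}")
```

Raising $\eta$ shrinks the set of minima the trajectory can settle in, starting with the sharpest, with no noise involved.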

Much of this reframing lives outside the papers themselves. Off Convex has a few posts on implicit bias, trajectory analysis, and why the classical descent lemma is genuinely misleading for neural networks rather than merely approximate. Ben Recht's ArgMin is the complementary skeptical take on what is left once you step outside classical convex optimization theory, where the Hessian is sandwiched as $\mu I \preceq \nabla^2 f \preceq L\,I$. Clare Lyle's tutorial walks through the $2/\eta$ arithmetic and ties the phenomenon to warmup (a rising $\eta(t)$) and catapult dynamics (a loss spike followed by decay) in one frame.
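For reference, the descent lemma in its usual form: if $f$ is $L$-smooth, so that $\nabla^2 f \preceq L\,I$, then one gradient step of size $\eta$ satisfies

$$
f\big(\theta - \eta \nabla f(\theta)\big) \le f(\theta) - \eta\Big(1 - \frac{L\eta}{2}\Big)\lVert \nabla f(\theta) \rVert^2 .
$$

The bracket is positive, and decrease is guaranteed, only when $\eta < 2/L$. Taking $L$ to be the local sharpness $\lambda_{\max}$ (a heuristic, since $L$ is formally a global bound), this is the same $2/\eta$ threshold read the other way round. At the edge of stability, where $\eta\lambda_{\max} > 2$, the bound promises no decrease at all, yet the loss still falls over long horizons.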

Andreyev and Beneventano (arxiv 2412.20553) extended the story to the mini-batch setting, which Cohen et al. mostly left aside, introducing an "edge of stochastic stability" where the quantity that pins at $2/\eta$ is the expected directional curvature of mini-batch Hessians rather than the top eigenvalue of the full-batch Hessian.
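To make the mini-batch quantity concrete, here is our reading of it, which is an assumption to check against the paper: for each mini-batch $B$, take the curvature of the mini-batch Hessian $H_B$ along that batch's own gradient $g_B$, i.e. the Rayleigh quotient $g_B^\top H_B g_B / \lVert g_B \rVert^2$, and average over batches. The sketch estimates it by Monte Carlo for a least-squares model, where $H_B = X_B^\top X_B / b$ has a closed form; the model, data, and function name are hypothetical.

```python
import numpy as np


def batch_sharpness(w, X, y, batch_size, n_batches=500, seed=0):
    """Monte Carlo estimate of E_B[g_B^T H_B g_B / ||g_B||^2] for least squares (assumed definition)."""
    rng = np.random.default_rng(seed)
    vals = []
    for _ in range(n_batches):
        idx = rng.choice(len(y), size=batch_size, replace=False)
        Xb, yb = X[idx], y[idx]
        g = Xb.T @ (Xb @ w - yb) / batch_size     # mini-batch gradient
        Hb = Xb.T @ Xb / batch_size               # mini-batch Hessian (constant in w here)
        vals.append(g @ Hb @ g / (g @ g))         # curvature of H_B along g_B
    return float(np.mean(vals))


rng = np.random.default_rng(0)
X = rng.standard_normal((512, 10))
y = X @ rng.standard_normal(10) + 0.1 * rng.standard_normal(512)
w = np.zeros(10)
full_H = X.T @ X / len(y)
print("top eigenvalue of full Hessian:", np.linalg.eigvalsh(full_H)[-1])
for b in (8, 64, 512):
    print(f"batch size {b:3d}: batch sharpness ~ {batch_sharpness(w, X, y, b):.3f}")
```

With the full batch the estimate reduces to the Rayleigh quotient of the full-batch gradient, which can never exceed the full Hessian's top eigenvalue; for smaller batches the printed values are what one would compare against $2/\eta$.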

A few previously folklore-level phenomena become intelligible from this picture. Warmup schedules $\eta(t)$, which start small and increase $\eta$ over several thousand iterations, let the network settle into the edge-of-stability regime before $\eta$ reaches its final value; without warmup, the early transient at the full $\eta$ can hit a region that is too sharp for that step size and diverge. Decreasing-$\eta$ schedules at the end of training raise $2/\eta$, so the trajectory can settle into sharper local minima inside the broader flat region it has already reached, which empirically pushes training loss down further.
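To see how a schedule moves the threshold, it helps to tabulate $2/\eta(t)$ next to $\eta(t)$. The schedule below (linear warmup, then linear decay) and all of its constants are hypothetical, not a recommendation or any particular paper's recipe. During warmup $2/\eta$ starts very large and falls toward its final level, giving sharpness time to rise and meet it; during decay $2/\eta$ climbs again, leaving room for sharper minima.

```python
def lr_schedule(step, peak_lr=0.1, warmup_steps=2000, total_steps=20000, final_lr=0.001):
    """Hypothetical schedule: linear warmup to peak_lr, then linear decay to final_lr."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps           # rising eta(t)
    frac = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr + frac * (final_lr - peak_lr)              # falling eta(t)


for step in (0, 500, 1999, 2000, 11000, 19999):
    eta = lr_schedule(step)
    print(f"step {step:5d}: eta = {eta:.5f}, stability threshold 2/eta = {2 / eta:.1f}")
```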

Both schedules had been used empirically for years before any principled account existed. Lewkowycz et al.'s catapult mechanism [5], where an initial loss spike sometimes precedes a better final solution, is the same dynamics in a more extreme form: a learning rate above $2/\lambda_{\max}$ at initialization makes the loss spike, sharpness drops during the spike until the step is stable again, and the trajectory then settles into a flatter basin than the one it would have reached at a smaller step size.

The generalization gap is still open

Edge of stability gives a clean account of why gradient descent ends up in flat minima, but it says nothing about why flat minima generalize. Those are distinct questions, and the second one is still open.

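Dinh et al. [6] showed that the Hessian-based notion of sharpness is not invariant to reparameterization: in a ReLU network, scaling one layer's weights up by $\alpha$ and the next layer's down by $1/\alpha$ leaves the function unchanged but can make a minimum arbitrarily sharp. Sharpness in that form therefore cannot, on its own, control generalization.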
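The rescaling argument is easy to reproduce on a toy of our own choosing: a one-unit ReLU network $f(x) = w_2\,\mathrm{relu}(w_1 x)$ fit to a single point. Because $\mathrm{relu}(\alpha z) = \alpha\,\mathrm{relu}(z)$ for $\alpha > 0$, the map $(w_1, w_2) \mapsto (\alpha w_1, w_2/\alpha)$ leaves every prediction unchanged, yet at a zero-loss minimum the top Hessian eigenvalue is $x^2(w_1^2 + w_2^2)$, which grows without bound in $\alpha$. The function names and data are illustrative.

```python
import numpy as np


def relu(z):
    return np.maximum(z, 0.0)


def predict(w1, w2, x):
    """Tiny ReLU net with one hidden unit: f(x) = w2 * relu(w1 * x)."""
    return w2 * relu(w1 * x)


def loss_hessian(w1, w2, x, y):
    """Exact Hessian of 0.5 * (f(x) - y)^2 w.r.t. (w1, w2), valid where w1 * x > 0."""
    r = predict(w1, w2, x) - y                 # residual; zero at a global minimum
    cross = w1 * w2 * x ** 2 + r * x
    return np.array([[(w2 * x) ** 2, cross],
                     [cross, (w1 * x) ** 2]])


x, y = 1.0, 2.0
w1, w2 = 1.0, 2.0                              # a global minimum: w2 * relu(w1 * x) == y
for alpha in (1.0, 10.0, 100.0):
    a, b = alpha * w1, w2 / alpha              # rescale the two layers in opposite directions
    sharp = np.linalg.eigvalsh(loss_hessian(a, b, x, y))[-1]
    print(f"alpha={alpha:6.1f}  f(x)={predict(a, b, x):.3f}  sharpness={sharp:.1f}")
```

The prediction, and hence the loss, stays the same for every $\alpha$, while the sharpness runs from 5 to roughly $10^4$.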

Even with full-batch gradients, DL optimizers defy classical optimization theory, as they operate at the *edge of stability.*

With @alex_damian_, we introduce "central flows": a theoretical tool to analyze these dynamics that makes accurate quantitative predictions on real NNs.

(Jeremy Cohen, @deepcohen, October 1, 2025)

Further reading

- deep dive into the edge of stability

- J. Frankle, G. K. Dziugaite, D. M. Roy, and M. Carbin. Linear mode connectivity and the lottery ticket hypothesis. arxiv 1912.05671, 2019

- P. M. Long and P. L. Bartlett. Sharpness-aware minimization and the edge of stability. Journal of Machine Learning Research, 2024

- J. M. Cohen, A. Damian, A. Talwalkar, J. Z. Kolter, and J. D. Lee. Understanding optimization in deep learning with central flows. arxiv 2410.24206, 2024

- S. Hochreiter and J. Schmidhuber. Flat minima. Neural Computation, 9(1):1-42, 1997

References

- [1] J. M. Cohen, S. Kaur, Y. Li, J. Z. Kolter, and A. Talwalkar. Gradient descent on neural networks typically occurs at the edge of stability. arxiv 2103.00065, 2021.

- [2] A. Damian, E. Nichani, and J. D. Lee. Self-stabilization: The implicit bias of gradient descent at the edge of stability. arxiv 2209.15594, 2022.

- [3] S. Arora, Z. Li, and A. Panigrahi. Understanding gradient descent on edge of stability in deep learning. arxiv 2205.09745, 2022.

- [4] K. Ahn, J. Zhang, and S. Sra. Understanding the unstable convergence of gradient descent. arxiv 2204.01050, 2022.

- [5] A. Lewkowycz, Y. Bahri, E. Dyer, J. Sohl-Dickstein, and G. Gur-Ari. The large learning rate phase of deep learning: The catapult mechanism. arxiv 2003.02218, 2020.

- [6] L. Dinh, R. Pascanu, S. Bengio, and Y. Bengio. Sharp minima can generalize for deep nets. arxiv 1703.04933, 2017.

- [7] N. S. Keskar, D. Mudigere, J. Nocedal, M. Smelyanskiy, and P. T. P. Tang. On large-batch training for deep learning: generalization gap and sharp minima. arxiv 1609.04836, 2016.