On flat minima

Whether flat minima generalize better than sharp ones has been an open question for about seven years. The debate seems to close every year and reopen a year later. Most readers entering the field encounter it as a settled topic in some textbook chapter, in one direction or the other, when in fact it is not settled. This is where I think it actually stands.

Hochreiter, Schmidhuber, and the original intuition

Hochreiter and Schmidhuber introduced the idea in 1997: a minimum that sits in a broad, low-curvature valley should generalize better than one in a sharp valley. The argument is roughly a minimum description length (MDL) one: the broad solution requires fewer bits to specify and is correspondingly less tied to the noise in any one training set. The intuition lay mostly dormant for the next two decades.

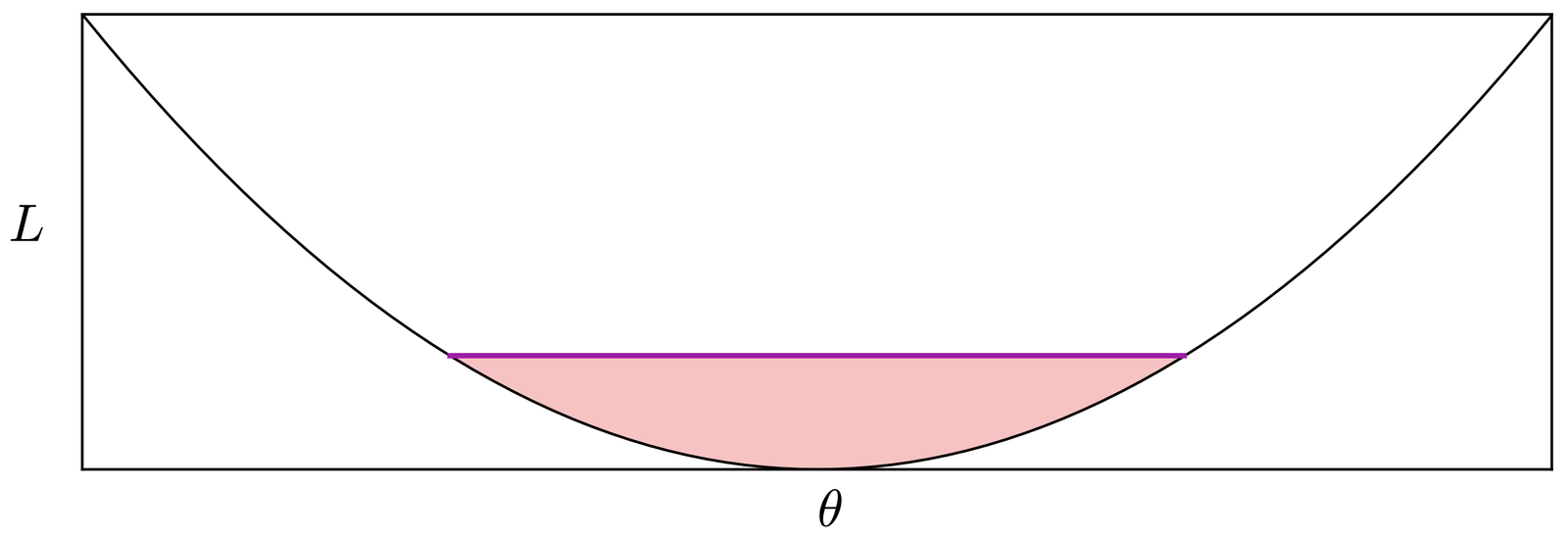

Keskar et al. [1] reignited the topic by reporting that SGD, with update $\theta_{t+1} = \theta_t - \eta g_t$ for minibatch gradient $g_t$ and learning rate $\eta$, converges to sharper minima at large batch sizes than at small ones on a range of standard benchmarks, with a corresponding gap in test accuracy. The 1D schematic that came with that paper has done a lot of work since: it is the picture more or less every later flat-minima discussion is implicitly arguing about.

![Figure 1 of Keskar et al. [1]. A 1D schematic of a wide basin around one minimum and a narrow basin around another.](../img/keskar-fig1.png)

Dinh et al.'s objection, which should have ended the debate

Dinh et al. [2] raised an objection that, to my mind, should have ended the debate. Sharpness, measured as $\lambda_{\max}(H)$ with $H = \nabla^{2} L$, is a property of the parameterization, not of the function the network represents. They show explicitly that for any minimum one can find a reparameterization $\theta \to \psi(\theta)$ that scales the Hessian eigenvalues $\lambda_i(H)$ to arbitrary values without changing the input-output map. The clean response to this would have been to drop the flat-minima paradigm. The community instead salvaged it by looking for sharpness measures that are invariant under reparameterization. The simplest of these comes from Dziugaite and Roy, who use a PAC-Bayes lens: define sharpness through the largest Gaussian weight perturbation $\sigma \xi$, with $\xi \sim \mathcal{N}(0, I)$, that a minimum can absorb while keeping the training loss $L(\theta + \sigma \xi)$ small. That measure is reparameterization-invariant by construction and correlates with generalization in their experiments.
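The reparameterization objection of Dinh et al. can be checked numerically on a toy case. The sketch below is not their construction verbatim. It assumes a hypothetical two-parameter model $f(x) = b \cdot \mathrm{relu}(a x)$, made-up data, and a finite-difference Hessian, and it uses the layer rescaling $(a, b) \to (\alpha a, b / \alpha)$, which leaves the function unchanged for any $\alpha > 0$.

```python
import math

def relu(z):
    return z if z > 0.0 else 0.0

def predict(a, b, x):
    # Hypothetical toy "network": one ReLU hidden unit, no biases.
    return b * relu(a * x)

# Made-up data generated by any parameters with a * b == 1.
XS = [0.5, 1.0, 1.5, 2.0]
YS = list(XS)

def loss(a, b):
    # Mean squared error (with the conventional factor of 1/2).
    return sum(0.5 * (predict(a, b, x) - y) ** 2 for x, y in zip(XS, YS)) / len(XS)

def hessian(a, b, h=1e-4):
    # Central finite differences for the 2x2 Hessian of the loss.
    haa = (loss(a + h, b) - 2.0 * loss(a, b) + loss(a - h, b)) / h**2
    hbb = (loss(a, b + h) - 2.0 * loss(a, b) + loss(a, b - h)) / h**2
    hab = (loss(a + h, b + h) - loss(a + h, b - h)
           - loss(a - h, b + h) + loss(a - h, b - h)) / (4.0 * h**2)
    return haa, hab, hbb

def lambda_max(a, b):
    # Largest eigenvalue of a symmetric 2x2 matrix in closed form.
    haa, hab, hbb = hessian(a, b)
    return 0.5 * (haa + hbb) + math.hypot(0.5 * (haa - hbb), hab)

def rescale(a, b, alpha):
    # Function-preserving reparameterization for alpha > 0.
    return alpha * a, b / alpha

for alpha in (1.0, 10.0, 100.0):
    a, b = rescale(1.0, 1.0, alpha)
    # Loss and prediction stay the same; the sharpness does not.
    print(alpha, loss(a, b), predict(a, b, 1.3), lambda_max(a, b))
```

For this toy loss the largest eigenvalue at the minimum works out to $c(\alpha^2 + \alpha^{-2})$, with $c$ the mean of $x^2$ over the data, so it can be made as large as one likes while the loss and every prediction stay put.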

SAM and where flatness wins

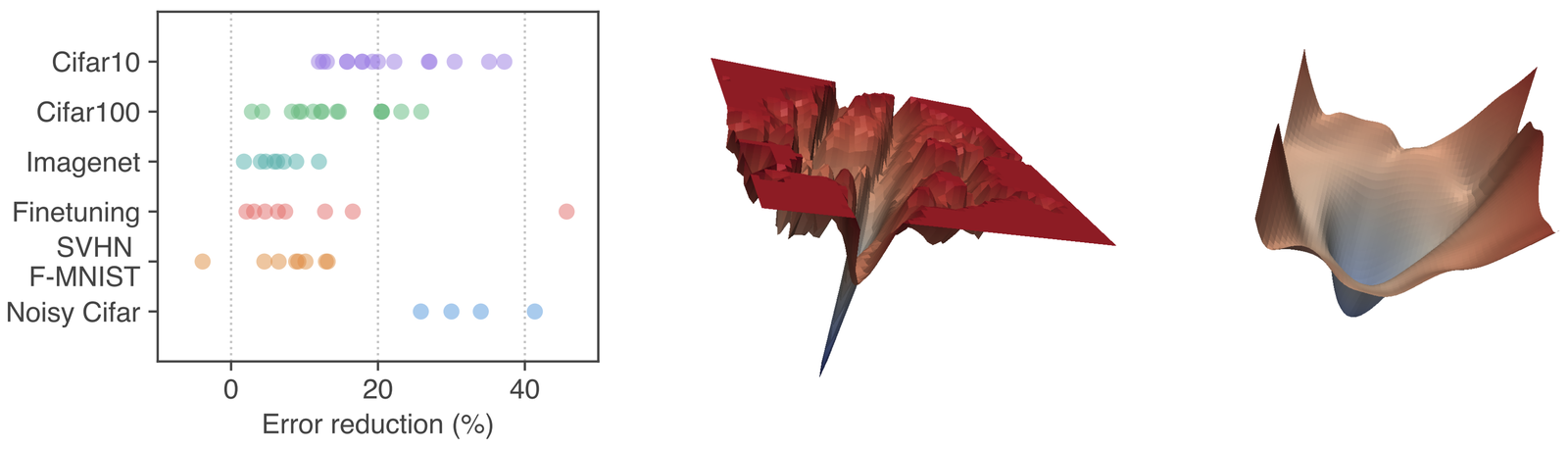

Building on Dziugaite and Roy's framing, Foret et al. [3] turned a reparameterization-aware sharpness measure into a training objective: minimize the worst-case loss in an $\ell_2$ ball around the current weights. They called the procedure SAM, and it does deliver consistent test-accuracy gains, particularly on architectures without strong built-in inductive biases (vanilla MLPs, plain ViTs without strong augmentation). Behnam Neyshabur, a co-author, has remained one of the more consistent public advocates for SAM as a generalization tool.
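To make the objective concrete: SAM targets $\min_\theta \max_{\|\epsilon\|_2 \le \rho} L(\theta + \epsilon)$, and the practical procedure in [3] approximates the inner maximum with a single normalized gradient-ascent step. A minimal sketch of one such update in plain Python follows. The quadratic toy loss, the helper names, and the values of `lr` and `rho` are illustrative assumptions, not the paper's setup.

```python
import math

def sam_step(w, grad_fn, lr=0.05, rho=0.05, eps=1e-12):
    """One SAM-style update on a list of floats (first-order approximation)."""
    g = grad_fn(w)
    norm = math.sqrt(sum(gi * gi for gi in g))
    # Approximate the worst point in the l2 ball of radius rho
    # by stepping a distance rho along the normalized gradient.
    w_adv = [wi + rho * gi / (norm + eps) for wi, gi in zip(w, g)]
    # Descend from the ORIGINAL weights using the gradient at the perturbed point.
    g_adv = grad_fn(w_adv)
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]

# Hypothetical toy problem: an ill-conditioned quadratic bowl.
def toy_loss(w):
    return 0.5 * (w[0] ** 2 + 10.0 * w[1] ** 2)

def toy_grad(w):
    return [w[0], 10.0 * w[1]]

w = [1.0, 1.0]
for _ in range(200):
    w = sam_step(w, toy_grad)
print(toy_loss(w))
```

Each step costs two gradient evaluations, which is the main practical overhead relative to plain SGD. In this toy run with a fixed `rho`, the iterates end up hovering close to the minimum rather than settling on it exactly, because the gradient is always taken at a perturbed point.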

On the side that flatness improves generalization at scale, the main public voices are Behnam Neyshabur and collaborators across several papers and talks, and Boaz Barak's posts at Windows on Theory. Ferenc Huszár's inFERENCe is the other blog I keep coming back to on this; he writes carefully about flatness, generalization, and the Bayesian readings sitting under both. The Off-convex blog has good coverage of mode connectivity that puts flatness inside a larger geometric story, which I find more useful than treating it as an independent explanation. From the continuous-time view, the stochastic-diffusion picture of SGD is still the most direct way to see why noisy iterates concentrate near flatter minima. Huszár's adjacent essay "Everything that Works Works Because It Is Bayesian" is the prior-based reading I find most useful.

Stopping the timeline here, the flat-minima view would look basically validated: the naive Hessian definition was broken, but a reparameterization-invariant version of the phenomenon is real and SAM is a way to act on it. Kaddour et al. [4] complicate that picture. They sweep SAM and SWA against vanilla Adam across architectures and dataset scales, and report that the SAM gain shrinks as either model size or dataset size grows. In the regimes where generalization is most useful to improve, the advantage over a tuned Adam baseline narrows substantially.

I am left with an uneven picture. The naive Hessian definition of sharpness has no causal link to generalization (per Dinh et al. [2]). A reparameterization-invariant version does correlate with generalization at small-to-medium scale, and SAM produces real test-accuracy gains on architectures with weak inductive biases. At very large scale the correlation weakens and the SAM gain fades. I do not have a clean account of why. My guess is that with rich enough data and architectures, the optimizer's trajectory and the data distribution dominate whatever local geometry the final minimum has, and the landscape framing stops being the right description. That is speculation, and I would not put weight on it beyond that.

Further reading

- G. K. Dziugaite and D. M. Roy. Computing nonvacuous generalization bounds for deep (stochastic) neural networks with many more parameters than training data. arXiv 1703.11008, 2017.

- X. Chen, C.-J. Hsieh, and B. Gong. When vision transformers outperform ResNets without pre-training or strong data augmentations. arXiv 2106.01548, 2021.

- P. Izmailov, D. Podoprikhin, T. Garipov, D. Vetrov, and A. G. Wilson. Averaging weights leads to wider optima and better generalization. arXiv 1803.05407, 2018.

- M. Wortsman et al. Model soups: averaging weights of multiple fine-tuned models improves accuracy without increasing inference time. arXiv 2203.05482, 2022.

- S. Hochreiter and J. Schmidhuber. Flat minima. Neural Computation, 9(1):1-42, 1997.

References

- [1] N. S. Keskar, D. Mudigere, J. Nocedal, M. Smelyanskiy, and P. T. P. Tang. On large-batch training for deep learning: Generalization gap and sharp minima. arXiv 1609.04836, 2016.

- [2] L. Dinh, R. Pascanu, S. Bengio, and Y. Bengio. Sharp minima can generalize for deep nets. arXiv 1703.04933, 2017.

- [3] P. Foret, A. Kleiner, H. Mobahi, and B. Neyshabur. Sharpness-aware minimization for efficiently improving generalization. arXiv 2010.01412, 2020.

- [4] J. Kaddour, L. Liu, R. Silva, and M. J. Kusner. When do flat minima optimizers work? arXiv 2202.00661, 2022.