Four explanations for Grokking

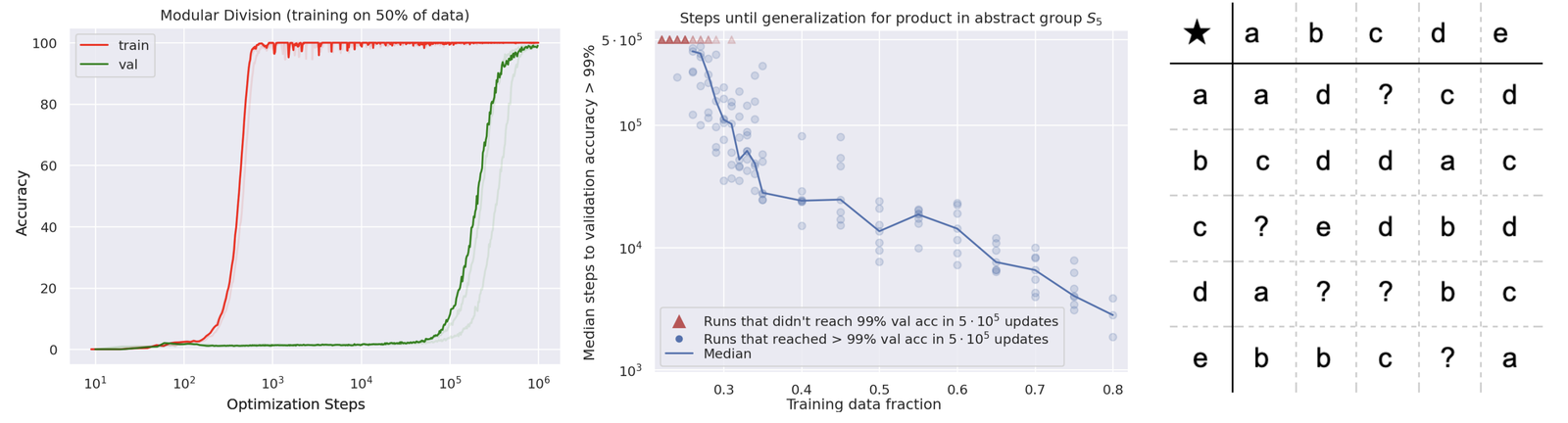

The network does generalize, but only long after it has already fit the training data. The paper is Grokking: Generalization Beyond Overfitting on Small Algorithmic Datasets by Power et al. [1]. I came across it maybe a week after it was posted and didn't really know what to do with it for a while.

Why this is a puzzle

Standard stories about generalization do not predict a long delay. The VC and Rademacher story says generalization either happens or doesn't as a function of how well the hypothesis class matches the data distribution, and it says nothing about when during training it should happen. The implicit-bias stories say SGD finds a minimum that generalizes, but that minimum should be reached at roughly the same time as the training loss converges, not thousands of steps later.

Grokking says training has a second stage that is not driven by the training loss. Some other variable is moving the weights ($\theta$) during the stretch where the training loss is already near zero.

Four explanations

The first explanation - and the one that the original paper kind of points toward - is that weight decay ($\lambda \|\theta\|_2^2$) acts as a slow regularizer. The paper reports that weight decay was the most effective of the interventions it tried for improving generalization. Once the training loss is near zero, the weight-decay term keeps pulling the norm of the weights down even though the loss gradient is tiny. The result is a slow drift toward a lower-norm solution, which may be the one that generalizes.

The second explanation comes via mechanistic interpretability.

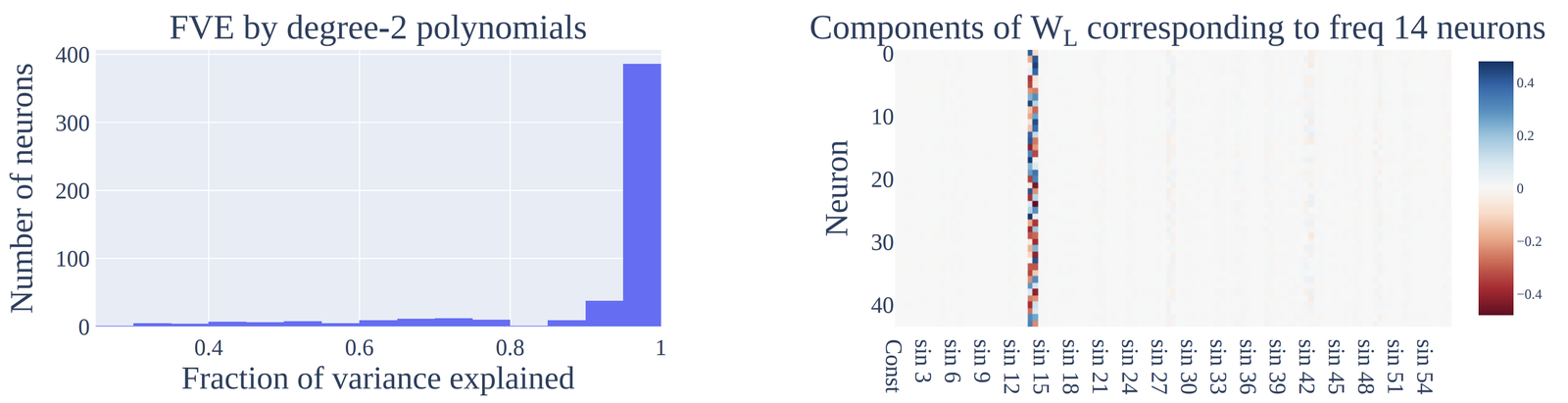

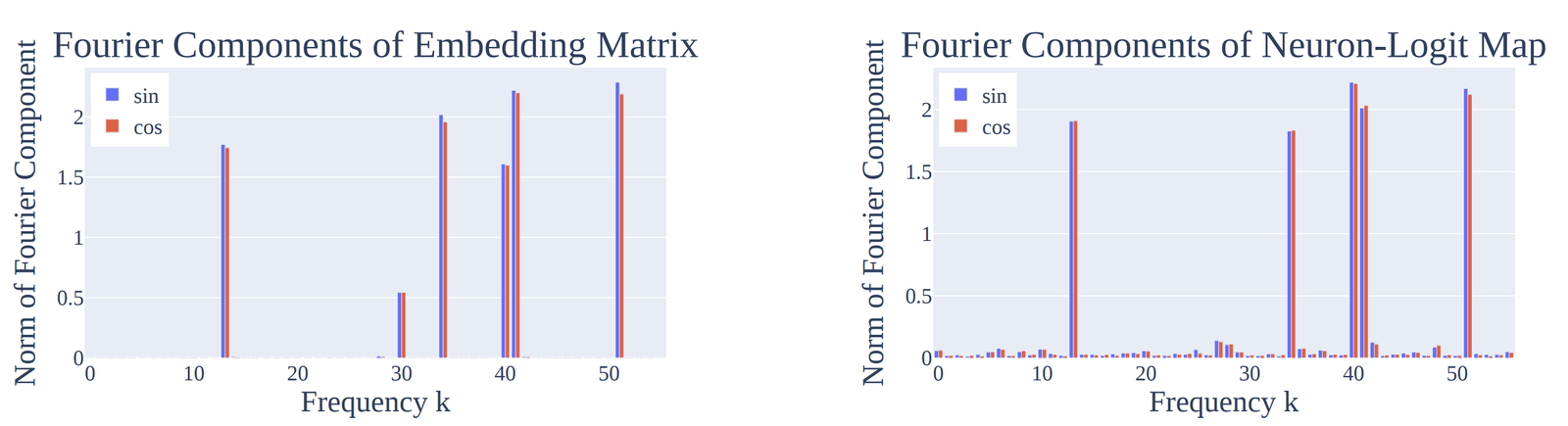

Neel Nanda and collaborators [2] identified specific circuits inside the small grokking networks that implement modular arithmetic via a Fourier ($\hat{f}(\omega)$) decomposition. The grokking transition is when those circuits finish being assembled. Before the transition the network has to memorize by brute force. After the transition it can actually compute. The circuits-level framing this work builds on is laid out in Elhage et al., which is where I'd send anyone who wants to understand what it could mean to talk about a 'circuit' inside a transformer.
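To make "compute via a Fourier decomposition" concrete, here is a minimal NumPy sketch of the trig-identity trick behind that story. It is not the trained network, just the arithmetic the circuit is described as implementing. The function name `fourier_mod_add`, the modulus `p = 113`, and the five frequencies are illustrative choices of mine, and the sketch assumes `p` is prime so that no frequency aliases to zero.

```python
import numpy as np

def fourier_mod_add(a, b, p, freqs):
    """Return (a + b) mod p using only sines and cosines at the given frequencies."""
    c = np.arange(p)          # every candidate answer 0..p-1
    logits = np.zeros(p)
    for k in freqs:
        w = 2.0 * np.pi * k / p
        # Angle-addition identities build cos/sin of w*(a+b) from the
        # separate "embeddings" of a and b.
        cos_ab = np.cos(w * a) * np.cos(w * b) - np.sin(w * a) * np.sin(w * b)
        sin_ab = np.sin(w * a) * np.cos(w * b) + np.cos(w * a) * np.sin(w * b)
        # This sum equals cos(w*(a+b-c)): it reaches 1 only at
        # c == (a+b) mod p (for prime p and k not a multiple of p).
        logits += cos_ab * np.cos(w * c) + sin_ab * np.sin(w * c)
    # Summing over frequencies sharpens the peak; argmax reads off the answer.
    return int(np.argmax(logits))

p = 113                          # illustrative prime modulus
freqs = [14, 35, 41, 42, 52]     # illustrative frequencies
print(fourier_mod_add(100, 50, p, freqs))  # (100 + 50) mod 113 = 37
```

A lookup table for this task needs one entry per (a, b) pair, whereas this needs a handful of frequencies, which is the sense in which the circuit "computes" rather than memorizes.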

The third explanation, due to Liu, Michaud, and Tegmark in Omnigrok, is geometric: the generalizing solution lies in a narrow "Goldilocks zone" of weight norms, and grokking is what you see when the optimizer has been started outside that zone and is slowly being walked into it. Weight decay is what does the walking, which is consistent with explanation one.

The fourth angle is to step back and read all of this as a single process viewed at a different resolution: grokking is double descent, but resolved over training time rather than over model or dataset size.

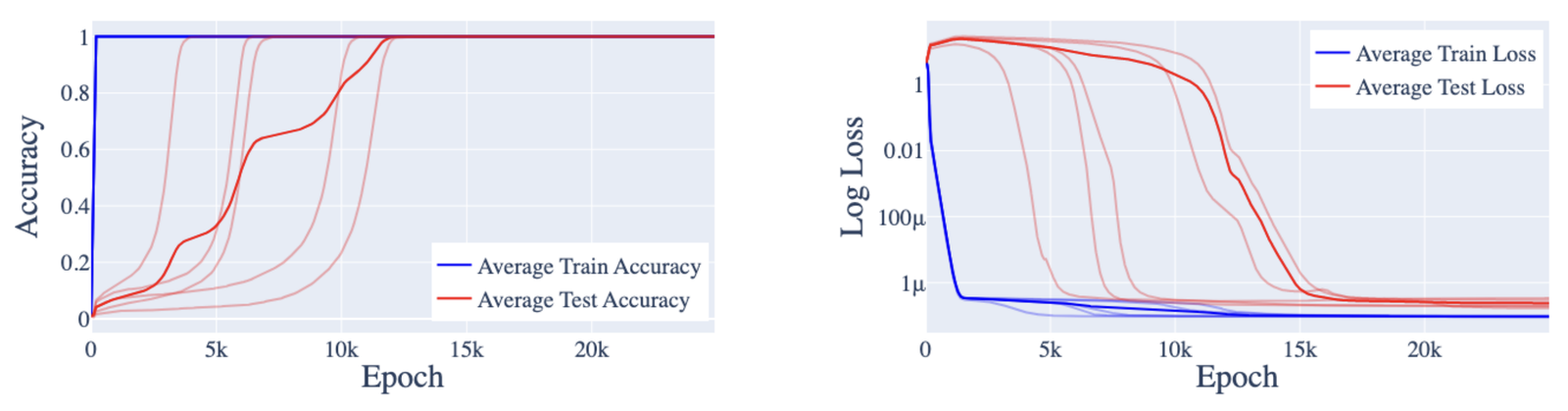

In this reading, grokking is double descent unfolding in time. The most lucid public articulations of this "double descent over time" reading come from Preetum Nakkiran's writing, and OpenAI's Deep Double Descent writeup paints the picture visually. On the mechanistic side, Neel Nanda wrote an intuitive walkthrough of the Fourier circuit story and maintains a corresponding paper page. Google PAIR's Do Machine Learning Models Memorize or Generalize? poses the same question in nearby visual vocabulary.

These four views are looking at the same puzzle from different angles, and together they read as one story at different levels of abstraction. Weight decay is the optimization pressure: it selects a minimum-norm interpolant, and in modular arithmetic that interpolant admits the Fourier circuit because Fourier captures the low-rank ($\text{rank}(W) \ll d$) structure of the task. The broader pattern of fast memorization followed by slow compression is the shape double descent takes when you resolve it over time instead of over model size.
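The "fast fit, slow drift to a minimum-norm interpolant" part of this story can be seen in a toy that has nothing to do with transformers. The sketch below is my own illustration under loud assumptions: an overparameterized linear regression, plain gradient descent, a penalty of the form `(wd / 2) * ||w||^2` (so `wd` multiplies `w` directly in the gradient), and a deliberately large initialization. All names and hyperparameters are hypothetical. It tracks the training error alongside the norm of the part of `w` that the training data cannot see, which is the part weight decay has to remove.

```python
import numpy as np

def weight_decay_drift(n=20, d=100, lr=0.2, wd=1e-3, steps=50_000,
                       init_scale=3.0, checkpoints=(0, 500, 50_000), seed=0):
    """Gradient descent on 0.5*||Xw - y||^2 + 0.5*wd*||w||^2 with n < d.

    Returns {step: (train_mse, null_space_norm)} at the requested checkpoints.
    """
    rng = np.random.default_rng(seed)
    X = rng.normal(size=(n, d)) / np.sqrt(d)   # fewer examples than parameters
    y = X @ rng.normal(size=d)                 # labels from a random linear teacher
    P_row = np.linalg.pinv(X) @ X              # projector onto the row space of X
    w = init_scale * rng.normal(size=d)        # large-norm initialization
    log = {}
    for t in range(steps + 1):
        if t in checkpoints:
            resid = X @ w - y
            # The null-space part of w does not affect the training loss at all.
            null_norm = np.linalg.norm(w - P_row @ w)
            log[t] = (float(np.mean(resid ** 2)), float(null_norm))
        grad = X.T @ (X @ w - y) + wd * w      # data gradient + weight decay
        w = w - lr * grad
    return log

for step, (mse, null_norm) in weight_decay_drift().items():
    print(f"step {step:>6}: train MSE {mse:.2e}, null-space norm {null_norm:.3f}")
```

The data gradient lives entirely in the row space of `X`, so the null-space component shrinks by exactly a factor of `1 - lr * wd` per step, giving it a timescale of about `1 / (lr * wd)` steps. With these settings the training error is near its floor within a few hundred steps while the null-space norm has dropped by only about 10%, and it takes tens of thousands more steps to drain. The minimum-norm interpolant is exactly the interpolant with zero null-space component.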

The one thing none of these explanations cleanly accounts for is the abruptness of the transition. Smoothly shrinking the norm and smoothly assembling circuits should give smoothly rising validation accuracy, not a near-vertical jump. My read is that the sharpness is largely a measurement artifact: accuracy for a softmax classifier, $\sigma(z)_j = e^{z_j}/\sum_k e^{z_k}$, is computed through a top-1 argmax, so logits that are evolving continuously map onto a piecewise-constant accuracy curve that flips once the right logit crosses its competitor.
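A minimal sketch of that argument for a single example with two classes, assuming (purely for illustration) that the margin between the correct logit and its competitor grows linearly over training. The function name `two_class_curves` and the margin schedule are mine, not anything measured from a real run.

```python
import numpy as np

def two_class_curves(margins):
    """Cross-entropy loss and top-1 accuracy as functions of the logit margin.

    margins = (correct logit) - (competitor logit), one value per training step.
    """
    margins = np.asarray(margins, dtype=float)
    loss = np.log1p(np.exp(-margins))     # two-class softmax cross-entropy
    acc = (margins > 0).astype(float)     # argmax picks the larger logit
    return loss, acc

# Hypothetical schedule: the margin moves smoothly from -2 to +2.
margins = np.linspace(-2.0, 2.0, 1001)
loss, acc = two_class_curves(margins)

print("accuracy flips:", int(np.count_nonzero(np.diff(acc))))   # 1
print("largest one-step change in loss:", float(np.max(np.abs(np.diff(loss)))))
```

The loss changes by a tiny amount at every step, while the accuracy is constant except for a single jump from 0 to 1. Averaged over a validation set, the curve is as sharp as the per-example crossings are synchronized, so this only shows where the discontinuity can come from, not that it fully explains the observed jump.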

> So what's behind grokking?
>
> Three phases of training:
>
> 1. Memorization
> 2. Circuit formation: It smoothly TRANSITIONS from memorising to generalising
> 3. Cleanup: Removing the memorised solution
>
> Test performance needs a general circuit AND no memorisation so Grokking occurs at cleanup! pic.twitter.com/zLnP92RXKV
>
> Neel Nanda (@NeelNanda5), January 21, 2023

Further reading

- Neel Nanda's accessible walkthrough of the Fourier circuit story

- Neel Nanda's dedicated paper page for that work

- N. Elhage et al. A mathematical framework for transformer circuits. transformer-circuits.pub/2021/framework, 2021.

- Z. Liu, E. Michaud, and M. Tegmark. Omnigrok: Grokking beyond algorithmic data. arXiv:2210.01117, 2022.

- V. Thilak, E. Littwin, S. Zhai, O. Saremi, R. Paiss, and J. Susskind. The slingshot mechanism: An empirical study of adaptive optimizers and the grokking phenomenon. arXiv:2206.04817, 2022.

- M. Belkin, D. Hsu, S. Ma, and S. Mandal. Reconciling modern machine learning practice and the bias-variance trade-off. arXiv:1812.11118, 2018.

- P. Nakkiran, G. Kaplun, Y. Bansal, T. Yang, B. Barak, and I. Sutskever. Deep double descent: Where bigger models and more data hurt. arXiv:1912.02292, 2019.

- X. Davies, L. Langosco, and D. Krueger. Unifying grokking and double descent. arXiv:2303.06173, 2023.

- L. Prieto, M. Barsbey, P. Mediano, and T. Birdal. Grokking at the edge of numerical stability. arXiv:2501.04697, 2025.

- K. Lyu, J. Jin, Z. Li, S. S. Du, J. D. Lee, and W. Hu. Dichotomy of early and late phase implicit biases can provably induce grokking. arXiv:2311.18817, 2023.

References

- [1] A. Power, Y. Burda, H. Edwards, I. Babuschkin, and V. Misra. Grokking: generalization beyond overfitting on small algorithmic datasets. arXiv:2201.02177, 2022.

- [2] N. Nanda, L. Chan, T. Lieberum, J. Smith, and J. Steinhardt. Progress measures for grokking via mechanistic interpretability. arXiv:2301.05217, 2023.