Reading Tishby's information bottleneck

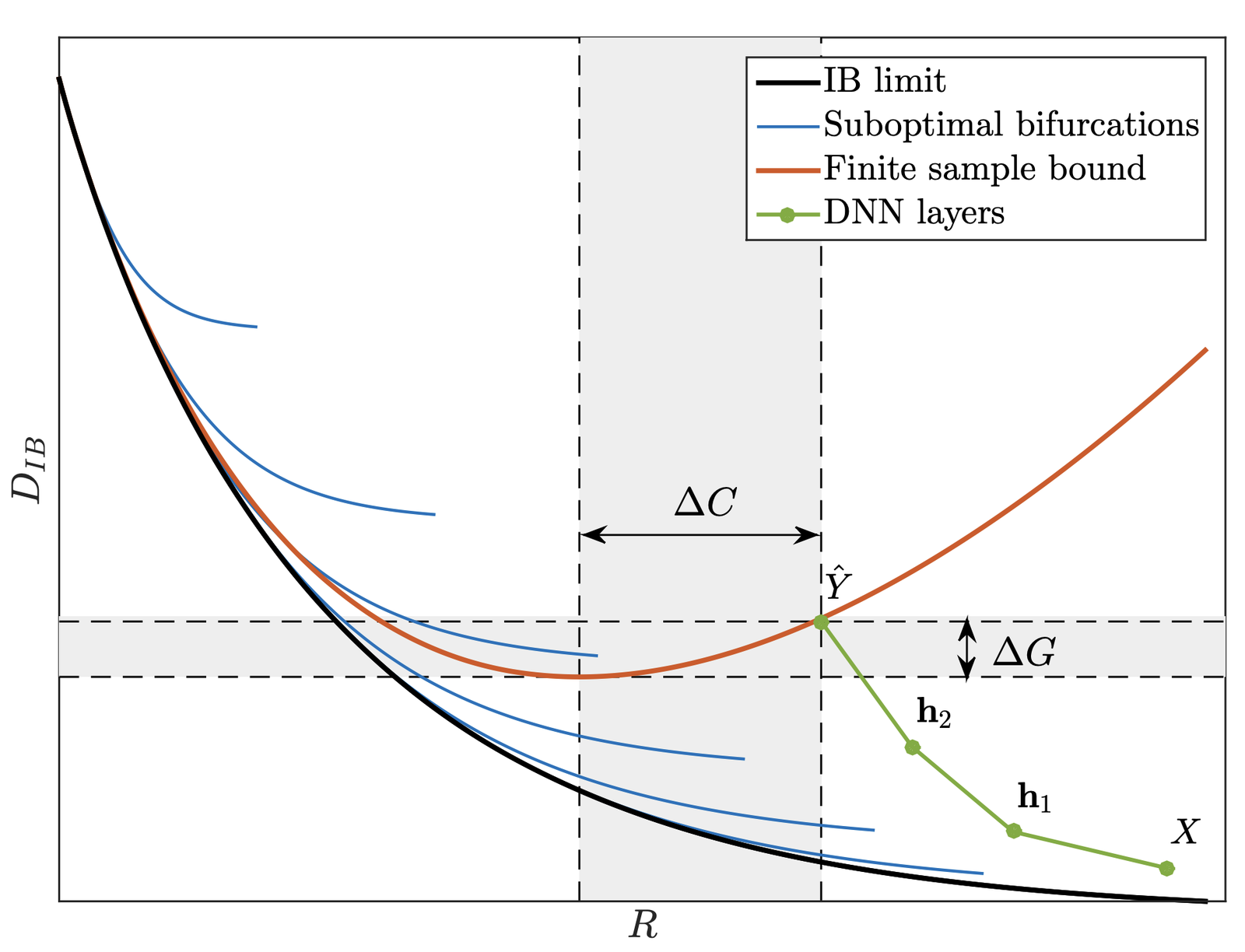

Tishby and Zaslavsky's 2015 paper [1] was, until fairly recently, one of the most-cited papers in deep-learning theory. The picture it launched, spelled out experimentally in Shwartz-Ziv and Tishby's 2017 follow-up (see Further reading), describes training as two distinct phases. In the first, the "fitting" phase, the mutual information between a hidden representation $T = f_\theta(X)$ and the input $X$ rises. In the second, the "compression" phase, the network discards the parts of that input information that are not useful for the prediction.

The two strongest objections to that picture come from Saxe et al. [2], who argue the compression phase is an artifact of the activation function and the mutual-information estimator, and from Goldfeld et al. [3], who formalize the estimator critique and re-run the analysis with noisy networks where mutual information is well-defined. The point of this post is to reread Tishby with both of those objections in hand and ask what remains.

Both objections are worth reading in full; the summaries below are my best attempt to render them faithfully.

The actual claim of the original paper

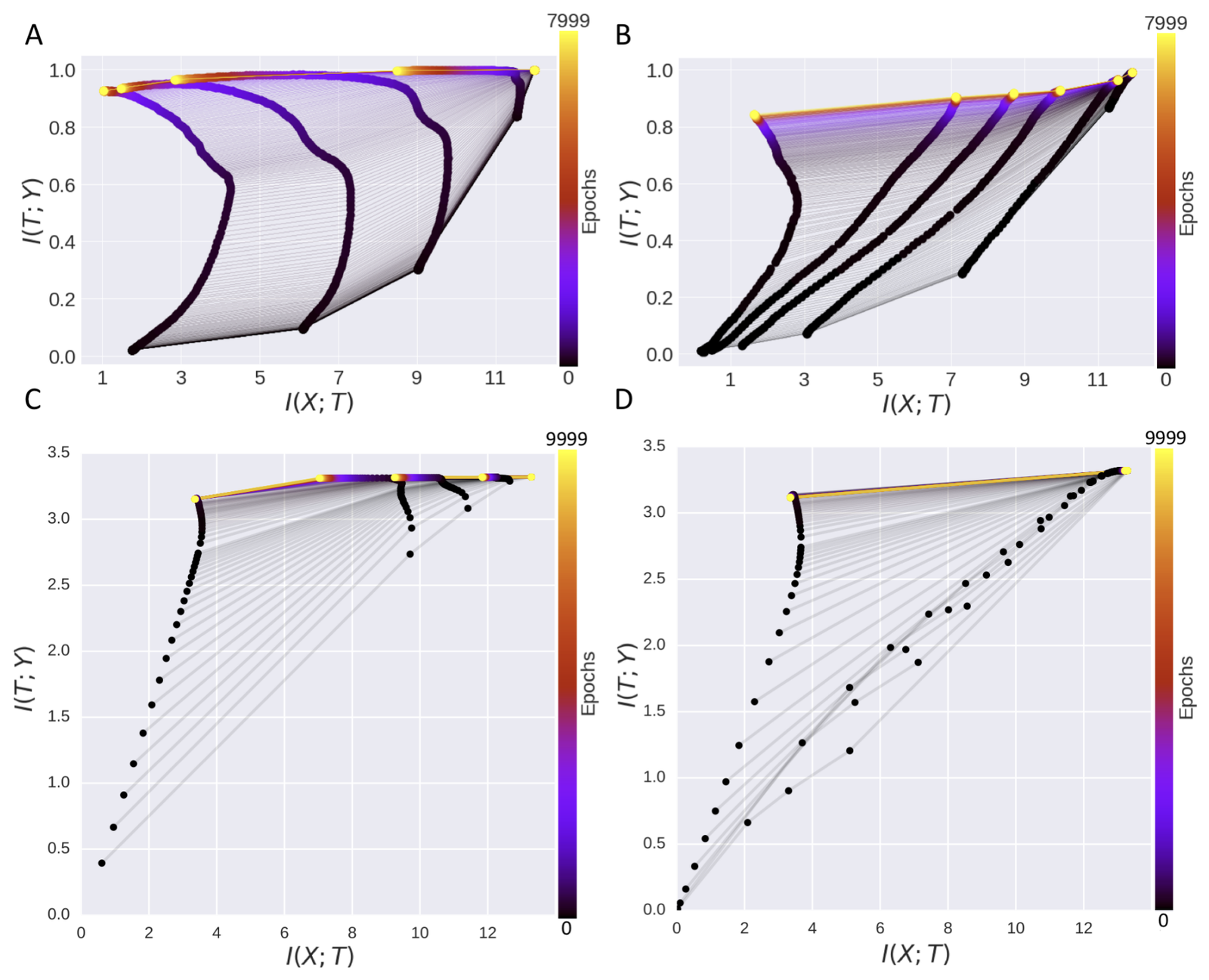

The claim, in plain language: early in training, a deep network builds up features that are useful for predicting the target $Y$, which shows up as $I(T;Y)$ increasing. During the same period $I(T;X)$ also increases, because the hidden layer is simply retaining more about the input. Then a second phase kicks in: the network drops the parts of the input information that do not help with the prediction, so $I(T;X)$ falls while $I(T;Y)$ stays high. That second phase is the "compression phase."

The empirical support was a small tanh network whose information-plane plot (each layer's $I(T;Y)$ against its $I(T;X)$, traced over training) showed a clean fitting-then-compression trajectory.
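For reference, the trade-off behind that picture is usually written as the information bottleneck Lagrangian from the earlier work of Tishby, Pereira, and Bialek. The notation below ($\beta$ for the trade-off weight, $p(t \mid x)$ for a stochastic encoder) is the standard one rather than anything defined in this post:

$$
\min_{p(t \mid x)} \; I(T;X) - \beta\, I(T;Y), \qquad \beta > 0,
$$

subject to the Markov chain $Y \to X \to T$, which by the data-processing inequality gives $I(T;Y) \le I(X;Y)$. In these terms, fitting corresponds to pushing $I(T;Y)$ toward that ceiling, and compression to lowering $I(T;X)$ without giving up $I(T;Y)$.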

Saxe et al. [2] examine the type of activation function used

Saxe and coauthors re-ran the experiments with ReLU instead of tanh. The compression phase did not appear: the estimate $\hat{I}(T;X)$ showed no late-training drop. Their explanation: as weights grow, tanh saturation pushes each unit's activations toward $\pm 1$, so they occupy only a few distinct values. The binning estimator, which discretizes activations into fixed-width bins before computing entropies, is sensitive to that quantization, and the combination produces a curve that looks like compression but is really an estimator artifact. In a network without saturating units (or with a less binning-sensitive estimator), the compression curve is gone.
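A minimal sketch of that mechanism, under toy assumptions that are mine rather than Saxe et al.'s setup: a single unit, a scalar Gaussian input, and a weight scale `w` standing in for weights growing over training. For a deterministic unit evaluated on distinct inputs, the binned estimate of $I(T;X)$ reduces to the entropy of the binned activations, so that is what the hypothetical helper `binned_entropy` computes. The 30-bin count and the rule of spanning the ReLU bins from 0 to the largest activation are also illustrative choices.

```python
import numpy as np


def binned_entropy(t, edges):
    """Entropy in bits of 1-D activations after discretizing into the given bins."""
    counts, _ = np.histogram(t, bins=edges)
    p = counts[counts > 0] / counts.sum()
    return float(-(p * np.log2(p)).sum())


rng = np.random.default_rng(0)
x = rng.standard_normal(10_000)          # toy scalar inputs (assumption)
weight_scales = [0.1, 1.0, 10.0, 100.0]  # proxy for weight growth during training

# tanh: fixed bins on [-1, 1]; large w pushes activations into the two end bins.
tanh_edges = np.linspace(-1.0, 1.0, 31)
tanh_entropy = [binned_entropy(np.tanh(w * x), tanh_edges) for w in weight_scales]

# ReLU: bins span [0, max activation], so rescaling w rescales the bins too.
relu_entropy = []
for w in weight_scales:
    t = np.maximum(w * x, 0.0)
    relu_edges = np.linspace(0.0, t.max(), 31)
    relu_entropy.append(binned_entropy(t, relu_edges))

for w, h_tanh, h_relu in zip(weight_scales, tanh_entropy, relu_entropy):
    print(f"w={w:>6}: tanh {h_tanh:.2f} bits, relu {h_relu:.2f} bits")
```

In this toy setup the tanh entropy first rises and then falls as `w` grows, which mimics a fitting-then-compression curve, while the ReLU entropy does not move because the bins stretch with the activations. Nothing about the input or the function being computed changes between runs; only the interaction between saturation and binning does.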

That is a serious problem for the original story. The theoretical pull of Tishby's framing was that compression looked universal, a property of deep learning itself. If it only shows up for one activation function with one estimator, the universality claim is much weaker.

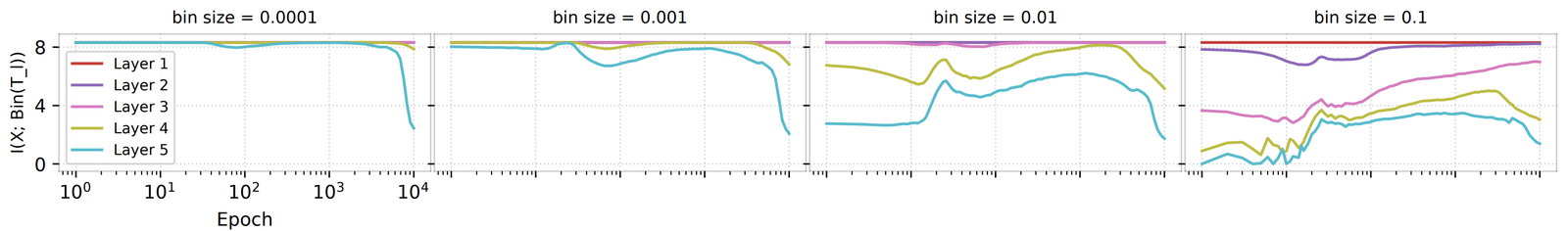

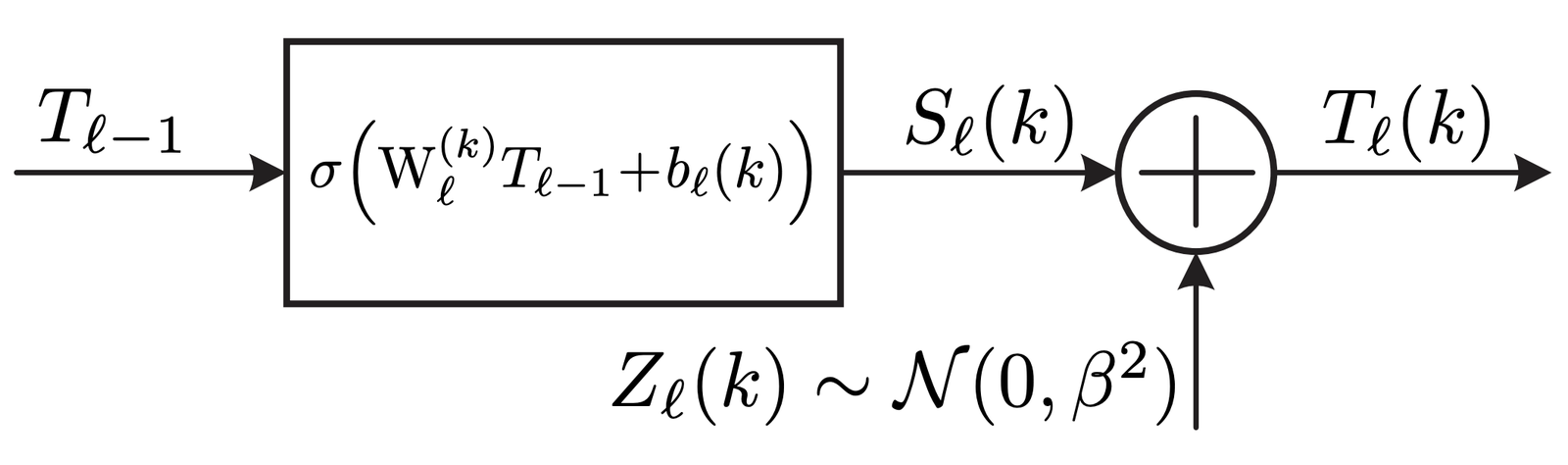

Goldfeld et al. [3] formally quantify issues with estimator selection

Goldfeld and coauthors formalized what Saxe had observed. For a continuous input $X$ and a deterministic map $T = f(X)$ from inputs to representations, the mutual information $I(T;X) = h(T) - h(T|X)$, with $h$ denoting differential entropy, is not a useful finite quantity: $h(T|X)$ diverges to $-\infty$, so $I(T;X)$ is typically infinite, and the finite numbers in published plots come entirely from the smoothing the estimator introduces (binning, added Gaussian noise of variance $\sigma^2$, or KDE). Different choices give different numbers, so the published information-plane trajectories were tracking properties of the estimator at least as much as properties of the network.
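A worked special case makes the dependence on the injected noise concrete. The linear-Gaussian setup here is my own illustrative assumption, not the networks studied in [3]: take $X \sim \mathcal{N}(0, s^2)$, a deterministic map $T = aX$, and a noisy representation $T_\sigma = T + Z$ with $Z \sim \mathcal{N}(0, \sigma^2)$ independent of $X$. Writing $h$ for differential entropy,

$$
I(T_\sigma; X) = h(T_\sigma) - h(T_\sigma \mid X) = \tfrac{1}{2}\log\!\big(2\pi e\,(a^2 s^2 + \sigma^2)\big) - \tfrac{1}{2}\log\!\big(2\pi e\,\sigma^2\big) = \tfrac{1}{2}\log\!\Big(1 + \frac{a^2 s^2}{\sigma^2}\Big).
$$

The value is finite for every $\sigma > 0$, is set by the ratio $a^2 s^2 / \sigma^2$ and therefore by the analyst's choice of $\sigma$, and grows without bound as $\sigma \to 0$, which is the deterministic limit.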

One reason Tishby's paper still has value despite the empirical claims being discredited is that it offered a third lens on generalization at a time when the dominant lenses were capacity-based (how restrictive or broad the hypothesis class is) and geometry-based (how smooth or rough the loss landscape is around a minimum). Tishby's lens was sufficient statistics: representations should retain only the information that matters for the prediction task. The terminology stuck even though the original empirical observation did not, and modern self-supervised objectives like InfoNCE, which optimize a bound on mutual information, are arguably information-bottleneck objectives in everything but name.

The paper got most of its public reach through Natalie Wolchover's 2017 Quanta piece, "New Theory Cracks Open the Black Box of Deep Learning," which presented Tishby's claims in their strongest form. Reading that piece today is mostly useful as a reminder of how far ahead of the evidence the rhetoric got.

For readers who want non-paper summaries of this debate, Adrian Colyer's three-part Morning Paper series on Tishby's IB theory and Saxe's reply is the most accessible walkthrough.

A weaker version of the original claim does survive: networks trained with SGD often end up with representations that are sufficient for the labels and roughly insensitive to label-irrelevant input variation. "Information bottleneck" is a fine descriptive label for that. The strong version, in which training proceeds through two cleanly separated phases divided by a phase transition in $I(T;X)$, has no empirical support, and the further claim that SGD is implicitly minimizing an information-bottleneck objective remains unproven.

Some incorrect papers end up more useful to a field than correct ones, because they hand it vocabulary it did not have.

Saxe's argument alone is not fatal: a noisy version of the network has well-defined mutual information and can be analyzed directly, which gets you out of the estimator trap. Goldfeld et al. did exactly that and found the two-phase trajectory does not hold up across estimator choices once the quantities being plotted are well-defined. After that, the empirical case for Tishby's strong claims is essentially gone.

Further reading

- R. Shwartz-Ziv and N. Tishby. Opening the black box of deep neural networks via information. arXiv 1703.00810, 2017.

- A. A. Alemi, I. Fischer, J. V. Dillon, and K. Murphy. Deep variational information bottleneck. arXiv 1612.00410, 2017.

- A. van den Oord, Y. Li, and O. Vinyals. Representation learning with contrastive predictive coding. arXiv 1807.03748, 2018.

- K. Kawaguchi, Z. Deng, X. Ji, and J. Huang. How does information bottleneck help deep learning? arXiv 2305.18887, 2023.

References

- [1] N. Tishby and N. Zaslavsky. Deep learning and the information bottleneck principle. arXiv 1503.02406, 2015.

- [2] A. M. Saxe, Y. Bansal, J. Dapello, M. Advani, A. Kolchinsky, B. D. Tracey, and D. D. Cox. On the information bottleneck theory of deep learning. ICLR, 2018.

- [3] Z. Goldfeld, E. van den Berg, K. Greenewald, I. Melnyk, N. Nguyen, B. Kingsbury, and Y. Polyanskiy. Estimating information flow in deep neural networks. arXiv 1810.05728, 2019.