Lottery ticket hypothesis

The lottery-ticket hypothesis of Frankle and Carbin [1] proposes that a randomly initialized dense network already contains a much sparser subnetwork (the "winning ticket") which, trained in isolation from its original initialization, matches the dense network's accuracy. If true, this is a strong claim about how deep networks represent functions: optimization would be selecting structure that was already present at initialization, not creating new structure. The pruning literature had circled this idea before; Frankle and Carbin's contribution was an actionable procedure for finding these subnetworks.

What Frankle and Carbin actually did

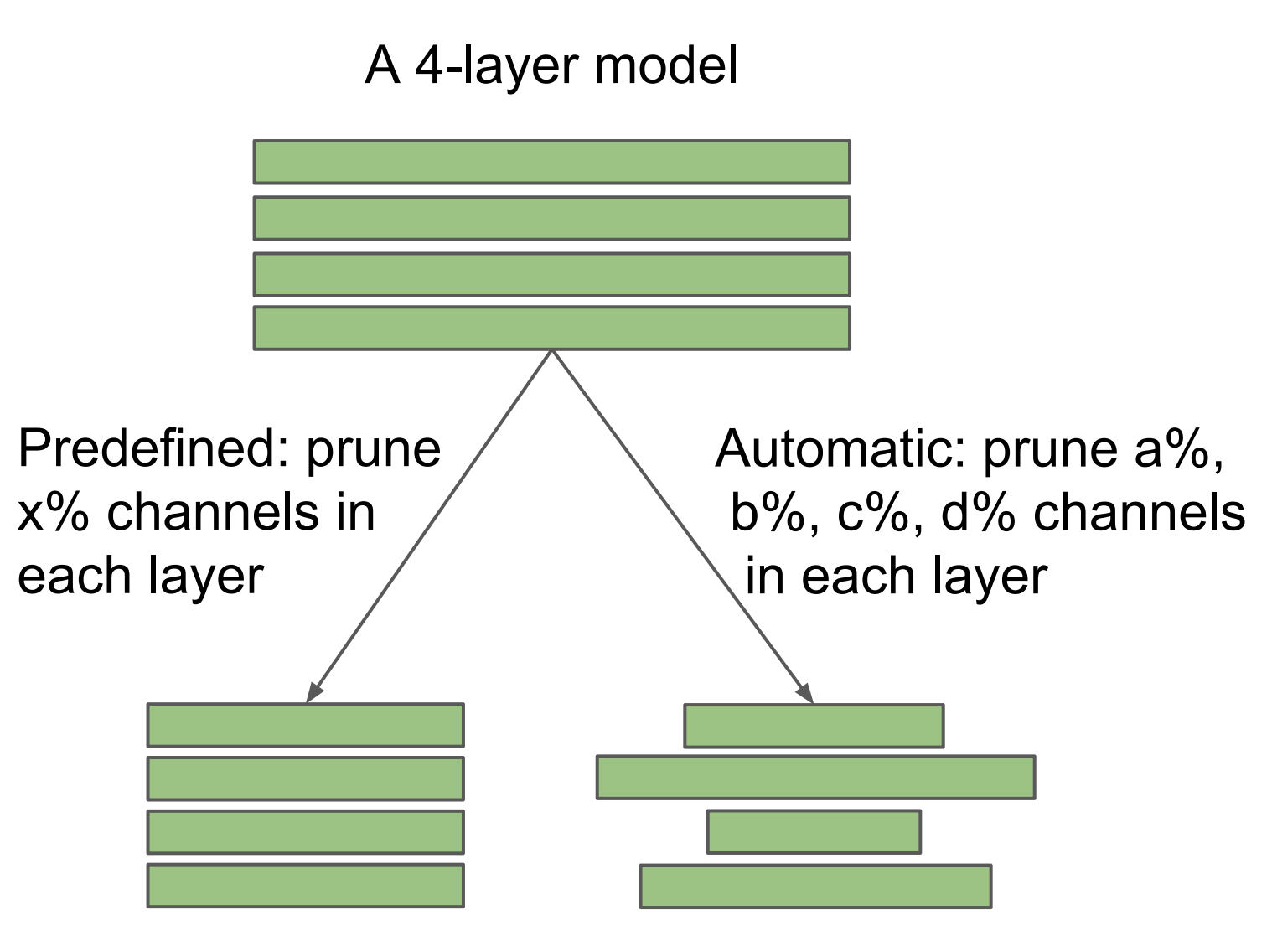

Frankle and Carbin defined a simple procedure for finding these winning tickets, which they called iterative magnitude pruning (IMP). First, train the full model to convergence. Then remove the lowest-magnitude weights (set them to zero and keep them there), reset the remaining weights to their original initialization values, and train again. Repeat this several times, removing a fixed fraction of the surviving weights each round, until no more can be removed without losing performance relative to the full model. The resulting subnetwork is the "winning" ticket for that particular initialization and dataset. The "rewinding" variant, which resets to the weights from a few steps after initialization rather than to initialization itself, came later in Frankle's follow-up [8].
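The procedure is short enough to sketch directly. The Python below is a minimal, hypothetical illustration, not Frankle and Carbin's code: a noise-free sparse linear-regression problem stands in for a real dataset, full-batch gradient descent stands in for SGD, "no loss of performance" is read as training loss within a tolerance `tol` of the dense model's, and the 20% per-round pruning rate is an assumption. Pruned weights are masked to zero and held there, and survivors are reset to `w_init` before every retraining run.

```python
import random

def make_data(n_samples=100, n_features=10, n_informative=3, seed=0):
    """Hypothetical toy task: noise-free linear regression with a sparse target."""
    rng = random.Random(seed)
    w_true = [0.0] * n_features
    for j in rng.sample(range(n_features), n_informative):
        w_true[j] = rng.choice([-1.0, 1.0]) * rng.uniform(1.0, 2.0)
    X = [[rng.gauss(0.0, 1.0) for _ in range(n_features)] for _ in range(n_samples)]
    y = [sum(a * b for a, b in zip(w_true, x)) for x in X]
    return X, y, w_true

def mse(w, X, y):
    """Mean squared error of the linear model w on (X, y)."""
    return sum((sum(a * b for a, b in zip(w, x)) - t) ** 2 for x, t in zip(X, y)) / len(X)

def train(w_start, mask, X, y, steps=200, lr=0.1):
    """Full-batch gradient descent from w_start; pruned weights (mask 0) stay at zero."""
    w = [a * m for a, m in zip(w_start, mask)]
    n = len(X)
    for _ in range(steps):
        grad = [0.0] * len(w)
        for x, t in zip(X, y):
            err = sum(a * b for a, b in zip(w, x)) - t
            for j, xj in enumerate(x):
                grad[j] += 2.0 * err * xj / n
        w = [(a - lr * g) * m for a, g, m in zip(w, grad, mask)]
    return w

def magnitude_prune(w, mask, fraction):
    """Remove the given fraction of the *surviving* weights with the smallest |w|."""
    alive = [j for j, m in enumerate(mask) if m]
    n_prune = round(fraction * len(alive))
    new_mask = list(mask)
    for j in sorted(alive, key=lambda i: abs(w[i]))[:n_prune]:
        new_mask[j] = 0
    return new_mask

def iterative_magnitude_pruning(w_init, X, y, fraction=0.2, tol=1e-3, max_rounds=20):
    mask = [1] * len(w_init)
    w_trained = train(w_init, mask, X, y)              # 1. train the dense model
    dense_loss = mse(w_trained, X, y)
    for _ in range(max_rounds):
        candidate = magnitude_prune(w_trained, mask, fraction)   # 2. prune
        if sum(candidate) == sum(mask):                # nothing left to prune at this rate
            break
        w_retrained = train(w_init, candidate, X, y)   # 3. reset survivors to w_init, retrain
        if mse(w_retrained, X, y) > dense_loss + tol:
            break                                      # 4. stop once pruning costs performance
        mask, w_trained = candidate, w_retrained
    return mask

X, y, w_true = make_data()
init_rng = random.Random(1)
w_init = [init_rng.gauss(0.0, 0.1) for _ in w_true]
ticket = iterative_magnitude_pruning(w_init, X, y)
print("surviving weights:", [j for j, m in enumerate(ticket) if m])
print("nonzero targets:  ", [j for j, a in enumerate(w_true) if a != 0.0])
```

On this toy problem the surviving indices should coincide with the nonzero entries of `w_true`, because gradient descent drives the irrelevant weights toward zero and magnitude pruning removes them first. Real networks give no such clean answer; the sketch only shows the train, prune, reset, retrain loop.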

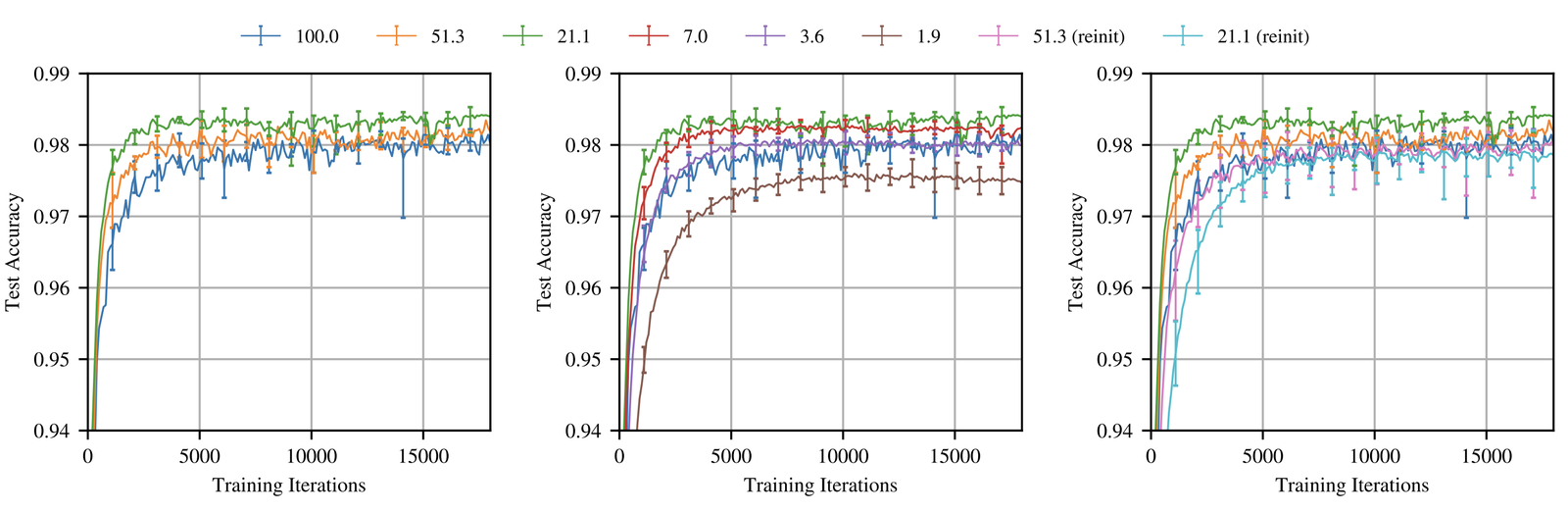

On MNIST and small CIFAR-10 architectures, the results are clean: subnetworks retaining 10-20% of the weights, or fewer, match the dense network's accuracy. The winning tickets are also tied to their specific initialization: if the surviving weights are randomly reinitialized, the same sparse subnetwork learns more slowly and reaches lower accuracy. That is why the procedure is read as discovering structure already present at initialization rather than creating it during training.

Liu et al.'s rebuttal [2]

Liu et al. [2] ran the same procedure at larger scale and found that the gap between a winning ticket and a fresh random initialization of the same sparse architecture closes once the network is big enough. At ImageNet scale, the winning-ticket effect largely disappears. Read narrowly, this refutes Frankle and Carbin's strongest claim; read alongside the original paper, it is more usefully a scaling result: the winning-ticket structure is real on small networks and dissolves as scale grows.
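Liu et al.'s control is easy to state in code: keep the sparse mask, but train it from a fresh random initialization instead of the ticket's original one, and compare the results. The sketch below is hypothetical throughout (toy data, a hand-picked `mask`, the helper names, the init scale). On a convex problem like this one both runs reach the same solution, so it shows only the mechanics of the comparison, not Liu et al.'s finding.

```python
import random

def mse(w, X, y):
    """Mean squared error of the linear model w on (X, y)."""
    return sum((sum(a * b for a, b in zip(w, x)) - t) ** 2 for x, t in zip(X, y)) / len(X)

def train(w_start, mask, X, y, steps=200, lr=0.1):
    """Full-batch gradient descent; masked-out weights are held at zero."""
    w = [a * m for a, m in zip(w_start, mask)]
    n = len(X)
    for _ in range(steps):
        grad = [0.0] * len(w)
        for x, t in zip(X, y):
            err = sum(a * b for a, b in zip(w, x)) - t
            for j, xj in enumerate(x):
                grad[j] += 2.0 * err * xj / n
        w = [(a - lr * g) * m for a, g, m in zip(w, grad, mask)]
    return w

def reinit_control(mask, w_init, X, y, seed):
    """Train the same sparse architecture from its original init and from a fresh random init."""
    rng = random.Random(seed)
    w_fresh = [rng.gauss(0.0, 0.1) for _ in w_init]  # same (hypothetical) init distribution
    loss_ticket = mse(train(w_init, mask, X, y), X, y)
    loss_random = mse(train(w_fresh, mask, X, y), X, y)
    return loss_ticket, loss_random

rng = random.Random(0)
w_true = [1.5, 0.0, -2.0, 0.0, 0.0, 1.0]
X = [[rng.gauss(0.0, 1.0) for _ in w_true] for _ in range(60)]
y = [sum(a * b for a, b in zip(w_true, x)) for x in X]
w_init = [rng.gauss(0.0, 0.1) for _ in w_true]
mask = [1, 0, 1, 0, 1, 1]  # hypothetical mask, e.g. one produced by IMP
loss_ticket, loss_random = reinit_control(mask, w_init, X, y, seed=42)
print(loss_ticket, loss_random)
```

In a real replication, the gap between `loss_random` and `loss_ticket` (or the corresponding accuracy gap), averaged over several seeds, is the quantity that shrinks as networks grow.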

The rewinding fix

Frankle's response to Liu was a follow-up paper [8] introducing a small change that brought the result back at scale: instead of resetting the surviving weights to their original initialization $w_0$, reset them to the values $w_k$ they had a short distance into training, where training runs the update $w_{t+1}=w_t-\eta\nabla L(w_t)$ with step size $\eta$ at iteration $t$. The rewind point $k$ is a few hundred iterations on small models and a few epochs on ImageNet (around epoch 4 of 90 for ResNet-50, about 20k iterations). With rewinding, IMP works at ImageNet scale; rewind any earlier than that point (for example, all the way back to $w_0$) and the matching subnetwork stops appearing. Renda et al. [3] tightened the recipe by comparing rewinding to plain fine-tuning across architectures and showing that rewinding only the learning-rate schedule, while keeping the trained weights, matches or beats fine-tuning at fixed sparsity.
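The three retraining strategies Renda et al. compare differ only in which weights the pruned network restarts from and which part of the learning-rate schedule it replays. The sketch below is a hypothetical toy, not their code: the linear-regression task, the step schedule `lr_schedule`, the budget `TOTAL = 200`, the rewind point `k = 20`, and the one-shot "keep the 3 largest weights" pruning are all assumptions. It runs the update $w_{t+1}=w_t-\eta\nabla L(w_t)$ with an iteration-dependent $\eta$, saves $w_k$ during dense training, and then retrains for the last `TOTAL - k` iterations under each strategy.

```python
import random

TOTAL = 200  # hypothetical full training budget T, in iterations

def lr_schedule(t):
    """Hypothetical step schedule: lr 0.1 for the first 75% of training, then 0.01."""
    return 0.1 if t < 0.75 * TOTAL else 0.01

def mse(w, X, y):
    return sum((sum(a * b for a, b in zip(w, x)) - t) ** 2 for x, t in zip(X, y)) / len(X)

def train(w_start, mask, X, y, t_start, t_end, schedule, checkpoint_at=None):
    """Gradient descent for t in [t_start, t_end) with pruned weights held at zero.
    Also returns a copy of the weights at iteration `checkpoint_at` (None if not reached)."""
    w = [a * m for a, m in zip(w_start, mask)]
    n, saved = len(X), None
    for t in range(t_start, t_end):
        if t == checkpoint_at:
            saved = list(w)
        grad = [0.0] * len(w)
        for x, target in zip(X, y):
            err = sum(a * b for a, b in zip(w, x)) - target
            for j, xj in enumerate(x):
                grad[j] += 2.0 * err * xj / n
        lr = schedule(t)
        w = [(a - lr * g) * m for a, g, m in zip(w, grad, mask)]
    return w, saved

def retrain(strategy, mask, w_final, w_k, k, X, y):
    """Retrain a pruned network for the last TOTAL - k iterations."""
    if strategy == "fine_tune":      # keep trained weights, constant final learning rate
        return train(w_final, mask, X, y, k, TOTAL, lambda t: lr_schedule(TOTAL - 1))[0]
    if strategy == "weight_rewind":  # reset survivors to w_k, replay the schedule from k
        return train(w_k, mask, X, y, k, TOTAL, lr_schedule)[0]
    if strategy == "lr_rewind":      # keep trained weights, replay the schedule from k
        return train(w_final, mask, X, y, k, TOTAL, lr_schedule)[0]
    raise ValueError(strategy)

rng = random.Random(0)
w_true = [0.0, 1.2, 0.0, -1.7, 0.0, 0.0, 2.0, 0.0]
X = [[rng.gauss(0.0, 1.0) for _ in w_true] for _ in range(80)]
y = [sum(a * b for a, b in zip(w_true, x)) for x in X]
w_init = [rng.gauss(0.0, 0.1) for _ in w_true]

k = 20  # hypothetical rewind point (10% of training)
w_final, w_k = train(w_init, [1] * len(w_true), X, y, 0, TOTAL, lr_schedule, checkpoint_at=k)

# One-shot magnitude pruning: keep the 3 largest-magnitude trained weights.
keep = sorted(range(len(w_final)), key=lambda j: abs(w_final[j]))[-3:]
mask = [1 if j in keep else 0 for j in range(len(w_final))]

results = {s: mse(retrain(s, mask, w_final, w_k, k, X, y), X, y)
           for s in ("fine_tune", "weight_rewind", "lr_rewind")}
print(results)
```

On this convex toy all three strategies reach essentially zero loss, so the example shows the bookkeeping (what is saved at $k$, what is reset, which learning rates are replayed) rather than the accuracy differences Renda et al. report on real networks.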

Softening the claims

The revised hypothesis is weaker than the original. The original claim was that the winning ticket exists at random initialization. The follow-up softens this: the ticket is found in the weights after a short window of training (a few hundred iterations on small models, a few epochs on ImageNet). By the end of that window, something about where the run sits in the loss landscape $L(w)$ has been settled that determines the rest of training, and from that point on a sparse subnetwork of the weights at the end of the window, retrained with the pruned weights held at zero, matches the dense network.
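One way to make the difference precise, in notation of my own rather than the papers': let $w_0$ be the initialization and $w_k$ the weights after $k$ training iterations, $m \in \{0,1\}^d$ a pruning mask over the $d$ weights with $\|m\|_0$ surviving entries, $\odot$ elementwise multiplication, $\mathcal{T}_s(\cdot)$ the weights after $s$ further iterations of training with pruned weights held at zero (applied with no mask for the dense baseline), $T$ the full training budget, and $\epsilon$ a small accuracy tolerance.

$$
\begin{aligned}
\text{strong form:}\quad & \exists\, m,\ \|m\|_0 \ll d:\quad \mathrm{acc}\big(\mathcal{T}_{T}(m \odot w_0)\big) \ \ge\ \mathrm{acc}\big(\mathcal{T}_{T}(w_0)\big) - \epsilon \\
\text{weak form:}\quad & \exists\, k \ll T,\ m,\ \|m\|_0 \ll d:\quad \mathrm{acc}\big(\mathcal{T}_{T-k}(m \odot w_k)\big) \ \ge\ \mathrm{acc}\big(\mathcal{T}_{T}(w_0)\big) - \epsilon
\end{aligned}
$$

Setting $k = 0$ in the weak form recovers the strong form, which is the precise sense in which the revised hypothesis is weaker: any ticket satisfying the strong form also satisfies the weak one, but the weak form can hold where the strong form fails.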

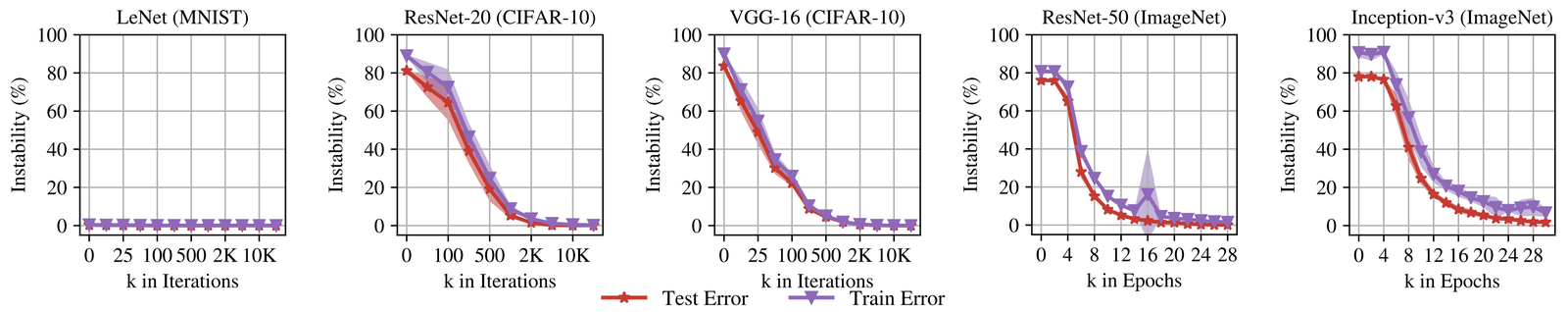

Why rewinding works

Frankle's companion paper [4] offers an explanation. Fork two copies of a network from the same weights very early in training (at initialization, say) and train them with different SGD noise, such as data order and augmentation: they end up in different basins $\mathcal{B}$, so linear interpolation between their final weights crosses a high-loss barrier. Fork them after a small fraction of training instead (about 1000-2000 iterations on CIFAR-scale networks, roughly the first 3% of the schedule) and the two copies end up in the same basin, with linear interpolation between them staying at low loss throughout. The crossover point is where rewinding starts to work.
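Linear mode connectivity is usually quantified as a barrier height along the straight line between two trained endpoints $w_1$ and $w_2$. The sketch below follows the definition as I understand it from [4]: the maximum loss along $\alpha w_1 + (1-\alpha) w_2$ for $\alpha \in [0, 1]$, minus the mean of the two endpoint losses (the paper works with test error; loss is used here for simplicity). The double-well loss, the grid of 21 values of $\alpha$, and the hand-picked endpoints are hypothetical stand-ins for two trained copies of a network.

```python
def interpolate(w1, w2, alpha):
    """Point alpha * w1 + (1 - alpha) * w2 on the segment between two weight vectors."""
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(w1, w2)]

def barrier_height(loss_fn, w1, w2, n_points=21):
    """Max loss on a grid along the segment, minus the mean of the endpoint losses."""
    endpoint_mean = 0.5 * (loss_fn(w1) + loss_fn(w2))
    alphas = [i / (n_points - 1) for i in range(n_points)]
    peak = max(loss_fn(interpolate(w1, w2, a)) for a in alphas)
    return peak - endpoint_mean

def double_well(w):
    """Hypothetical 1-D loss with two basins, at w = -1 and w = +1."""
    return (w[0] ** 2 - 1.0) ** 2

different = barrier_height(double_well, [-1.0], [1.0])  # endpoints in different basins
same = barrier_height(double_well, [0.9], [1.1])        # endpoints in the same basin
print(different, same)
```

Endpoints in different wells give a barrier of 1.0, the height of the hump at $w = 0$; endpoints in the same well give a barrier of about 0.004, because the loss is convex between them. In the experiments described above, $w_1$ and $w_2$ would be the final weights of the two forked copies, and the fork point at which this barrier drops to near zero is the crossover.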

The simplest framing of the lottery-ticket findings, given what is now understood about optimization and loss landscapes, is this: once a training run commits to a specific basin $\mathcal{B}$, there is a sparse subnetwork within that basin that matches the dense network's performance. Frankle and Carbin's strongest claims fail at larger scales, but their weaker claims have so far held up under every replication that has tested them. This places lottery-ticket results in close alignment with the mode-connectivity literature [6], particularly its linear-mode-connectivity refinement [4] and the later permutation-based alignment work [7].

Davis Blalock's 2020 MLSys retrospective on pruning, together with the ShrinkBench benchmark he built, is the survey I find most useful on what holds up after the Frankle-to-Liu exchange. Blalock separates the stronger and weaker forms of the hypothesis and argues that "checkpoint pruning" is a better name than "lottery ticket pruning" for the late-rewinding procedures that actually work at scale. Google's State of Sparsity and Rigging the Lottery are the other two papers I lean on.

What I keep coming back to is that mainstream theory has not taken up the rewinding point as an object of study in its own right. If someone could pin down precisely when basin membership becomes determined, that would identify a structural feature of the loss landscape that current frameworks do not explain. Lewkowycz et al.'s catapult-phase work [5] and the edge-of-stability literature look like they are circling the same phenomenon from different directions, and the lottery-ticket case is the most direct entry point for tying those threads together.

How do the lottery ticket hypothesis and the loss landscape relate? Winning lottery tickets always find the same, linearly-connected optimum. Check out our (@KDziugaite, @roydanroy, @mcarbin) poster at the SEDL workshop (West 121) and our new paper https://t.co/V9yKTSrNnh pic.twitter.com/uPwQKifo1W

— Jonathan Frankle (@jefrankle) December 14, 2019

Further reading

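- T. Gale, E. Elsen, and S. Hooker. The state of sparsity in deep neural networks. arxiv 1902.09574, 2019.

- U. Evci, T. Gale, J. Menick, P. S. Castro, and E. Elsen. Rigging the lottery: Making all tickets winners. arxiv 1911.11134, 2019.

- J. Frankle, G. K. Dziugaite, D. M. Roy, and M. Carbin. Pruning neural networks at initialization: Why are we missing the mark? arxiv 2009.08576, 2020.

- T. Kumar, K. Luo, and M. Sellke. No free prune: Information-theoretic barriers to pruning at initialization. ICML, 2024.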

References

- [1] J. Frankle and M. Carbin. The lottery ticket hypothesis: Finding sparse, trainable neural networks. arxiv 1803.03635, 2018.

- [2] Z. Liu, M. Sun, T. Zhou, G. Huang, and T. Darrell. Rethinking the value of network pruning. arxiv 1810.05270, 2018.

- [3] A. Renda, J. Frankle, and M. Carbin. Comparing rewinding and fine-tuning in neural network pruning. arxiv 2003.02389, 2020.

- [4] J. Frankle, G. K. Dziugaite, D. M. Roy, and M. Carbin. Linear mode connectivity and the lottery ticket hypothesis. arxiv 1912.05671, 2019.

- [5] A. Lewkowycz, Y. Bahri, E. Dyer, J. Sohl-Dickstein, and G. Gur-Ari. The large learning rate phase of deep learning: The catapult mechanism. arxiv 2003.02218, 2020.

- [6] T. Garipov, P. Izmailov, D. Podoprikhin, D. Vetrov, and A. G. Wilson. Loss surfaces, mode connectivity, and fast ensembling of DNNs. arxiv 1802.10026, 2018.

- [7] S. K. Ainsworth, J. Hayase, and S. Srinivasa. Git re-basin: Merging models modulo permutation symmetries. arxiv 2209.04836, 2022.

- [8] J. Frankle, G. K. Dziugaite, D. M. Roy, and M. Carbin. Stabilizing the lottery ticket hypothesis. arxiv 1903.01611, 2019.