Kaplan, Chinchilla, and broken laws

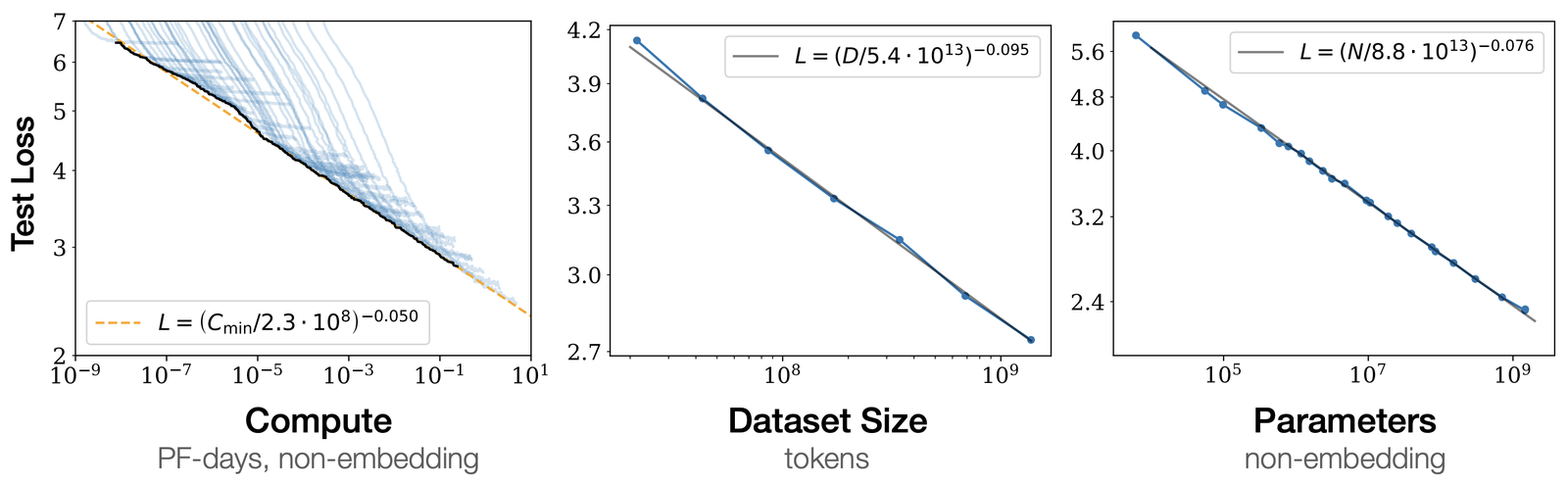

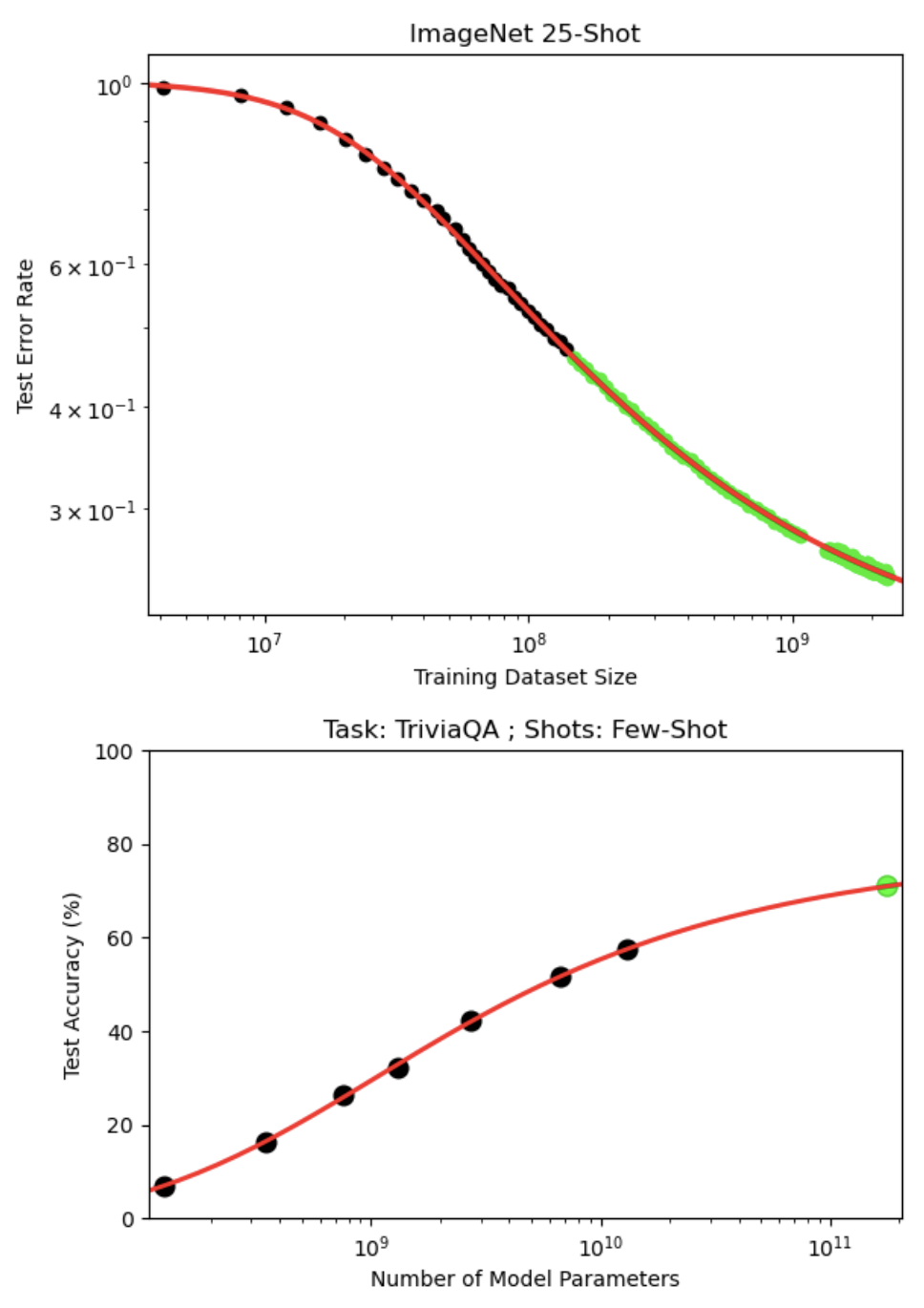

Kaplan et al. [1] set the baseline picture: test loss falls as a power law in each of model size $N$, dataset size $D$, and compute $C$, for example $L(C) = (C/C_0)^{-\alpha_C}$ for compute, with clean exponents $\alpha$ that hold over many orders of magnitude. They also claimed that for a given compute budget there is an optimal allocation between $N$ and $D$, and specifically that $N$ should grow faster than $D$ as $C$ grows.
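To make the power-law form concrete, the sketch below evaluates $L(C) = (C/C_0)^{-\alpha_C}$ and computes how much extra compute a given fractional loss reduction would cost under that form. The values of `ALPHA_C` and `C0` are illustrative placeholders chosen for this example, not fitted constants from [1], and both function names are hypothetical.

```python
def power_law_loss(c: float, c0: float, alpha_c: float) -> float:
    """Loss predicted by L(C) = (C / C0) ** (-alpha_C)."""
    return (c / c0) ** (-alpha_c)


def compute_multiplier(loss_ratio: float, alpha_c: float) -> float:
    """Factor by which C must grow to multiply the loss by `loss_ratio` (< 1)."""
    # L2 / L1 = (C2 / C1) ** (-alpha)  =>  C2 / C1 = (L2 / L1) ** (-1 / alpha)
    return loss_ratio ** (-1.0 / alpha_c)


# Illustrative values only (assumptions, not taken from the paper).
ALPHA_C = 0.05
C0 = 1.0

if __name__ == "__main__":
    for c in (1e3, 1e6, 1e9):
        print(f"C = {c:.0e}  ->  L = {power_law_loss(c, C0, ALPHA_C):.3f}")
    mult = compute_multiplier(0.9, ALPHA_C)
    print(f"compute multiplier for a 10% lower loss: {mult:.1f}x")
```

With a small exponent, each further fractional improvement in loss costs a large multiplicative increase in compute, which is why the question of how to allocate that compute between $N$ and $D$ matters so much.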

That paper shaped how labs designed pre-training experiments for the next two years, and the eventual "Chinchilla" effort grew out of trying to reproduce and extend its recommendations. It also turned out to be wrong about the optimal $D/N$ ratio, which is the part the field then had to revise.

Chinchilla

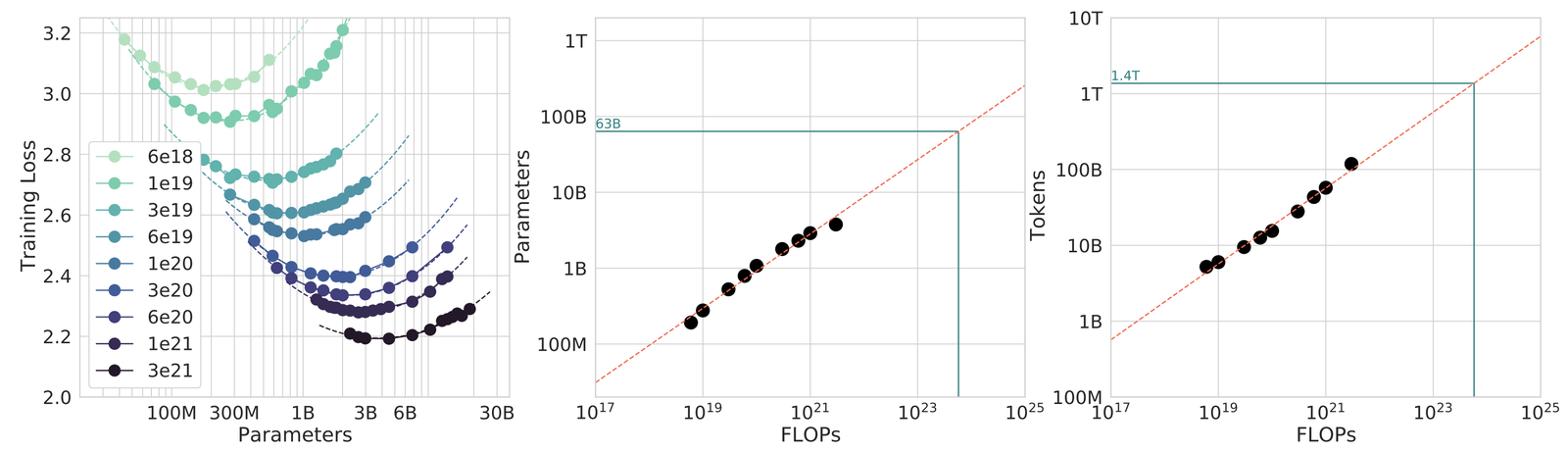

Hoffmann et al. [2] re-asked Kaplan's question with a larger, better-controlled experiment: over 400 language models from 70 million to over 16 billion parameters, trained on 5 to 500 billion tokens, across a sweep of compute budgets. Fitting a joint regression over model size and tokens gave them per-budget optima $N^{\star}(C), D^{\star}(C)$ that were not where Kaplan put them. Their headline rule is that for each doubling of model size, dataset size should also double, which lands at roughly 20 tokens per parameter at the compute-optimal point.
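The 20-tokens-per-parameter rule can be turned into a back-of-the-envelope allocation. The sketch below assumes the common approximation $C \approx 6ND$ for training FLOPs, which is not stated above, and treats the tokens-per-parameter ratio as a tunable input. `chinchilla_allocation` is a hypothetical helper name.

```python
import math

# Assumption: training compute is approximated as C ~= 6 * N * D FLOPs.
FLOPS_PER_PARAM_TOKEN = 6.0


def chinchilla_allocation(compute_flops: float, tokens_per_param: float = 20.0):
    """Split a compute budget into (params, tokens) at a fixed D/N ratio."""
    # C = 6 * N * D and D = r * N  =>  N = sqrt(C / (6 * r))
    n_params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    for budget in (1e21, 1e22, 1e23, 1e24):
        n, d = chinchilla_allocation(budget)
        print(f"C = {budget:.0e} FLOPs -> N = {n:.2e} params, D = {d:.2e} tokens")
```

Under these assumptions, quadrupling the budget doubles both $N$ and $D$, which is the equal-scaling rule in code form. A different ratio can be passed in to see how far a given configuration sits from the 20:1 point.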

The shift in conclusions came from a methodological gap. Hoffmann et al. argue that Kaplan's runs were too short: they ended well before each model had seen enough tokens to bottom out its loss. Kaplan's reported optima were therefore extrapolations from incomplete training curves, while Hoffmann's were drawn from runs that continued long enough to actually locate the per-size minimum. The two sets of optima ended up substantially different.

The effects of correcting Kaplan

GPT-3 and other models sized according to Kaplan's recommendations were trained on substantially too little data for their size. Hoffmann et al. show that for a fixed compute budget, a smaller model trained on more tokens reaches a lower loss than a bigger model trained on fewer. Concretely, Chinchilla-70B is roughly 2.5x smaller than GPT-3 (175 billion parameters) and outperforms it on nearly every benchmark in the original paper. Many factors contribute to that gap, but the dominant one is that Chinchilla-70B is configured at the Chinchilla-optimal point for its compute budget while GPT-3 sits near the Kaplan-optimal point. Subsequent scaling-law work that calibrates against the Chinchilla target accordingly emphasizes data scaling, longer training, and less aggressive model-size growth.

Llama 2 was trained on 2 trillion tokens, well past the Chinchilla-optimal token count and into a regime where the relevant trade-off is no longer training compute but inference cost. Once it became clear that smaller models are much cheaper to run at inference, scaling-law work shifted its target from training-compute optimality to deployment optimality.
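A quick tokens-per-parameter calculation shows how far these models sit from the roughly 20:1 ratio. The GPT-3 and Chinchilla token counts (300 billion and 1.4 trillion) and the Llama 2 model sizes come from the respective papers rather than from the text above, so treat them as assumptions of this sketch.

```python
# (parameters, training tokens); token counts and Llama 2 sizes are assumptions
# taken from the respective papers, not from the surrounding text.
MODELS = {
    "GPT-3 175B": (175e9, 300e9),
    "Chinchilla 70B": (70e9, 1.4e12),
    "Llama 2 7B": (7e9, 2e12),
    "Llama 2 70B": (70e9, 2e12),
}


def tokens_per_param(params: float, tokens: float) -> float:
    """Training tokens seen per model parameter."""
    return tokens / params


if __name__ == "__main__":
    for name, (params, tokens) in MODELS.items():
        print(f"{name:>15}: {tokens_per_param(params, tokens):7.1f} tokens/param")
```

On these numbers GPT-3 sits far below the 20:1 ratio and the smallest Llama 2 model far above it, which is the over-training-for-inference regime described above.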

Broken laws

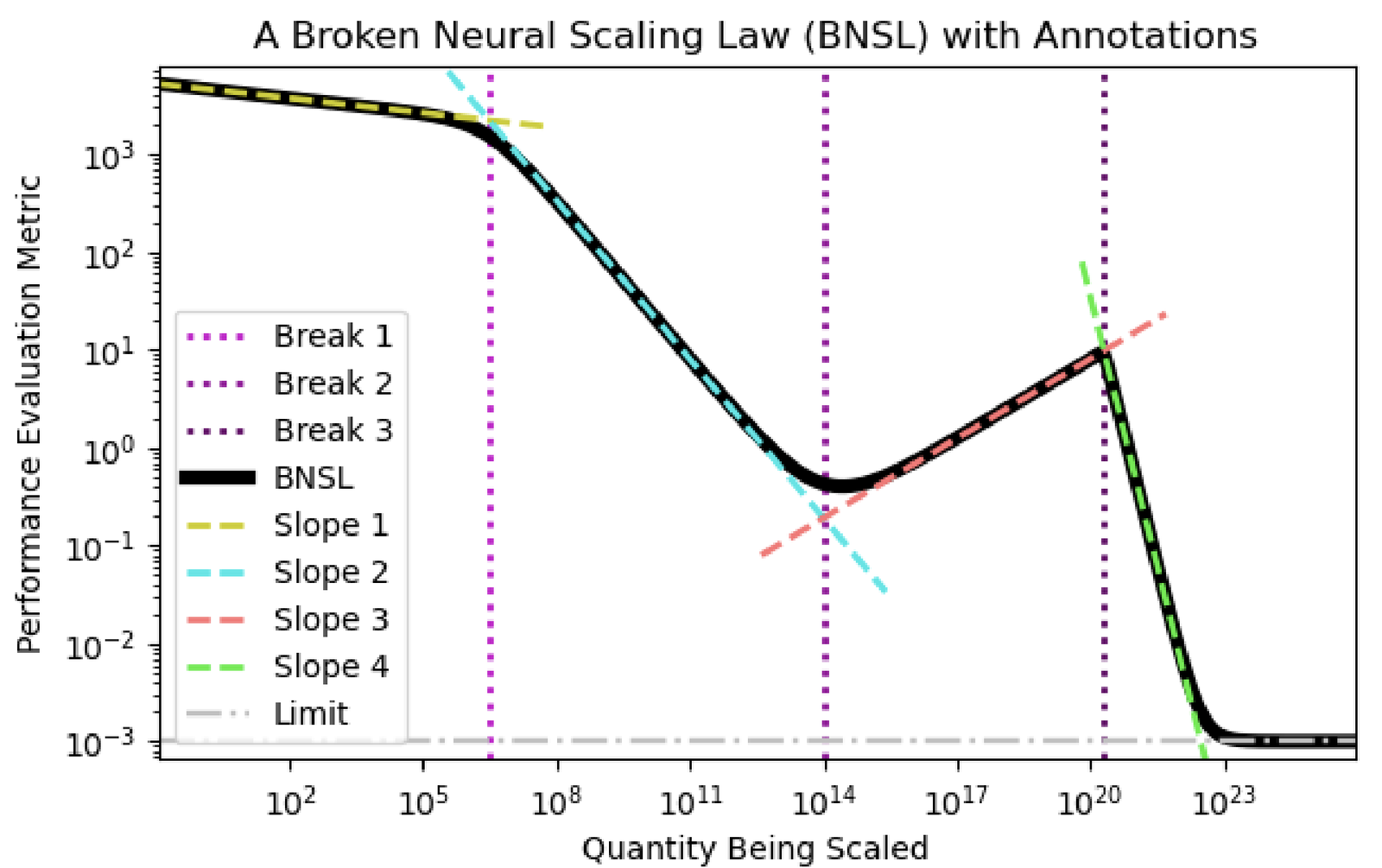

Caballero et al. [3] argue that the single-power-law narrative is wrong. Loss versus compute, in their fit, is a smoothly broken power law: continuous everywhere, with several breaks where the slope changes. On this view, the single-exponent fits of Kaplan and Chinchilla average across regimes with different exponents and produce a number that matches none of them.
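A minimal sketch of a smoothly broken power law with one break follows. It uses the functional form from [3] as I understand it, $y = a + b\,x^{-c_0} \prod_i \left(1 + (x/d_i)^{1/f_i}\right)^{-c_i f_i}$, so check the paper before relying on it. All parameter values are made up for illustration, and `local_slope` is a hypothetical helper that estimates the log-log slope numerically.

```python
import math


def bnsl(x, a, b, c0, breaks):
    """Smoothly broken power law; `breaks` is a list of (c_i, d_i, f_i)."""
    y = b * x ** (-c0)
    for c_i, d_i, f_i in breaks:
        # Each factor adds c_i to the slope magnitude once x passes d_i;
        # f_i controls how sharp the transition is.
        y *= (1.0 + (x / d_i) ** (1.0 / f_i)) ** (-c_i * f_i)
    return a + y


def local_slope(fn, x, eps=1e-3):
    """Central-difference estimate of d(log y) / d(log x) at x."""
    lo, hi = x * math.exp(-eps), x * math.exp(eps)
    return (math.log(fn(hi)) - math.log(fn(lo))) / (2.0 * eps)


# Illustrative parameters only: slope -0.05 before the break at x = 1e6,
# slope -0.15 well after it.
PARAMS = dict(a=0.0, b=10.0, c0=0.05, breaks=[(0.10, 1e6, 0.5)])


def curve(x):
    return bnsl(x, **PARAMS)


if __name__ == "__main__":
    for x in (1e2, 1e6, 1e10):
        print(f"x = {x:.0e}: y = {curve(x):.4f}, local slope = {local_slope(curve, x):.3f}")
```

A single straight-line fit in log-log space across both regimes would land on a slope somewhere between the two local values, which is the sense in which one averaged exponent describes neither regime.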

Whether the broken-laws view is useful depends on what you want the fit for. For coarse extrapolation across orders of magnitude in compute, a single power law still works fine. For predicting the scale at which a particular capability shows up, it does not. The Caballero breakpoints line up with the "emergent" capabilities that Wei et al. [4] documented, which behave like a threshold indicator $\mathbb{1}[L < L_{\text{threshold}}]$ on the loss, and which Schaeffer et al. [5] then argued are largely an artifact of how the underlying metric is discretized.
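The discretization argument can be illustrated with a toy model. Suppose per-token accuracy improves smoothly with scale, and the benchmark counts an answer as correct only when all `k` tokens match. If token errors are independent, which is an assumption of this sketch, the exact-match score is `p ** k`. The accuracy values below are synthetic.

```python
def exact_match(p_token: float, k: int) -> float:
    """Probability that all k tokens are right, assuming independent errors."""
    return p_token ** k


# Synthetic, smoothly improving per-token accuracies (one per model scale).
PER_TOKEN_ACC = [0.50, 0.60, 0.70, 0.80, 0.90, 0.95]
ANSWER_LEN = 10

if __name__ == "__main__":
    print("per-token acc   exact-match score")
    for p in PER_TOKEN_ACC:
        print(f"    {p:.2f}            {exact_match(p, ANSWER_LEN):.4f}")
```

The per-token column rises steadily while the exact-match column stays near zero and then climbs quickly, so the same underlying progress looks smooth or sudden depending on which metric is plotted.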

Gwern's Scaling Hypotheses essay and the Revisited follow-up are the strongest non-specialist treatments of the underlying premise that capabilities come out of scale. Jacob Steinhardt's Bounded Regret is the blog I send people to when they want a careful read of what scaling laws actually let you predict. Beyond Chinchilla-Optimal is the most direct argument that the 20-tokens-per-parameter ratio is not a universal constant.

Kaplan, Chinchilla, and Caballero all show that with a reasonably behaved architecture, a reasonable data mixture, and enough compute to get past the early warmup phase of pre-training, you can extrapolate loss from small runs to larger ones with usable accuracy. Error bars widen as the extrapolation gets more aggressive but not catastrophically. That predictive ability is what labs use to decide whether an expensive training run is worth doing.
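The extrapolation workflow can be sketched as a least-squares fit in log-log space on small runs, followed by a prediction at a larger budget. The data here is synthetic, generated from a known power law with a small hand-picked multiplicative wiggle, and `fit_power_law` and `predict` are hypothetical helpers. Real fits usually also include an irreducible-loss term, which this sketch omits.

```python
import math


def fit_power_law(cs, losses):
    """Least-squares fit of log L = log k - alpha * log C; returns (k, alpha)."""
    xs = [math.log(c) for c in cs]
    ys = [math.log(loss) for loss in losses]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def predict(c, k, alpha):
    """Loss predicted by the fitted law L = k * C ** (-alpha)."""
    return k * c ** (-alpha)


# Synthetic "small runs": a known law plus a fixed wiggle standing in for noise.
TRUE_K, TRUE_ALPHA = 5.0, 0.08
small_cs = [1e15, 1e16, 1e17, 1e18]
wiggle = [1.01, 0.99, 1.005, 0.995]
small_losses = [TRUE_K * c ** (-TRUE_ALPHA) * w for c, w in zip(small_cs, wiggle)]

k_hat, alpha_hat = fit_power_law(small_cs, small_losses)

if __name__ == "__main__":
    print(f"fitted alpha = {alpha_hat:.4f} (true {TRUE_ALPHA})")
    for target in (1e19, 1e21, 1e23):
        true_loss = TRUE_K * target ** (-TRUE_ALPHA)
        pred_loss = predict(target, k_hat, alpha_hat)
        rel_err = abs(pred_loss - true_loss) / true_loss
        print(f"C = {target:.0e}: predicted {pred_loss:.4f}, relative error {rel_err:.2%}")
```

In this toy setup the relative error grows with the distance between the fitted range and the target budget, which mirrors the widening error bars described above.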

None of the papers here support any particular exponent or ratio as universal. Exponents depend on architecture, data mixture, and optimizer. The 20-tokens-per-parameter Chinchilla figure is one point estimate for one such combination, not a law of physics.

Almost the entire scaling-law literature addresses test loss on the training distribution, and nothing else. It provides zero guidance on what data mixture to choose, what architecture will surpass dense transformers $f_\theta$, what capabilities will appear at what scale, whether the resulting model will be safe, or how optimization will interact with the learning-rate schedule $\eta(t)$. All of those are properties of individual training runs and live outside the fitted relationship. Treating scaling laws as though they answered them is a common failure mode, and much of the broken-laws literature is about that failure. Scaling laws tell you how much loss you will incur. Almost everything interesting about a model is left undetermined by that single number.

Further reading

- Scaling Data-Constrained Language Models

- When is Scale Enough?

- Simons talk on the theoretical side of scaling

- T. Henighan et al. Scaling laws for autoregressive generative modeling. arxiv 2010.14701, 2020

- T. Pearce and J. Song. Reconciling Kaplan and Chinchilla scaling laws. arxiv 2406.12907, 2024

- N. Sardana, J. Portes, S. Doubov, and J. Frankle. Beyond Chinchilla-Optimal: Accounting for inference in language model scaling laws. ICML, 2024

- Deriving neural scaling laws from the statistics of natural language. arxiv 2602.07488, 2026

References

- [1] J. Kaplan, S. McCandlish, T. Henighan, T. B. Brown, B. Chess, R. Child, S. Gray, A. Radford, J. Wu, and D. Amodei. Scaling laws for neural language models. arxiv 2001.08361, 2020.

- [2] J. Hoffmann et al. Training compute-optimal large language models. arxiv 2203.15556, 2022.

- [3] E. Caballero, K. Gupta, I. Rish, and D. Krueger. Broken neural scaling laws. arxiv 2210.14891, 2022.

- [4] J. Wei et al. Emergent abilities of large language models. arxiv 2206.07682, 2022.

- [5] R. Schaeffer, B. Miranda, and S. Koyejo. Are emergent abilities of large language models a mirage? arxiv 2304.15004, 2023.