Adversarial examples and the robustness story that survived

Key terms used in this post

- adversarial example: an input changed by a small worst-case perturbation that causes a model to make an incorrect prediction.

- robust optimization: training against the worst perturbation inside an allowed set, usually written as an inner maximization problem.

- non-robust feature: a predictive feature that is useful for standard accuracy but brittle under small human-imperceptible perturbations.

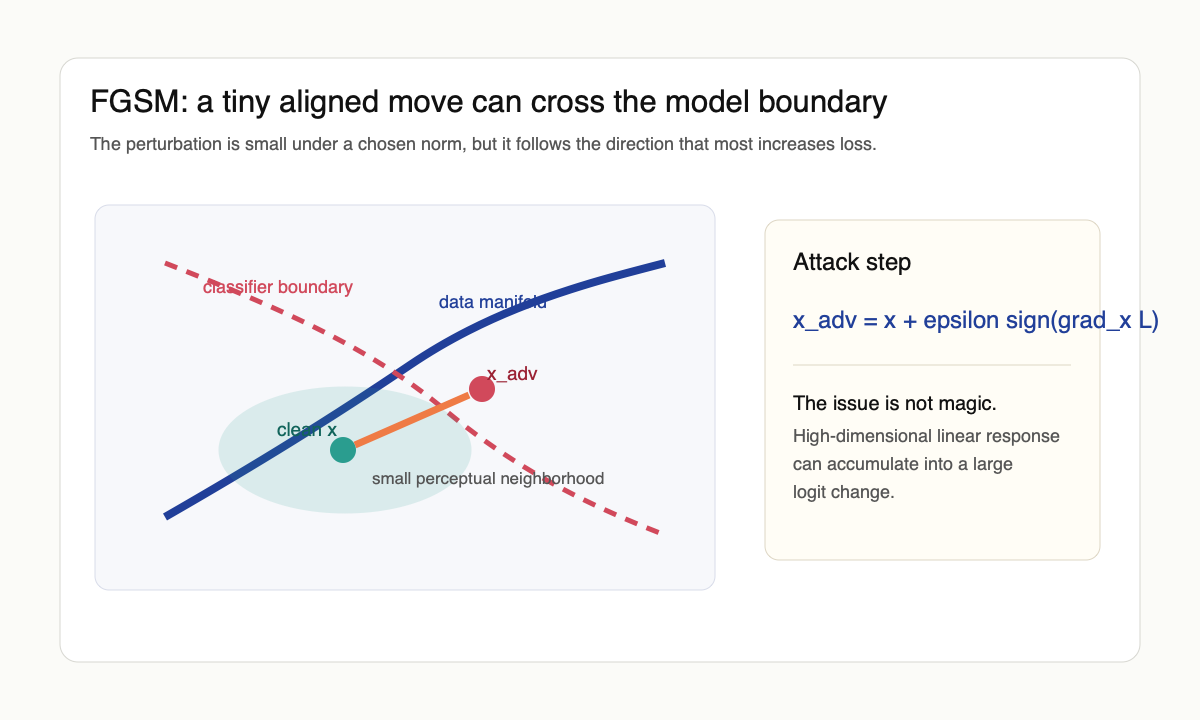

Adversarial examples first looked like a bizarre defect. Szegedy et al. [1] showed that a tiny perturbation, visually meaningless to a human, could flip a neural network's prediction with high confidence. The first temptation was to blame weird nonlinearities or overfitting. Goodfellow, Shlens, and Szegedy [2] offered a simpler explanation: a linear model with weight $w \in \mathbb{R}^d$ has logit shift $w^\top \delta$ under perturbation $\delta$, and the worst $\ell_\infty$ shift inside $\|\delta\|_\infty \le \epsilon$ is $\epsilon \|w\|_1$, attained at $\delta = \epsilon \, \mathrm{sign}(w)$. If the weights have average magnitude $m$, this is $\epsilon m d$, which grows linearly with the dimension $d$; even for a dense weight vector normalized to unit $\ell_2$ norm it is of order $\epsilon \sqrt{d}$. In an input space with thousands of dimensions even an imperceptible $\epsilon$ accumulates into a large logit change. Adversarial examples were, in this reading, less a sign of pathological non-linearity than a generic phenomenon of high-dimensional linear decision rules.
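The linear argument can be checked numerically. The sketch below is an illustration, not code from [2]: the weight magnitude `m`, the value of `eps`, and the helper names are all assumptions chosen for the example. It draws weights of fixed magnitude and compares the bound $\epsilon \|w\|_1$ with the shift actually produced by $\delta = \epsilon \, \mathrm{sign}(w)$ as the dimension grows.

```python
import random

def sign(v):
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)

def worst_case_logit_shift(w, eps):
    """Maximum of w . delta over the l_inf ball max_i |delta_i| <= eps."""
    return eps * sum(abs(wi) for wi in w)

def shift_at_sign_perturbation(w, eps):
    """Logit shift produced by the specific choice delta = eps * sign(w)."""
    return sum(wi * eps * sign(wi) for wi in w)

rng = random.Random(0)
eps = 0.01   # hypothetical per-coordinate budget
m = 0.1      # hypothetical weight magnitude
for d in (10, 1000, 100000):
    w = [rng.choice((-m, m)) for _ in range(d)]
    bound = worst_case_logit_shift(w, eps)
    achieved = shift_at_sign_perturbation(w, eps)
    # With |w_i| = m for every coordinate, both values equal eps * m * d.
    print(d, round(bound, 6), round(achieved, 6))
```

With every weight at magnitude `m`, the shift is `eps * m * d`, so a budget that moves each coordinate by only 0.01 moves the logit by about 1 at `d = 1000` and by about 100 at `d = 100000`. Rescaling the weights to unit $\ell_2$ norm (so `m = 1 / sqrt(d)`) turns the same quantity into `eps * sqrt(d)`.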

The fast-gradient-sign method made the point operational. Given an input $x$, label $y$, and loss $L$, one can form

$$x_{\mathrm{adv}} = x + \epsilon \, \mathrm{sign}(\nabla_x L(\theta, x, y)).$$

This is a single-step linearized attack on the inner maximum $\max_{\|\delta\|_\infty \le \epsilon} L(\theta, x+\delta, y)$. The fact that it works at all is the clue. The model is sensitive in directions that the data distribution and human perception do not treat as meaningful. Carlini and Wagner [7] later showed that more carefully tuned optimization-based attacks find much smaller-norm perturbations than FGSM, and used them to break defensive distillation, a defense that had looked strong against weaker attacks. The lesson is structural: a defense that fails against a smarter attack inside the same threat model was never robust to begin with.
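To make the FGSM formula concrete, here is a minimal sketch on a logistic-regression model, where the input gradient has a closed form and no autodiff library is needed. The model, the weights, and the helper names (`logistic_loss`, `input_gradient`, `fgsm`) are assumptions made for illustration; real attacks on deep networks compute the same gradient by backpropagation.

```python
import math

def sign(v):
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)

def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def logistic_loss(w, b, x, y):
    """Binary cross-entropy for a label y in {0, 1} under a linear logit."""
    p = 1.0 / (1.0 + math.exp(-logit(w, b, x)))
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))

def input_gradient(w, b, x, y):
    """Gradient of the loss with respect to x: (sigmoid(z) - y) * w."""
    p = 1.0 / (1.0 + math.exp(-logit(w, b, x)))
    return [(p - y) * wi for wi in w]

def fgsm(w, b, x, y, eps):
    """One signed-gradient step of size eps in every coordinate."""
    grad = input_gradient(w, b, x, y)
    return [xi + eps * sign(gi) for xi, gi in zip(x, grad)]

# Hypothetical model and input, chosen so the arithmetic is easy to follow.
w, b = [2.0, -1.0, 0.5], 0.0
x, y = [0.3, -0.2, 0.4], 1
x_adv = fgsm(w, b, x, y, eps=0.3)
print("clean logit:", logit(w, b, x))
print("adversarial logit:", logit(w, b, x_adv))
```

Here the clean logit is 1.0 and $\epsilon \|w\|_1 = 0.3 \times 3.5 = 1.05$, so the single step pushes the logit to about $-0.05$ and the predicted class flips. For a linear model FGSM is exactly the worst case inside the $\ell_\infty$ ball; for a deep network it is only a first-order approximation.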

The robust-optimization turn

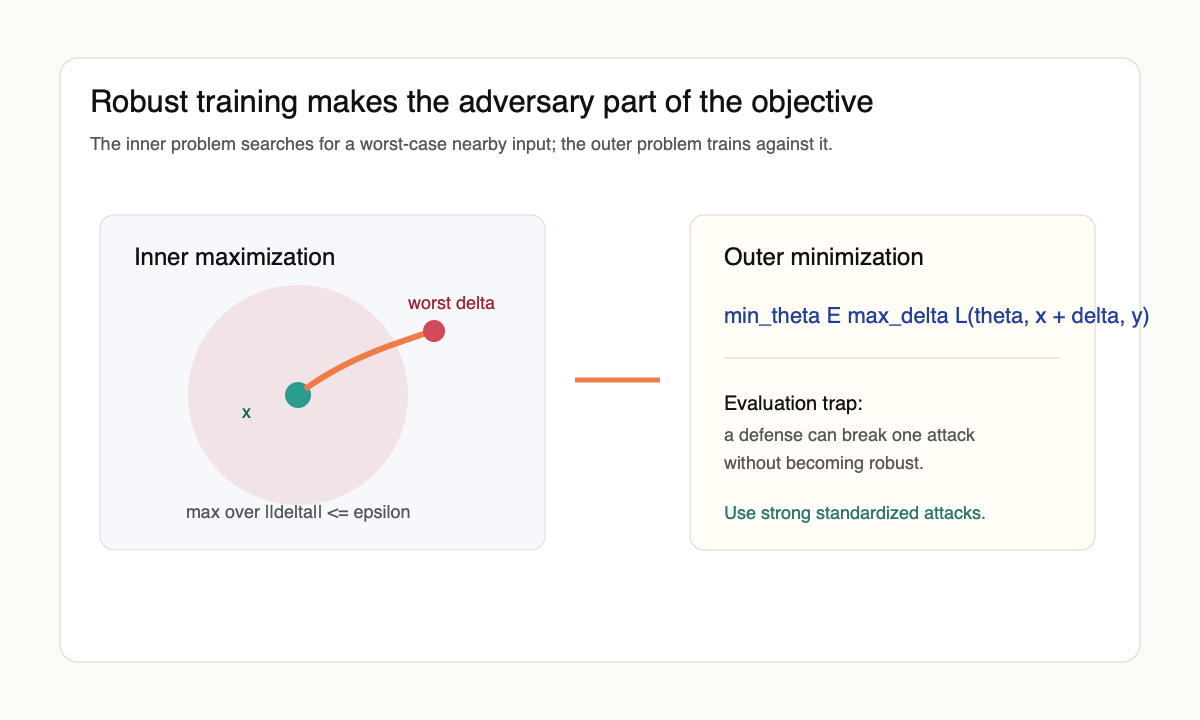

Madry et al. [3] turned adversarial training into a robust-optimization problem. Instead of minimizing the ordinary loss, train against the worst perturbation inside a ball:

$$\min_\theta \mathbb{E}_{(x,y)} \left[\max_{\|\delta\| \le \epsilon} L(\theta, x+\delta, y)\right].$$

This formulation mattered because it separated two questions that had been mixed together. The inner problem defines the adversary. The outer problem defines the trained model. Projected gradient descent then becomes both an attack and a training procedure. It is not perfect, but it made robustness measurable enough that defenses could be compared honestly.
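The split between inner and outer problems maps directly onto code. The sketch below is a toy under stated assumptions, not the training recipe from [3]: it uses a logistic-regression model, an $\ell_\infty$ ball, a deterministic zero start for PGD, plain SGD, and a six-point dataset invented for the example. `pgd_attack` approximates the inner maximization; `adversarial_train` runs the outer minimization on the points the attack returns.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def logit(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def pgd_attack(w, b, x, y, eps, step=None, iters=10):
    """Approximate the inner max by projected gradient ascent in the l_inf ball."""
    step = eps / 4 if step is None else step
    delta = [0.0] * len(x)
    for _ in range(iters):
        x_adv = [xi + di for xi, di in zip(x, delta)]
        err = sigmoid(logit(w, b, x_adv)) - y          # dL/dz for cross-entropy
        grad = [err * wi for wi in w]                  # dL/dx = dL/dz * w
        delta = [di + step * sign(gi) for di, gi in zip(delta, grad)]
        delta = [max(-eps, min(eps, di)) for di in delta]  # project onto the ball
    return [xi + di for xi, di in zip(x, delta)]

def adversarial_train(data, eps, lr=0.1, epochs=200):
    """Outer minimization: SGD on the loss at the attacked inputs."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = pgd_attack(w, b, x, y, eps)        # inner problem: the adversary
            err = sigmoid(logit(w, b, x_adv)) - y
            w = [wi - lr * err * xi for wi, xi in zip(w, x_adv)]
            b -= lr * err
    return w, b

def robust_accuracy(w, b, data, eps):
    """Fraction of points still classified correctly after the PGD attack."""
    hits = 0
    for x, y in data:
        x_adv = pgd_attack(w, b, x, y, eps)
        hits += int((logit(w, b, x_adv) > 0) == (y == 1))
    return hits / len(data)

# Hypothetical, well-separated toy data with labels in {0, 1}.
data = [([1.0, 1.2], 1), ([1.5, 0.8], 1), ([0.9, 1.1], 1),
        ([-1.0, -1.1], 0), ([-1.3, -0.7], 0), ([-0.8, -1.2], 0)]
w, b = adversarial_train(data, eps=0.3)
print("weights:", w, "bias:", b)
print("robust accuracy at eps=0.3:", robust_accuracy(w, b, data, eps=0.3))
```

The same `pgd_attack` function serves as the evaluation attack and as the training-time adversary, which is the point of the formulation. On a linear model PGD reaches the exact worst case after a few steps; on a deep network it is a heuristic, which is why a reported robust accuracy is only as trustworthy as the attack behind it.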

The field then learned a painful lesson: many proposed defenses were not robust; they only broke weak attacks. Athalye, Carlini, and Wagner [8] cataloged the failure modes under one name: obfuscated gradients. Shattered gradients (often from non-differentiable transforms), stochastic gradients (from randomized preprocessing or randomized networks), and exploding or vanishing gradients all make gradient-based attacks fail without making the classifier any harder to fool. Of the nine ICLR 2018 defenses they examined, seven relied on obfuscated gradients, and stronger attacks inside the same threat model circumvented six of them completely and one partially. AutoAttack [6] generalized that lesson into a parameter-free ensemble of attacks, which made evaluation less dependent on hand-tuning and exposed many inflated robustness claims at once.

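The other branch of progress was certified rather than empirical. Cohen, Rosenfeld, and Kolter [10] gave randomized-smoothing certificates: smooth the classifier $f$ with isotropic Gaussian noise of variance $\sigma^2$, and the smoothed classifier $g(x) = \arg\max_c \mathbb{P}_{\eta \sim \mathcal{N}(0,\sigma^2 I)}[f(x+\eta)=c]$ is provably robust within an $\ell_2$ ball of radius $\frac{\sigma}{2}\left(\Phi^{-1}(p_A) - \Phi^{-1}(p_B)\right)$, where $p_A$ is a lower bound on the top-class probability, $p_B$ is an upper bound on the runner-up probability, and $\Phi^{-1}$ is the inverse standard normal CDF. Taking $p_B = 1 - p_A$ gives the simpler radius $\sigma \Phi^{-1}(p_A)$. In practice $p_A$ is a lower confidence bound estimated by sampling, so the certificate holds with high probability over that sampling. The cost is that the radius is small in practice and only natural in $\ell_2$.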
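A certificate of this kind can be sketched with the standard library alone. The code below is a simplified illustration, not the certification procedure from [10]: it follows the same two-stage shape (a small sample to guess the top class, a fresh sample to bound its probability), but swaps in a one-sided Hoeffding bound where a tighter binomial confidence interval would normally be used, so its radii are more conservative. The function names, the sample sizes, and the toy base classifier are all assumptions.

```python
import math
import random
from statistics import NormalDist

def sample_counts(f, x, sigma, n, rng):
    """Count the labels f assigns to n Gaussian-perturbed copies of x."""
    counts = {}
    for _ in range(n):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        label = f(noisy)
        counts[label] = counts.get(label, 0) + 1
    return counts

def certify(f, x, sigma, n0=100, n=2000, alpha=0.001, seed=0):
    """Return (label, l2_radius), or (None, 0.0) when no certificate is possible."""
    rng = random.Random(seed)
    guess = sample_counts(f, x, sigma, n0, rng)
    top = max(guess, key=guess.get)                    # stage 1: pick a candidate class
    k = sample_counts(f, x, sigma, n, rng).get(top, 0) # stage 2: fresh samples
    # One-sided Hoeffding bound (assumption: used here instead of an exact
    # binomial bound): true p_A >= p_lower with probability at least 1 - alpha.
    p_lower = k / n - math.sqrt(math.log(1.0 / alpha) / (2.0 * n))
    if p_lower <= 0.5:
        return None, 0.0                               # abstain
    return top, sigma * NormalDist().inv_cdf(p_lower)

def toy_classifier(x):
    """Hypothetical base classifier: a hard threshold on the first coordinate."""
    return 1 if x[0] > 0 else 0

label, radius = certify(toy_classifier, [1.0, 0.0], sigma=0.5)
print("certified label:", label, "certified l2 radius:", radius)
```

For this toy classifier the input sits at distance 1.0 from the decision boundary, and the certified radius comes out below that. The gap between the two is the price of estimating $p_A$ from finitely many samples with a conservative bound.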

For a less paper-indexed entry point, the Gradient Science adversarial robustness page is still one of the cleanest maps of attacks, defenses, and the evaluation traps. RobustBench is the practical scoreboard once the question becomes: does this defense survive standard attacks?


The accuracy trade-off

Tsipras et al. [4] made the uncomfortable point that robustness can be at odds with standard accuracy. In their construction, the standard classifier leans on many features that are individually only weakly correlated with the label but jointly highly predictive, and that are not robust. The robust classifier must ignore them and therefore loses ordinary accuracy. The empirical version of this trade-off is more complicated, but the conceptual point survived: robustness is not merely accuracy with extra caution. It can require learning different features.
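The construction is easy to simulate. The sketch below is a simplified rendering of the kind of data model used in [4], with parameter values chosen for illustration rather than taken from the paper: one robust feature that equals the label with probability `p`, plus `d` weak features drawn from a unit-variance Gaussian with mean `eta * y`. The classifier names and the attack helper are assumptions.

```python
import math
import random

def sample(d, eta, p, rng):
    """One labelled point: a robust feature followed by d weak features."""
    y = rng.choice((-1, 1))
    x_robust = y if rng.random() < p else -y
    x_weak = [rng.gauss(eta * y, 1.0) for _ in range(d)]
    return [x_robust] + x_weak, y

def standard_classifier(x):
    """Averages the weak features; ignores the robust one."""
    return 1 if sum(x[1:]) > 0 else -1

def robust_classifier(x):
    """Uses only the robust feature."""
    return 1 if x[0] > 0 else -1

def attack(x, y, eps):
    """Worst-case l_inf shift for both classifiers: push every coordinate against y."""
    return [xi - eps * y for xi in x]

def accuracy(clf, points):
    return sum(clf(x) == y for x, y in points) / len(points)

rng = random.Random(0)
d, p = 100, 0.95                 # hypothetical sizes
eta = 3.0 / math.sqrt(d)         # weak per-feature signal
eps = 2.0 * eta                  # budget large enough to flip the weak signal
clean = [sample(d, eta, p, rng) for _ in range(2000)]
attacked = [(attack(x, y, eps), y) for x, y in clean]

print("standard, clean:   ", accuracy(standard_classifier, clean))
print("standard, attacked:", accuracy(standard_classifier, attacked))
print("robust, clean:     ", accuracy(robust_classifier, clean))
print("robust, attacked:  ", accuracy(robust_classifier, attacked))
```

The standard classifier is nearly perfect on clean data and nearly always wrong under attack, because a shift of `2 * eta` per coordinate reverses the mean of every weak feature. The robust classifier is stuck near `p` in both settings: it cannot be fooled at this budget, but it also cannot use the extra signal.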

Ilyas et al. [5] then gave the cleanest slogan: adversarial examples are not bugs, they are features. Their claim was not that every attack direction is semantically meaningful to humans. It was that standard datasets contain predictive signals that models can use and humans do not perceive as robust evidence. Adversarial perturbations exploit those signals. Robust training suppresses them.

Schmidt et al. [9] sharpened the cost in statistical terms: the sample complexity of robust learning can be polynomially larger than the sample complexity of standard learning even in benign settings. In their Gaussian model, a single sample suffices for good standard accuracy, while robust accuracy at $\ell_\infty$ radius $\epsilon$ requires on the order of $\epsilon^2 \sqrt{d}$ samples, up to logarithmic factors. That gap is not a bug of the algorithm; it is a property of the learning problem. Robustness is paying for invariance, and invariance has a sample-complexity price.

Engstrom et al. [11] then ran the experiment in the other direction: representations from robust classifiers are approximately invertible, look more semantically aligned in feature visualization, and yield gradients that resemble human-perceptible objects. Follow-up work has also reported that robust representations can transfer better to some downstream tasks. Robustness, in this reading, is also a representation-learning prior. Whether the prior helps or hurts depends on the downstream task, but it is not free of structure.

Why the story mattered

The adversarial-examples literature forced a distinction between predictive validity and human-aligned validity. A feature can be statistically real, useful for test accuracy, and still unacceptable under a robustness constraint. That is a deeper issue than security. It means the supervised-learning objective does not fully specify the invariances we care about.

This is also why adversarial robustness connects to representation learning. Robust models often learn gradients and saliency maps that look more aligned with human perception. They may sacrifice some ordinary accuracy, but the features they use are different. Whether that difference is worth the cost depends on the domain. In medical imaging, robotics, or safety-critical perception, it often is. In low-stakes classification, it may not be.

What survived

The part that survived is not the hope of a simple universal defense. The robust-learning problem remains expensive, threat-model-dependent, and easy to evaluate badly. What survived is the feature story. Standard training will use any predictive signal the data offers. Human perception defines a different equivalence class over inputs. When those two geometries disagree, adversarial examples appear.

That makes adversarial examples less like an anomaly and more like a microscope. They reveal which features the model is actually using. The image may look unchanged to us, but the classifier is not us. It lives in the geometry created by the dataset, architecture, and loss. Robustness is the attempt to make that geometry answerable to a stronger notion of sameness.

The honest reading in 2026 is that "non-robust feature" was a useful renaming of an old statistical fact: predictive validity in distribution is not causal structure, and a model that maximizes the former will exploit signals the latter does not endorse. Adversarial training is one way to fold a robustness constraint into the objective. The cleaner long-term intervention is on the data side: collect or augment so that the equivalence classes the human cares about are the equivalence classes the dataset enforces. That is harder, slower, and less fashionable than a new training algorithm, but it is what would actually close the gap rather than paper over it.

References

- [1] C. Szegedy, W. Zaremba, I. Sutskever, J. Bruna, D. Erhan, I. Goodfellow, and R. Fergus. Intriguing properties of neural networks. arXiv:1312.6199, 2013.

- [2] I. J. Goodfellow, J. Shlens, and C. Szegedy. Explaining and harnessing adversarial examples. arXiv:1412.6572, 2014.

- [3] A. Madry, A. Makelov, L. Schmidt, D. Tsipras, and A. Vladu. Towards deep learning models resistant to adversarial attacks. arXiv:1706.06083, 2017.

- [4] D. Tsipras, S. Santurkar, L. Engstrom, A. Turner, and A. Madry. Robustness may be at odds with accuracy. arXiv:1805.12152, 2018.

- [5] A. Ilyas, S. Santurkar, D. Tsipras, L. Engstrom, B. Tran, and A. Madry. Adversarial examples are not bugs, they are features. arXiv:1905.02175, 2019.

- [6] F. Croce and M. Hein. Reliable evaluation of adversarial robustness with an ensemble of diverse parameter-free attacks. arXiv:2003.01690, 2020.

- [7] N. Carlini and D. Wagner. Towards evaluating the robustness of neural networks. arXiv:1608.04644, 2016.

- [8] A. Athalye, N. Carlini, and D. Wagner. Obfuscated gradients give a false sense of security: circumventing defenses to adversarial examples. arXiv:1802.00420, 2018.

- [9] L. Schmidt, S. Santurkar, D. Tsipras, K. Talwar, and A. Madry. Adversarially robust generalization requires more data. arXiv:1804.11285, 2018.

- [10] J. M. Cohen, E. Rosenfeld, and J. Z. Kolter. Certified adversarial robustness via randomized smoothing. arXiv:1902.02918, 2019.

- [11] L. Engstrom, A. Ilyas, S. Santurkar, D. Tsipras, B. Tran, and A. Madry. Adversarial robustness as a prior for learned representations. arXiv:1906.00945, 2019.