Score matching, diffusion models, and why noise became a generator

Key terms used in this post

- score: the gradient of the log density, $\nabla_x \log p(x)$, which points toward regions of higher probability.

- forward diffusion: a process that gradually adds noise to data until its distribution is close to a simple prior.

- reverse process: learned denoising dynamics that transform noise back into data.

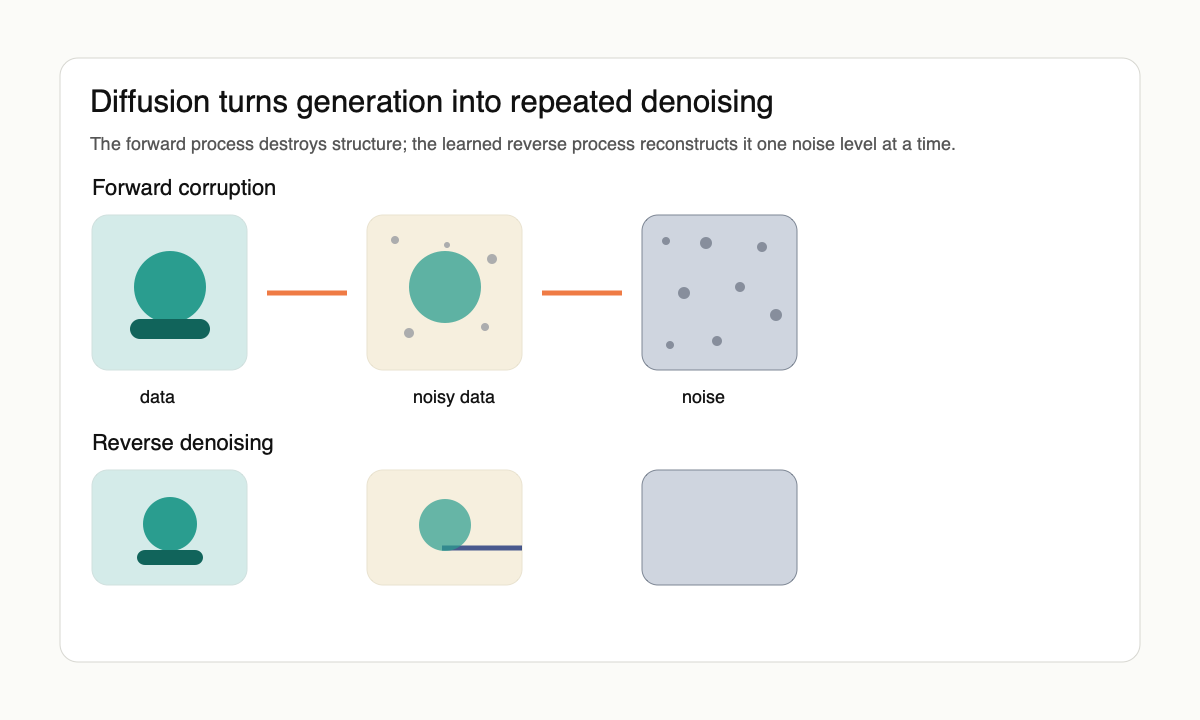

The surprising thing about diffusion models is not that they add noise. Adding noise is easy. The surprising thing is that adding noise in the right way turns generation into a sequence of supervised denoising problems. Sohl-Dickstein et al. [1] framed this as nonequilibrium thermodynamics: slowly destroy structure with a forward process, then learn a reverse process that reconstructs it.

At a high level, the forward chain starts with data $x_0$ and produces increasingly noisy variables $x_1, \ldots, x_T$. Each one can be sampled in closed form as $x_t = \sqrt{\bar\alpha_t}\, x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$, where the noise schedule $\bar\alpha_t \in (0, 1)$ decreases with $t$ and is chosen so that $\bar\alpha_T \approx 0$ and $x_T$ is approximately standard Gaussian. Sampling runs the chain backward. If the model approximates $p(x_{t-1} \mid x_t)$ well at each step, then starting from Gaussian noise and repeatedly denoising produces an approximate sample from the data distribution.
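As a minimal sketch of the closed-form forward step, the snippet below builds $\bar\alpha_t$ as a cumulative product $\prod_{s \le t}(1-\beta_s)$ and draws $x_t$ from $x_0$. The linear `beta` schedule and its endpoint values are assumptions chosen for illustration, as are the helper names `make_alpha_bar` and `q_sample`; the post itself does not fix a schedule.

```python
import math
import random

def make_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar[t] for a linear beta schedule (assumed values)."""
    alpha_bar = []
    running = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar

def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps for a list x0.

    `t` is a 0-based index into alpha_bar. Returns (x_t, eps) so that eps
    can serve as the regression target for a noise-prediction model.
    """
    a = alpha_bar[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * xi + math.sqrt(1.0 - a) * ei for xi, ei in zip(x0, eps)]
    return xt, eps

alpha_bar = make_alpha_bar()
rng = random.Random(0)
x0 = [1.0, -2.0, 0.5]
x_early, _ = q_sample(x0, 0, alpha_bar, rng)                  # almost clean
x_late, _ = q_sample(x0, len(alpha_bar) - 1, alpha_bar, rng)  # almost pure noise
```

Because $x_t$ is available in one shot for any $t$, training can sample a random noise level per example instead of simulating the whole chain.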

The score-matching connection

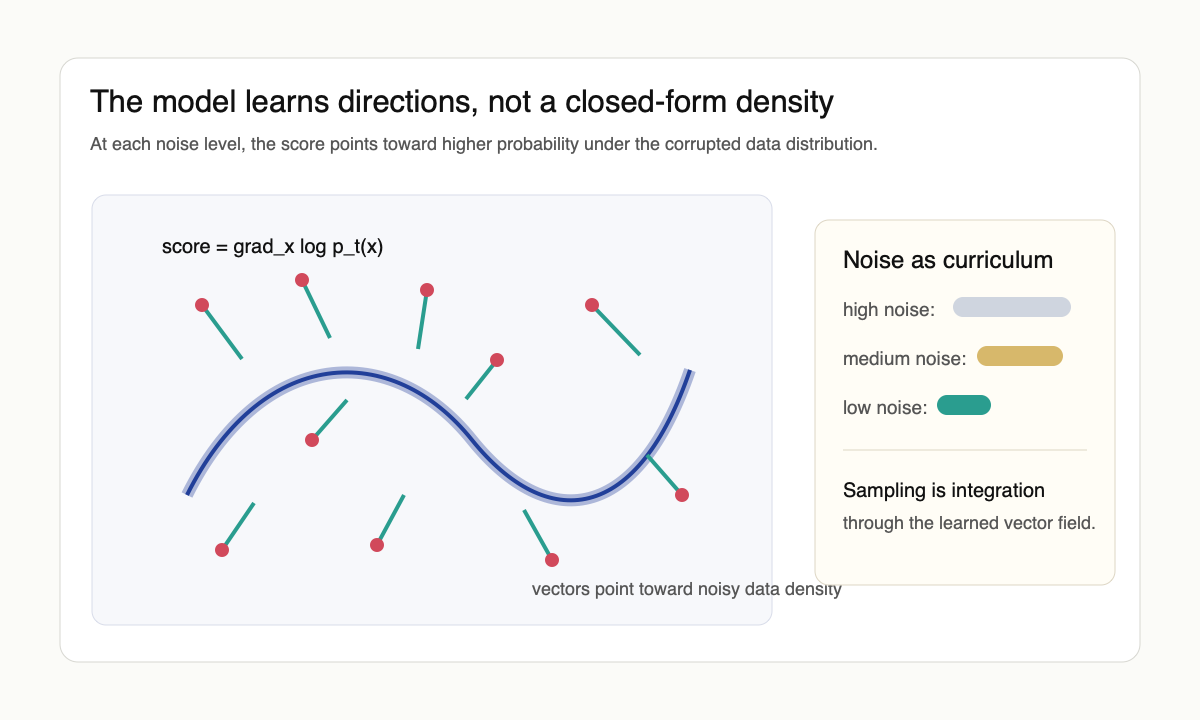

The bridge to modern diffusion models is score matching. The score $s(x, t) = \nabla_x \log p_t(x)$ points in the direction of higher density at noise level $t$. The original score-matching objective of Hyvärinen [6] avoids the unknown normalizing constant by minimizing the expectation of $\tfrac{1}{2}\|s_\theta(x)\|^2 + \mathrm{tr}(\nabla_x s_\theta(x))$ over the data, but the trace of the Jacobian is expensive in high dimensions. Vincent's denoising score matching [7] gave the trick that made the modern approach tractable. Corrupt the data with Gaussian noise of scale $\sigma$, $\tilde x = x + \sigma\epsilon$, and write $p_\sigma$ for the density of $\tilde x$; the optimal denoiser $D^*(\tilde x) = \mathbb{E}[x \mid \tilde x]$ then satisfies $\nabla_{\tilde x} \log p_\sigma(\tilde x) = (D^*(\tilde x) - \tilde x)/\sigma^2$. Predicting the noise (or equivalently the clean image) is therefore equivalent to estimating the score of the noised distribution, and the loss is just an MSE.
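The denoiser identity can be checked numerically on a toy case. The sketch below assumes one-dimensional data that takes the values $+1$ and $-1$ with equal probability, so the noised density is a two-component Gaussian mixture and the optimal denoiser is $\tanh(\tilde x/\sigma^2)$. The toy distribution and all function names are illustrative assumptions, not anything taken from [6] or [7].

```python
import math

def log_p_sigma(x, sigma):
    """Log density of 0.5*N(+1, sigma^2) + 0.5*N(-1, sigma^2)."""
    def log_normal(mean):
        return -0.5 * ((x - mean) / sigma) ** 2 - math.log(sigma * math.sqrt(2 * math.pi))
    a, b = log_normal(1.0), log_normal(-1.0)
    m = max(a, b)  # log-sum-exp for numerical stability
    return m + math.log(0.5 * math.exp(a - m) + 0.5 * math.exp(b - m))

def optimal_denoiser(x, sigma):
    """Posterior mean E[x_clean | x_noisy] for the +/-1 toy data."""
    return math.tanh(x / sigma ** 2)

def score_from_denoiser(x, sigma):
    """Score via the identity (D*(x) - x) / sigma^2."""
    return (optimal_denoiser(x, sigma) - x) / sigma ** 2

def score_finite_difference(x, sigma, h=1e-5):
    """Central-difference estimate of d/dx log p_sigma(x)."""
    return (log_p_sigma(x + h, sigma) - log_p_sigma(x - h, sigma)) / (2 * h)

# Largest disagreement between the two score estimates over a small grid.
max_gap = max(
    abs(score_from_denoiser(x, s) - score_finite_difference(x, s))
    for x in (-2.0, -0.3, 0.0, 0.7, 1.5)
    for s in (0.5, 1.0, 2.0)
)
```

The two estimates should agree up to finite-difference error, which is the sense in which a trained denoiser is a score estimator.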

Song and Ermon [2] trained networks to estimate these scores across multiple noise scales and then used annealed Langevin dynamics to sample. Ho, Jain, and Abbeel [3] showed that DDPMs could be trained with a reweighted variational bound whose simplified form is a noise-prediction MSE, which matches denoising score matching at multiple noise levels up to a per-level weighting. The conceptual compression is: predicting the noise added to an example is enough to learn the score of the noisy distribution, since in the forward-process notation above $\nabla_{x_t} \log p_t(x_t) = -\mathbb{E}[\epsilon \mid x_t]/\sqrt{1-\bar\alpha_t}$. Once the model knows how to denoise at every noise level, sampling is just the repeated application of those local denoising fields.
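A rough sketch of annealed Langevin dynamics in the spirit of [2] follows. To stay self-contained it replaces the trained network with the exact score of a toy distribution (data at $+1$ and $-1$, noised at scale $\sigma$). The geometric noise levels, the step-size constant `eps`, and the step count are illustrative assumptions, not tuned values from the paper.

```python
import math
import random

def score(x, sigma):
    """Exact score of 0.5*N(+1, sigma^2) + 0.5*N(-1, sigma^2).

    Stands in for a learned network s_theta(x, sigma).
    """
    return (math.tanh(x / sigma ** 2) - x) / sigma ** 2

def geometric_sigmas(sigma_max=2.0, sigma_min=0.05, num_levels=10):
    """Noise levels decreasing geometrically from sigma_max to sigma_min."""
    ratio = (sigma_min / sigma_max) ** (1.0 / (num_levels - 1))
    return [sigma_max * ratio ** i for i in range(num_levels)]

def annealed_langevin(rng, sigmas, steps_per_level=100, eps=5e-4):
    """Run Langevin updates at each noise level, from coarse to fine."""
    x = rng.gauss(0.0, sigmas[0])  # broad initialization (toy assumption)
    for sigma in sigmas:
        # Step size scaled with sigma^2 so each level moves comparably.
        alpha = eps * sigma ** 2 / sigmas[-1] ** 2
        for _ in range(steps_per_level):
            z = rng.gauss(0.0, 1.0)
            x = x + 0.5 * alpha * score(x, sigma) + math.sqrt(alpha) * z
    return x

rng = random.Random(0)
sigmas = geometric_sigmas()
samples = [annealed_langevin(rng, sigmas) for _ in range(200)]
```

At the high noise levels the chain moves freely between the two modes; by the last level each sample has settled near one of them, which is the point of annealing.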

The SDE view

Song et al. [4] unified the discrete and score-based pictures with stochastic differential equations. The forward process is an Itô SDE $dx_t = f(x_t, t)\,dt + g(t)\,dw_t$ that continuously turns data into noise. Anderson's reverse-time formula gives a backward SDE driven by the score, $dx_t = [f(x_t, t) - g(t)^2 \nabla_x \log p_t(x_t)]\,dt + g(t)\,d\bar w_t$. There is also a deterministic probability-flow ODE with the same marginal distributions, $dx_t = [f(x_t, t) - \tfrac{1}{2}g(t)^2 \nabla_x \log p_t(x_t)]\,dt$, useful both for likelihood evaluation and for fast sampling. This view made it clear that DDPMs, score-based models, and probability-flow ODE samplers were not separate inventions. They were different discretizations or parameterizations of the same underlying dynamics.
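To make the probability-flow ODE concrete, here is a small sketch under strong simplifying assumptions: one-dimensional Gaussian data $\mathcal{N}(0, s^2)$ and the forward SDE $dx_t = dw_t$ (so $f = 0$, $g = 1$), for which $p_t = \mathcal{N}(0, s^2 + t)$ and the score is known exactly. The constants and function names are hypothetical, and plain Euler steps stand in for the better solvers used in practice.

```python
import math

S_DATA = 0.5   # std of the toy data distribution N(0, S_DATA^2) (assumption)
T_END = 25.0   # final time; p_T = N(0, S_DATA^2 + T_END)

def score(x, t):
    """Exact score of p_t = N(0, S_DATA^2 + t) under dx = dw."""
    return -x / (S_DATA ** 2 + t)

def probability_flow_drift(x, t):
    """Right-hand side f - 0.5 * g^2 * score, with f = 0 and g = 1."""
    f, g = 0.0, 1.0
    return f - 0.5 * g ** 2 * score(x, t)

def integrate_backward(x_T, num_steps=5000):
    """Euler steps from t = T_END down to t = 0."""
    dt = T_END / num_steps
    x = x_T
    for k in range(num_steps):
        t = T_END - k * dt
        x = x - dt * probability_flow_drift(x, t)
    return x

def exact_map(x_T):
    """Closed-form solution of the same ODE for this Gaussian toy case."""
    return x_T * math.sqrt(S_DATA ** 2 / (S_DATA ** 2 + T_END))

x0_numeric = integrate_backward(1.0)
x0_exact = exact_map(1.0)
```

In this toy case the ODE simply rescales a draw from $\mathcal{N}(0, s^2 + T)$ so that its standard deviation becomes $s$; no noise is injected along the way, which is what makes the map deterministic and invertible.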

The SDE view also separated modeling from numerics. One can change the noise schedule, the solver, the parameterization, or the preconditioning without changing the basic score-learning problem. Lu et al.'s DPM-Solver [10] exploited the semi-linear structure of the probability-flow ODE to drop sampling from a thousand steps to ten or twenty without retraining. That separation is what made later engineering improvements legible.

For the friendly long-form versions, Lilian Weng's diffusion overview, Yang Song's score-based modeling post, and Sander Dieleman's perspectives on diffusion are better entry points than another derivation of the ELBO.

Why the design space mattered

Karras et al. [5] argued that diffusion practice had become unnecessarily tangled: sampling schedule, loss weighting, noise parameterization, network preconditioning, and solver choice were all mixed together. By separating those choices, they showed that much of the performance gain came not from a new generative principle but from cleaning up the design space. Their EDM recipe (continuous-time noise schedule, $\sigma$-conditioned preconditioning, second-order Heun solver) is now the practical default that subsequent papers either adopt or argue against.
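Two of those separated choices are easy to write down. The sketch below gives the sampling noise schedule and the $\sigma$-dependent preconditioning coefficients as I understand them from [5]; the default values (`rho=7`, `sigma_min=0.002`, `sigma_max=80`, `sigma_data=0.5`) and the function names should be treated as assumptions to check against the paper, not as a reference implementation.

```python
import math

def karras_sigmas(num_steps=18, sigma_min=0.002, sigma_max=80.0, rho=7.0):
    """Noise levels interpolated linearly in sigma^(1/rho), from high to low."""
    inv = 1.0 / rho
    hi, lo = sigma_max ** inv, sigma_min ** inv
    return [(hi + i / (num_steps - 1) * (lo - hi)) ** rho for i in range(num_steps)]

def edm_preconditioning(sigma, sigma_data=0.5):
    """Scalings for D(x; sigma) = c_skip * x + c_out * F(c_in * x, c_noise).

    F denotes the raw network; the coefficients keep its inputs and
    regression targets at roughly unit variance across noise levels.
    """
    total = sigma ** 2 + sigma_data ** 2
    return {
        "c_skip": sigma_data ** 2 / total,
        "c_out": sigma * sigma_data / math.sqrt(total),
        "c_in": 1.0 / math.sqrt(total),
        "c_noise": 0.25 * math.log(sigma),
    }

sigmas = karras_sigmas()
coeffs_low_noise = edm_preconditioning(sigmas[-1])   # c_skip close to 1
coeffs_high_noise = edm_preconditioning(sigmas[0])   # c_skip close to 0
```

At low noise the skip connection passes the input through almost unchanged and the network only predicts a small correction; at high noise the network output dominates.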

This is a recurring pattern in deep learning. A theoretical object becomes practical only after the parameterization is made friendly to optimization. Dhariwal and Nichol [8] showed diffusion beating GANs on ImageNet by combining classifier guidance with architectural and training improvements. Ho and Salimans [9] then dropped the auxiliary classifier in favor of classifier-free guidance, which jointly trains conditional and unconditional scores and combines them at sampling time. Rombach et al. [11] moved the entire diffusion process into the latent space of a pretrained autoencoder; that single change is what made high-resolution diffusion practical at academic compute budgets and is the architectural backbone of Stable Diffusion. The score is the invariant idea. The noise schedule, preconditioning, solver, guidance, and latent space are the machinery that makes the idea work at scale.
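The sampling-time combination in classifier-free guidance is a one-line extrapolation. The sketch below uses the $(1+w)$ convention from [9]; the function name `cfg_combine` and the list-based representation of the noise predictions are illustrative assumptions.

```python
def cfg_combine(eps_cond, eps_uncond, w):
    """Guided noise prediction (1 + w) * eps_cond - w * eps_uncond.

    w = 0 recovers the purely conditional prediction; larger w pushes
    further away from the unconditional one.
    """
    return [(1.0 + w) * c - w * u for c, u in zip(eps_cond, eps_uncond)]

eps_cond = [1.0, 2.0]     # hypothetical conditional prediction
eps_uncond = [0.0, 1.0]   # hypothetical unconditional prediction
guided = cfg_combine(eps_cond, eps_uncond, w=1.0)
```

Many codebases write the same rule as `eps_uncond + scale * (eps_cond - eps_uncond)` with `scale = 1 + w`, so it is worth checking which convention a given guidance value refers to.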

What diffusion explained

Diffusion models made the manifold problem manageable. Natural data lives near a complicated low-dimensional set where densities are hard to model directly. By adding noise, we thicken the manifold into smoother distributions. At high noise levels the distribution is simple. At low noise levels it is detailed but local. The model never has to learn the entire data density in one shot; it learns many easier denoising problems.

They also gave generative modeling a stable supervised objective. GANs were fast but adversarial. Autoregressive models had tractable likelihoods but generated sequentially. Flows were exact but architecturally constrained. Diffusion models traded sampling speed for a forgiving training objective and excellent sample quality. Later samplers clawed back much of the speed.

What remains open

The theory still does not fully explain semantic abstraction. The score field tells us how to move from noisy samples toward data, but it does not by itself say why text prompts, guidance, latent spaces, or multimodal conditioning organize concepts the way they do. Classifier-free guidance, latent diffusion, and text conditioning sit on top of the score-matching core; none of them are forced by it.

The other open question, the one that became sharper over 2024–2025, is whether diffusion is the right parameterization at all. Flow matching [12] formulates generation as learning a vector field that transports a simple prior to the data distribution along straight or curved paths, with a regression objective that does not require simulating an SDE or ODE during training. Rectified flow [13] specializes the path geometry to nearly straight trajectories so that few-step sampling becomes accurate. Consistency models [14] learn to map any point on a probability-flow ODE trajectory directly to its endpoint, either by distilling a pretrained diffusion model or by training from scratch, which enables one-step generation, usually at some cost in sample quality relative to multi-step sampling. These are not competitors to score-based generative modeling so much as alternative parameterizations of the same vector-field-estimation problem. The honest reading is that the underlying generative principle is score (or velocity) estimation across noise levels, and the noise-schedule formalism is one historically convenient way to write it down.
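For comparison with noise prediction, here is a minimal sketch of the regression target on a straight-line path, the simplest case shared by flow matching [12] and rectified flow [13]. It assumes the convention that $x_0$ is a noise sample and $x_1$ a data sample, with $x_t = (1-t)\,x_0 + t\,x_1$. The function names and the placeholder `velocity_model` callables are hypothetical.

```python
import random

def interpolate(x0, x1, t):
    """Point on the straight path from noise x0 (t = 0) to data x1 (t = 1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]

def target_velocity(x0, x1):
    """Time derivative of the straight path; constant in t."""
    return [b - a for a, b in zip(x0, x1)]

def flow_matching_loss(velocity_model, x0, x1, t):
    """Mean squared error between predicted and target velocity at x_t."""
    xt = interpolate(x0, x1, t)
    pred = velocity_model(xt, t)
    target = target_velocity(x0, x1)
    return sum((p - v) ** 2 for p, v in zip(pred, target)) / len(target)

rng = random.Random(0)
x1 = [1.0, -2.0]                          # a "data" point
x0 = [rng.gauss(0.0, 1.0) for _ in x1]    # a noise sample
t = rng.random()

def zero_model(xt, t):
    """Untrained stand-in that always predicts zero velocity."""
    return [0.0 for _ in xt]

def oracle_model(xt, t):
    """Cheats by knowing this particular pair; gives zero loss."""
    return target_velocity(x0, x1)

loss_untrained = flow_matching_loss(zero_model, x0, x1, t)
loss_oracle = flow_matching_loss(oracle_model, x0, x1, t)
```

Structurally this is the same recipe as denoising: sample a corruption level, build the corrupted point in closed form, and regress onto a target known from the pair.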

The clean lesson is that generation can be learned by reversing corruption. Noise is not just damage. It is a coordinate system in which the data distribution can be approached gradually. That is why diffusion models felt strange at first and obvious afterward, and why the next generation of generative models will probably look like further refinements of the same coordinate change rather than a different idea.

References

- [1] J. Sohl-Dickstein, E. A. Weiss, N. Maheswaranathan, and S. Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. arXiv:1503.03585, 2015.

- [2] Y. Song and S. Ermon. Generative modeling by estimating gradients of the data distribution. arXiv:1907.05600, 2019.

- [3] J. Ho, A. Jain, and P. Abbeel. Denoising diffusion probabilistic models. arXiv:2006.11239, 2020.

- [4] Y. Song, J. Sohl-Dickstein, D. P. Kingma, A. Kumar, S. Ermon, and B. Poole. Score-based generative modeling through stochastic differential equations. arXiv:2011.13456, 2020.

- [5] T. Karras, M. Aittala, T. Aila, and S. Laine. Elucidating the design space of diffusion-based generative models. arXiv:2206.00364, 2022.

- [6] A. Hyvärinen. Estimation of non-normalized statistical models by score matching. Journal of Machine Learning Research, 6:695–709, 2005.

- [7] P. Vincent. A connection between score matching and denoising autoencoders. Neural Computation, 23(7):1661–1674, 2011.

- [8] P. Dhariwal and A. Nichol. Diffusion models beat GANs on image synthesis. arXiv:2105.05233, 2021.

- [9] J. Ho and T. Salimans. Classifier-free diffusion guidance. arXiv:2207.12598, 2022.

- [10] C. Lu, Y. Zhou, F. Bao, J. Chen, C. Li, and J. Zhu. DPM-Solver: a fast ODE solver for diffusion probabilistic model sampling in around 10 steps. arXiv:2206.00927, 2022.

- [11] R. Rombach, A. Blattmann, D. Lorenz, P. Esser, and B. Ommer. High-resolution image synthesis with latent diffusion models. arXiv:2112.10752, 2021.

- [12] Y. Lipman, R. T. Q. Chen, H. Ben-Hamu, M. Nickel, and M. Le. Flow matching for generative modeling. arXiv:2210.02747, 2022.

- [13] X. Liu, C. Gong, and Q. Liu. Flow straight and fast: learning to generate and transfer data with rectified flow. arXiv:2209.03003, 2022.

- [14] Y. Song, P. Dhariwal, M. Chen, and I. Sutskever. Consistency models. arXiv:2303.01469, 2023.