Implicit bias in gradient descent and the max-margin ghost

Key terms used in this post

- implicit bias

- the tendency of an optimization algorithm to select one solution among many even when no explicit regularizer appears in the objective.

- max-margin classifier

- the separator that maximizes the smallest normalized margin on the training set.

- optimization geometry

- the norm, potential, or coordinate system with respect to which an algorithm takes steepest descent steps.

One of the simplest puzzles in modern deep learning is that we often train models without an explicit regularizer, drive the training loss nearly to zero, and still get a solution that generalizes. The classical answer would be that the objective must contain a hidden penalty. The implicit-bias answer is subtler: even when the objective has many minimizers, the path taken by gradient descent is not neutral. The algorithm itself chooses a kind of solution.

Zhang et al. [2] sharpened the version of the puzzle that everyone now starts from. Standard image-classification networks can fit random labels just as well as real labels, which kills the simplest capacity-based explanation: the hypothesis class is large enough to memorize. Whatever does the work of generalization must therefore be something the optimizer (or the data, or the parameterization) brings to the table, not something the loss imposes.

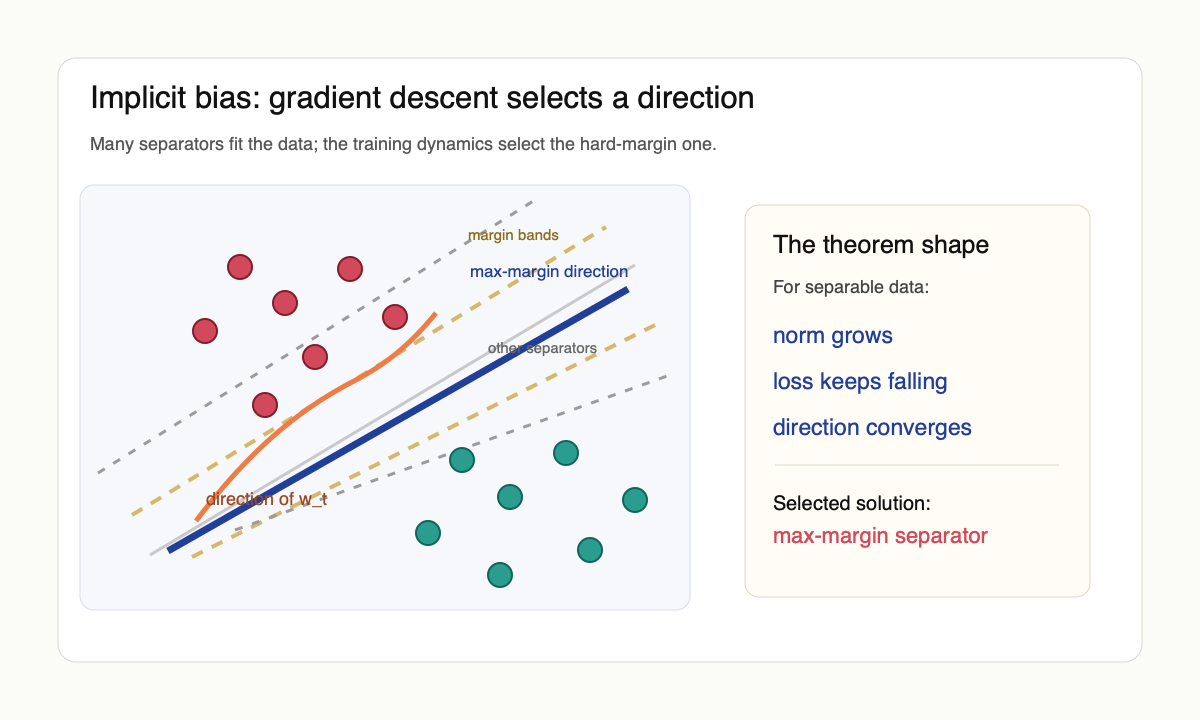

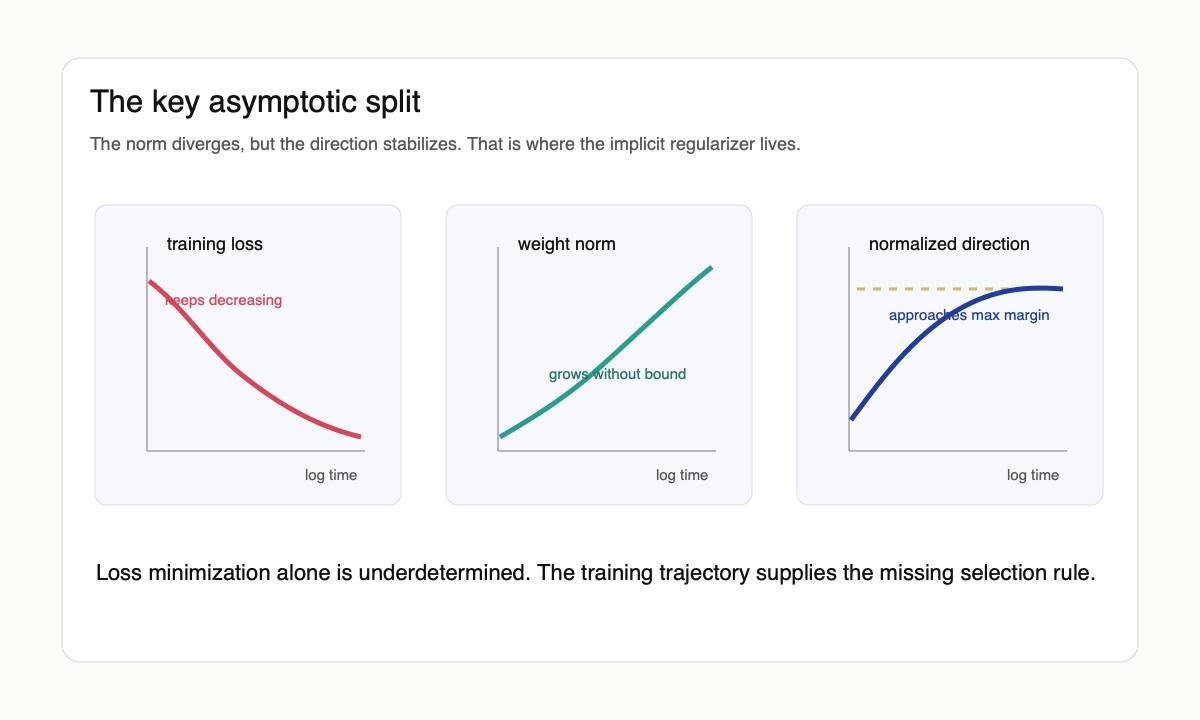

The cleanest version of this story is not about neural networks at all. It is about logistic regression on linearly separable data. Once the classifier separates the data, the empirical classification error is already zero, but the logistic loss keeps decreasing as the weight norm grows. There is no finite minimizer. The surprising fact proved by Soudry et al. [1] is that the direction of the weights still converges. It converges to the hard-margin support-vector-machine solution.

The linear theorem

For separable data $(x_i, y_i)$ with $y_i \in \{-1, 1\}$, unregularized gradient descent on losses with exponential tails sends $\|w_t\|$ to infinity while the normalized direction $w_t / \|w_t\|$ converges to the solution of

$$\min_w \|w\|_2^2 \quad \text{subject to} \quad y_i \langle w, x_i\rangle \ge 1.$$

That is already a strange theorem. The logistic objective never says "maximize the margin." The update rule says only "decrease the loss." Yet the asymptotic path decomposes as $w_t = \log(t)\,\hat{w}_{\mathrm{SVM}} + r_t$ with $\|r_t\| = O(\log\log t)$, where $\hat{w}_{\mathrm{SVM}}$ is the unit-norm hard-margin direction. The exponential tail of the loss does the work: once the classifier separates, the gradient is dominated by the support vectors, and the direction stabilizes while the magnitude grows logarithmically because halving the loss costs a multiplicative time factor. Gradient descent therefore behaves as if it had been regularized toward the Euclidean max-margin classifier even though no regularizer was written down.

Ji and Telgarsky [5] sharpened the rate and removed assumptions on the data, and Nacson et al. [6] showed that aggressive learning-rate schedules can speed convergence to the max-margin direction by polynomial factors over plain GD. The geometry survived; only the constants moved.

This is why the result became central to the deep-learning conversation. It gave a concrete mechanism by which overparameterized training can be underdetermined as an optimization problem but determined as an algorithmic process. If there are infinitely many interpolating solutions, the question is not only what the loss permits. It is what the optimizer can reach.

Where the geometry enters

Gunasekar et al. [3] made the point sharper. Different optimization geometries imply different implicit biases. Steepest descent with respect to the $\ell_p$ norm sends $w_t / \|w_t\|_p$ to the $\ell_p$-margin maximizer rather than the $\ell_2$ one. Mirror descent with potential $\Phi$ sends the iterate to the $\Phi$-min-norm interpolant. Natural-gradient and adaptive-method updates fit the same template: the geometry of the step is the geometry of the implicit penalty.

The linear convolutional-network result [4] is the useful cautionary example. For fully connected linear predictors, gradient descent recovers the familiar margin geometry. For full-width linear convolutional networks of depth $L$, the selected predictor is tied to a $2/L$-bridge penalty in the discrete Fourier domain. The architecture changes the parameterization; the parameterization changes the path; the path changes the implicit regularizer. "Gradient descent likes simple solutions" is too vague to be a theorem. Simple in which coordinates?

Margin in homogeneous networks

Lyu and Li [7] extended the margin story past the linear case. If $f_\theta$ is positive-homogeneous of degree $L$ in $\theta$ (which holds for ReLU networks without bias, with $L$ equal to the depth), gradient flow on the exponential or logistic loss drives the rescaled iterate $\theta_t / \|\theta_t\|$ to a Karush–Kuhn–Tucker point of the parameter-space margin program $\max_{\|\theta\| \le 1} \min_i y_i f_\theta(x_i)$. The norm still diverges and the direction still stabilizes; only the geometry of the program is now nonconvex.

Chizat and Bach [8] proved the corresponding mean-field statement for two-layer networks at vanishing initialization. The implicit bias is now $F_1$-norm minimization in function space, which is genuinely a different object from parameter-space margin and which interacts very differently with the data. The takeaway is uncomfortable: linear, homogeneous-parametric, and mean-field stories all give clean theorems, and the theorems agree on the linear case but disagree about how to extrapolate. The right limit to take is not obvious from the experiment.

What stochasticity changes

The Soudry result is for full-batch gradient descent on the logistic loss. SGD, label noise, and adaptive methods carry their own biases that are not always the same. Pesme, Pillaud-Vivien, and Flammarion [9] show that for diagonal linear networks, SGD with label noise effectively penalizes high-variance directions and converges to an $\ell_1$-style sparse predictor rather than the $\ell_2$ max-margin one. Moroshko et al. [10] characterize weight normalization, where the rescaling by $\|\theta\|$ inserts a different geometry into the same flow. Adam, RMSProp, and SAM each shift the picture again. The lesson is not that the linear theorem is wrong; the lesson is that the bias is supplied jointly by loss, parameterization, optimizer, and noise, and dropping any one of those modifies the answer.

For the surrounding argument, OffConvex is useful because it treats optimizers as dynamical systems rather than mere minimizers, and the entries on implicit regularization and on the geometry of margin are the right next read. Ben Recht's Arg Min is the skeptical companion when the story becomes too neat. Suriya Gunasekar's page aggregates the technical sequence in one place.

View the implicit-regularization discussion on X

The leap to deep networks

The linear theorem is beautiful partly because it is narrow. It assumes separability, a homogeneous linear predictor, and a loss whose infimum is reached only at infinity. Deep networks add nonconvexity, feature learning, scale symmetries, normalization layers, stochastic gradients, adaptive optimizers, and learning-rate schedules. The theorem does not automatically survive that list.

Still, the theorem changed the default question. Earlier generalization arguments often tried to find an explicit capacity measure after training: norm bounds, flatness, compression, PAC-Bayes certificates. The implicit-bias view asks instead what trajectory produced the weights. Two networks can represent the same function, or two functions can achieve the same training loss, while the optimizer strongly prefers one over the other. That preference is part of the statistical model.

For homogeneous networks, one recurring theme is margin growth in function space while parameter norms keep expanding along scale-invariant directions. For adaptive optimizers, another theme is that the geometry is no longer Euclidean, so one should not expect the same max-margin solution. For batch normalization and weight normalization, even the meaning of moving "far" in parameter space changes. This is not a small technicality; it is the entire question.

What survived

The durable lesson is not that deep networks are secretly SVMs. They are not. The durable lesson is that zero training loss does not make all interpolating solutions equally plausible. The optimizer carries information. Initialization carries information. The schedule carries information. In overparameterized systems, those choices are not implementation details; they are part of the inductive bias.

This also reframes the flat-minima and edge-of-stability stories. Flatness asks what kind of local geometry the solution has. Edge of stability asks what kind of sharpness the training dynamics can tolerate. Implicit bias asks why this solution was selected at all. These are three different projections of the same object: the training trajectory.

The gap is that we still lack a theorem of the Soudry type for realistic deep networks. We have exact answers for stylized models and suggestive measurements for real ones. That is not a failure. It is a map of where the theory is honest.

My own reading is that the implicit-bias program is the cleanest example of a research direction being vindicated and outgrown at the same time. Soudry et al. is true; the mechanism is real; the linear case is the only setting where I can prove anything I trust. What is unclear is whether the same phenomenon is the dominant explanation for why large feature-learning networks generalize, or whether at scale the data distribution and the architecture have already done so much of the work that the optimizer’s preference is a small correction. I currently believe the second, but I do not have a falsifier I trust, which is exactly the position the field is in.

References

- [1] D. Soudry, E. Hoffer, M. S. Nacson, S. Gunasekar, and N. Srebro. The implicit bias of gradient descent on separable data. arxiv 1710.10345, 2017.

- [2] C. Zhang, S. Bengio, M. Hardt, B. Recht, and O. Vinyals. Understanding deep learning requires rethinking generalization. arxiv 1611.03530, 2016.

- [3] S. Gunasekar, J. Lee, D. Soudry, and N. Srebro. Characterizing implicit bias in terms of optimization geometry. arxiv 1802.08246, 2018.

- [4] S. Gunasekar, J. Lee, D. Soudry, and N. Srebro. Implicit bias of gradient descent on linear convolutional networks. arxiv 1806.00468, 2018.

- [5] Z. Ji and M. Telgarsky. Risk and parameter convergence of logistic regression. arxiv 1810.02032, 2018.

- [6] M. S. Nacson, J. Lee, S. Gunasekar, P. H. P. Savarese, N. Srebro, and D. Soudry. Convergence of gradient descent on separable data. arxiv 1905.07325, 2019.

- [7] K. Lyu and J. Li. Gradient descent maximizes the margin of homogeneous neural networks. arxiv 1906.05890, 2019.

- [8] L. Chizat and F. Bach. Implicit bias of gradient descent for wide two-layer neural networks trained with the logistic loss. arxiv 2002.04486, 2020.

- [9] S. Pesme, L. Pillaud-Vivien, and N. Flammarion. Implicit bias of SGD for diagonal linear networks: a provable benefit of stochasticity. arxiv 2106.09524, 2021.

- [10] E. Moroshko, S. Gunasekar, B. Woodworth, J. D. Lee, N. Srebro, and D. Soudry. Implicit bias in deep linear classification: initialization scale vs training accuracy. arxiv 2007.06738, 2020.