Mode connectivity and the case for one wide basin

Key terms used in this post

- mode connectivity: the observation that separately trained neural-network solutions can often be connected by paths with little or no loss barrier.

- linear mode connectivity: the stronger property that the straight line between two solutions has low loss, often after alignment or shared training history.

- permutation symmetry: the fact that hidden units can often be reordered without changing the function represented by the network.

The old picture of a neural-network loss landscape was a field of isolated valleys: many different minima, separated by high barriers, each found by a different random seed. Freeman and Bruna [6] argued early that, for sufficiently overparameterized rectified networks, the level sets of the loss are connected. That was a theoretical hint. Mode connectivity made it an experimental fact. Garipov et al. [1] and Draxler et al. [2] showed that independently trained networks can often be connected by simple low-loss curves. The minima are not isolated points in separate valleys. They are more like entrances to a large connected region.

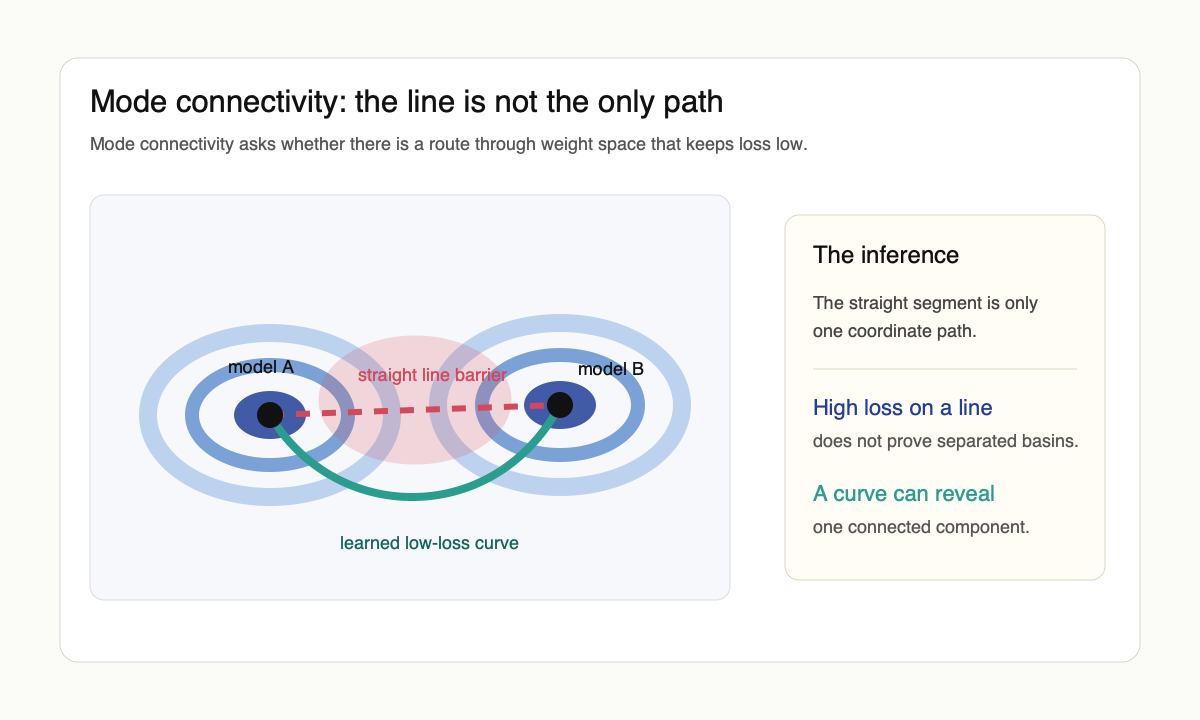

The basic experiment is almost embarrassingly direct. Train two networks $\theta_1$ and $\theta_2$ to low loss. Interpolate $\theta(\alpha) = (1-\alpha)\theta_1 + \alpha\theta_2$ for $\alpha \in [0,1]$. For independently trained networks, the straight line usually passes through a region of high loss: the barrier $B = \max_\alpha L(\theta(\alpha)) - \tfrac{1}{2}(L(\theta_1)+L(\theta_2))$ is large compared with the loss at either endpoint. But if one parameterizes a curve $\gamma: [0,1] \to \Theta$ with $\gamma(0)=\theta_1$, $\gamma(1)=\theta_2$ and minimizes the average loss along it, $\int_0^1 L(\gamma(t))\,dt$, over the curve's free parameters, the barrier can nearly vanish. The endpoints are not separated by an unavoidable mountain; the straight line was simply the wrong path through parameter space.
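The barrier along the straight line can be computed in a few lines. The sketch below is only an illustration: `ring_loss` is a made-up two-parameter loss whose minima form the unit circle, standing in for a real network's training loss, and `linear_barrier` is a hypothetical helper name, not something from the cited papers.

```python
import numpy as np

def ring_loss(theta):
    """Toy loss (an assumption, not a real network): minima form the unit circle."""
    return (np.sum(theta ** 2) - 1.0) ** 2

def linear_barrier(loss_fn, theta_1, theta_2, n_points=101):
    """Barrier B = max_alpha L(theta(alpha)) - (L(theta_1) + L(theta_2)) / 2."""
    alphas = np.linspace(0.0, 1.0, n_points)
    path_losses = np.array(
        [loss_fn((1.0 - a) * theta_1 + a * theta_2) for a in alphas]
    )
    endpoint_mean = 0.5 * (loss_fn(theta_1) + loss_fn(theta_2))
    return path_losses.max() - endpoint_mean, alphas, path_losses

# Two zero-loss solutions on opposite sides of the circle.
theta_1 = np.array([1.0, 0.0])
theta_2 = np.array([-1.0, 0.0])

barrier, alphas, path_losses = linear_barrier(ring_loss, theta_1, theta_2)
print(f"barrier = {barrier:.3f}, worst alpha = {alphas[path_losses.argmax()]:.2f}")
```

On this toy the segment passes through the origin, where the loss is 1, so the barrier is 1 even though both endpoints sit on one connected ring of minima. For a real network, `loss_fn` would evaluate the training or test loss at the interpolated weights.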

Curves first, lines later

The first mode-connectivity papers found nonlinear paths. Garipov et al. used polygonal chains and Bézier curves; Draxler et al. constructed continuous low-loss paths with the Nudged Elastic Band method. Both results weakened the intuition that SGD lands in sharply separated basins. If a low-loss path exists between two solutions, then the relevant connected component of good solutions is much larger than local Hessian pictures suggest.
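To make the curve-finding idea concrete, here is a minimal sketch of a quadratic Bézier path with one trainable bend point, fitted by plain gradient descent on the average loss along the curve. The toy `ring_loss`, the step size, the number of steps, and the off-segment initialization are all assumptions chosen for illustration; the papers above work with real networks and their own training schedules.

```python
import numpy as np

def ring_loss(points):
    """Toy loss (an assumption): minima form the unit circle. Accepts (n, 2) arrays."""
    return (np.sum(points ** 2, axis=-1) - 1.0) ** 2

def ring_grad(points):
    """Gradient of ring_loss with respect to each point."""
    return 4.0 * (np.sum(points ** 2, axis=-1, keepdims=True) - 1.0) * points

def bezier(t, theta_1, bend, theta_2):
    """Quadratic Bezier curve with fixed endpoints and one trainable bend point."""
    t = t[:, None]
    return (1 - t) ** 2 * theta_1 + 2 * t * (1 - t) * bend + t ** 2 * theta_2

theta_1 = np.array([1.0, 0.0])
theta_2 = np.array([-1.0, 0.0])
t = np.linspace(0.0, 1.0, 101)
midpoint = 0.5 * (theta_1 + theta_2)

# Start slightly off the segment: on this symmetric toy the exact midpoint is a
# stationary point, so gradient descent would never leave the straight line.
bend = midpoint + np.array([0.0, 0.1])
for _ in range(2000):
    points = bezier(t, theta_1, bend, theta_2)
    # Chain rule: d gamma(t) / d bend = 2 t (1 - t).
    weights = (2 * t * (1 - t))[:, None]
    bend = bend - 0.1 * np.mean(weights * ring_grad(points), axis=0)

# A bend point at the midpoint reproduces the straight segment exactly.
line_losses = ring_loss(bezier(t, theta_1, midpoint, theta_2))
curve_losses = ring_loss(bezier(t, theta_1, bend, theta_2))
print(f"straight line: mean {line_losses.mean():.3f}, max {line_losses.max():.3f}")
print(f"fitted curve:  mean {curve_losses.mean():.3f}, max {curve_losses.max():.3f}")
```

A single bend point cannot trace the circle exactly, so a small residual loss remains along the fitted curve; chains with more bend points would follow the ring more closely.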

Linear mode connectivity is the stronger property: $L((1-\alpha)\theta_1 + \alpha\theta_2)$ stays low along the entire segment. That usually fails for independently trained networks from unrelated initializations, but it often holds in one of three regimes: the two runs share an early stretch of training, the networks are aligned by permutation first, or both are fine-tuned from the same pretrained initialization. Frankle, Dziugaite, Roy, and Carbin [3] formulated the first regime as a spawning experiment: train one network, fork two copies after $k$ steps with different SGD noise, train each to convergence. For $k$ past a small threshold (early in training on standard image tasks, though later for ImageNet-scale networks than for small CIFAR-10 ones), the two endpoints are linearly connected. They tied this directly to the lottery-ticket story: the linearly connected basin is the basin the matching subnetwork can reach. This made linear mode connectivity a practical diagnostic of whether two runs are in the same effective basin, not just a curiosity about loss surfaces.
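The spawning protocol itself is easy to sketch on a toy problem. Everything below is an assumption made for illustration: a two-parameter loss with a ring of minima, Gaussian gradient noise standing in for minibatch noise, and hypothetical helper names. It reproduces the shape of the experiment, not any result from [3].

```python
import numpy as np

def ring_loss(theta):
    """Toy loss (an assumption): every point on the unit circle is a minimum."""
    return (np.sum(theta ** 2) - 1.0) ** 2

def ring_grad(theta):
    return 4.0 * (np.sum(theta ** 2) - 1.0) * theta

def noisy_sgd(theta, n_steps, rng, lr=0.05, noise=0.1):
    """Gradient descent with Gaussian gradient noise standing in for minibatch noise."""
    theta = theta.copy()
    for _ in range(n_steps):
        theta -= lr * (ring_grad(theta) + noise * rng.standard_normal(theta.shape))
    return theta

def linear_barrier(theta_1, theta_2, n_points=51):
    alphas = np.linspace(0.0, 1.0, n_points)
    losses = [ring_loss((1.0 - a) * theta_1 + a * theta_2) for a in alphas]
    return max(losses) - 0.5 * (ring_loss(theta_1) + ring_loss(theta_2))

def spawn_barrier(k, seed, total_steps=300):
    """Train a shared trunk for k steps, fork two children, return their barrier."""
    trunk = noisy_sgd(np.zeros(2), k, np.random.default_rng(seed))
    # The children share the trunk but see independent noise after the fork.
    child_a = noisy_sgd(trunk, total_steps - k, np.random.default_rng(10_000 + seed))
    child_b = noisy_sgd(trunk, total_steps - k, np.random.default_rng(20_000 + seed))
    return linear_barrier(child_a, child_b)

# Average over seeds, because a single pair can land close together by chance.
mean_barrier = {
    k: float(np.mean([spawn_barrier(k, seed) for seed in range(30)]))
    for k in (0, 5, 20, 100)
}
for k, value in mean_barrier.items():
    print(f"fork after k = {k:3d} steps: mean barrier {value:.4f}")
```

Forking at $k=0$ lets the noise send the two children to unrelated points on the ring, so the segment between them usually cuts through high loss. Forking after the trunk has committed to one side of the ring leaves the children close together, and the barrier is small.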

The permutation turn

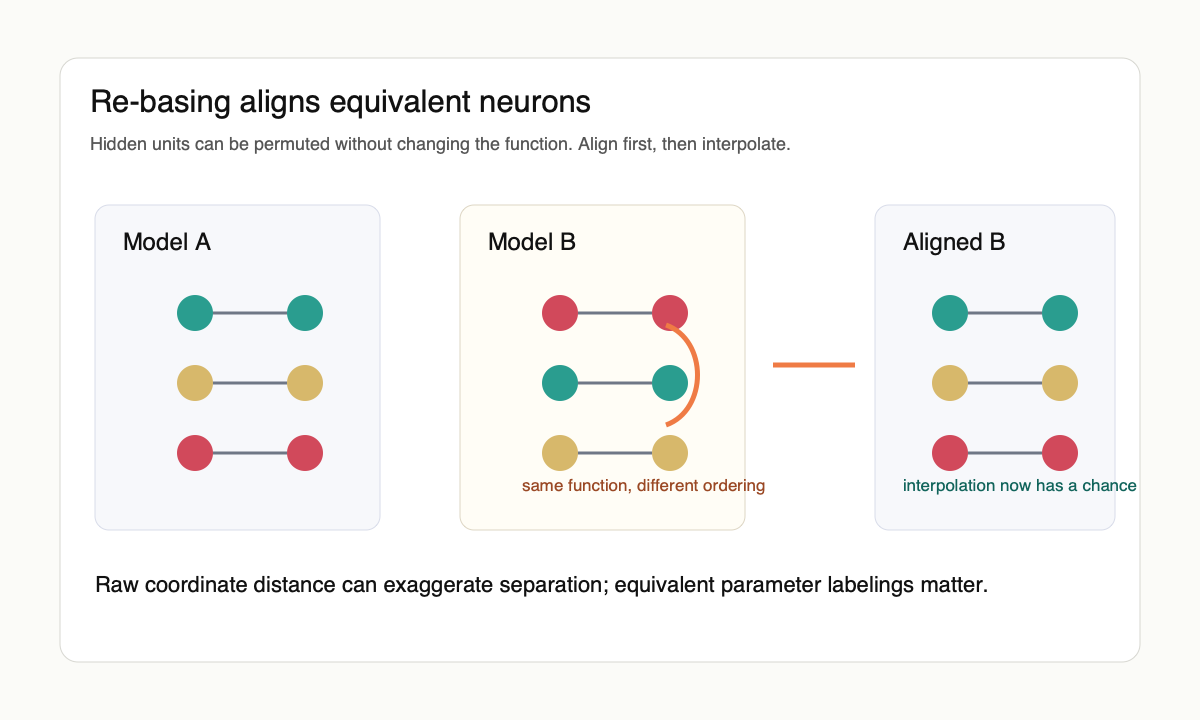

Entezari et al. [4] proposed the cleanest reframing. Neural networks have permutation symmetries: swapping hidden units in a layer and undoing that swap in the next layer can leave the represented function unchanged. Two networks that look far apart in raw parameter coordinates may simply be using different neuron orderings. If one accounts for those symmetries, perhaps many independently trained solutions are linearly connected after all.
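The symmetry is easy to check numerically. The sketch below uses a one-hidden-layer ReLU network with made-up sizes and a fixed, arbitrary permutation; it shows that reordering the hidden units leaves the output unchanged while moving the parameters a long way in raw coordinates.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_hidden, n_out = 3, 5, 2  # illustrative sizes, not from the source

W1 = rng.standard_normal((n_hidden, n_in))
b1 = rng.standard_normal(n_hidden)
W2 = rng.standard_normal((n_out, n_hidden))

def mlp(x, W1, b1, W2):
    """One-hidden-layer ReLU network."""
    return W2 @ np.maximum(W1 @ x + b1, 0.0)

# Reorder the hidden units: permute the rows of W1 and b1, and undo the
# reordering in the next layer by permuting the columns of W2 the same way.
perm = np.array([2, 0, 4, 1, 3])
W1_p, b1_p, W2_p = W1[perm], b1[perm], W2[:, perm]

x = rng.standard_normal(n_in)
same_function = np.allclose(mlp(x, W1, b1, W2), mlp(x, W1_p, b1_p, W2_p))
raw_distance = np.linalg.norm(W1 - W1_p)

print("same output:", same_function)
print(f"distance between first-layer weights: {raw_distance:.3f}")
```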

Git Re-Basin [5] turned that idea into algorithms. Given two trained networks $\theta_1, \theta_2$ with hidden widths $\{n_\ell\}$, it searches over permutations $P_\ell \in S_{n_\ell}$, one per hidden layer, for an alignment that minimizes $\|\theta_1 - P \cdot \theta_2\|$ in an appropriate weight or activation metric, then checks whether the aligned models can be merged in weight space. Singh and Jaggi [7] had earlier given an optimal-transport version of the same construction: a soft assignment between neurons that recovers permutation matching as a special case and lets one fuse models with mismatched widths. The result is not a theorem that all networks occupy one basin, but it is strong evidence that raw parameter interpolation exaggerates separation. Some of the apparent barrier is coordinate mismatch.
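For a single hidden layer, the weight-space version of this alignment reduces to one linear assignment problem. The sketch below is a simplified illustration under stated assumptions, not the Git Re-Basin reference implementation: model B is constructed as a shuffled, slightly perturbed copy of model A so that the correct answer is known, and the solver is SciPy's `linear_sum_assignment`.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment

rng = np.random.default_rng(0)
n_in, n_hidden, n_out = 4, 6, 3  # illustrative sizes

# Model A: one hidden layer. Model B: the same units in a different order plus
# small noise, so the correct alignment is known in advance.
W1_a = rng.standard_normal((n_hidden, n_in))
b1_a = rng.standard_normal(n_hidden)
W2_a = rng.standard_normal((n_out, n_hidden))
shuffle = np.array([3, 0, 5, 1, 4, 2])
W1_b = W1_a[shuffle] + 0.01 * rng.standard_normal((n_hidden, n_in))
b1_b = b1_a[shuffle] + 0.01 * rng.standard_normal(n_hidden)
W2_b = W2_a[:, shuffle] + 0.01 * rng.standard_normal((n_out, n_hidden))

# similarity[i, j]: inner product of all weights attached to unit i of A and
# unit j of B (incoming weights, bias, outgoing weights).
similarity = W1_a @ W1_b.T + np.outer(b1_a, b1_b) + W2_a.T @ W2_b

# Permuting B does not change its norm, so maximizing total similarity is
# equivalent to minimizing the squared distance between A and permuted B.
_, match = linear_sum_assignment(similarity, maximize=True)

def distance_to_a(W1, b1, W2):
    return np.sqrt(
        np.sum((W1_a - W1) ** 2) + np.sum((b1_a - b1) ** 2) + np.sum((W2_a - W2) ** 2)
    )

dist_raw = distance_to_a(W1_b, b1_b, W2_b)
dist_aligned = distance_to_a(W1_b[match], b1_b[match], W2_b[:, match])
print(f"distance before alignment: {dist_raw:.3f}, after: {dist_aligned:.3f}")
```

With more than one hidden layer the permutations of adjacent layers are coupled through the shared weight matrix, so a single assignment solve no longer suffices and the layers have to be handled jointly or in turn.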

Tatro et al. [8] verified empirically that aligning networks before constructing connecting curves makes the curves much shorter and the loss along them much lower, which is the right kind of consistency check: the symmetry-corrected geometry is simpler than the raw geometry. Benton et al. [9] pushed past curves to higher-dimensional simplexes of solutions, finding low-loss volumes that contain many independently trained checkpoints once symmetries are handled.

The practical descendant is model merging. Stochastic weight averaging [10] keeps a running mean of late-training checkpoints; it works because the trajectory stays in a connected low-loss region. Model soups [11] average independently fine-tuned models from a shared pretrained initialization; that shared initialization is what places the fine-tunes in the same connected component. Git Re-Basin applies permutation alignment so that merging can work even when initializations are not shared. The pattern is consistent: weight space is less like a vault and more like a workspace, but only after symmetries and shared training history are accounted for.
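The arithmetic behind both averaging schemes is small enough to show directly. The sketch below uses random vectors and constant arrays as stand-ins for checkpoints and fine-tuned models, and the helper names are hypothetical. It covers only the averaging step, not the training schedules or the batch-norm statistics that a real pipeline would also need to handle.

```python
import numpy as np

def running_mean_update(mean, n_averaged, checkpoint):
    """Fold one more checkpoint into the running mean without storing earlier ones."""
    n_averaged += 1
    return mean + (checkpoint - mean) / n_averaged, n_averaged

def uniform_soup(models):
    """Average a list of parameter dicts key by key (same keys and shapes assumed)."""
    return {name: np.mean([m[name] for m in models], axis=0) for name in models[0]}

# SWA-style running mean over a stream of stand-in checkpoints.
rng = np.random.default_rng(0)
checkpoints = [rng.standard_normal(4) for _ in range(5)]
swa_mean, count = np.zeros(4), 0
for checkpoint in checkpoints:
    swa_mean, count = running_mean_update(swa_mean, count, checkpoint)

# Soup-style uniform average over stand-in fine-tuned models.
fine_tunes = [{"w": np.full((2, 2), v), "b": np.full(2, v)} for v in (1.0, 2.0, 3.0)]
soup = uniform_soup(fine_tunes)

print("running mean matches batch mean:",
      np.allclose(swa_mean, np.mean(checkpoints, axis=0)))
print("soup weight entries:", soup["w"][0])
```

Averaging like this helps only when the averaged point itself has low loss, which is exactly the linear-connectivity condition discussed above.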

What this explains

Mode connectivity explains why averaging, ensembling, and model merging sometimes work better than the naive nonconvex picture predicts. If good models lie in a broad connected region, then moving between them need not destroy the function. This is also why stochastic weight averaging and model soups are less mysterious than they first appear. They rely on the existence of useful low-loss regions between trained checkpoints or fine-tuned models.

It also changes how one should think about local minima. A near-zero Hessian eigenvalue is not necessarily measurement noise. It may reflect a real path along which the network can change its parameters without changing its function much. In an overparameterized model, many parameter directions are functionally redundant, and some are redundant only after a nontrivial rearrangement of units.

What it does not explain

Connectivity is not generalization. A connected set of low-training-loss solutions can still contain bad predictors. The mode-connectivity result tells us about the geometry of solutions the optimizer reaches; it does not by itself say why those solutions match the test distribution. It also does not say that every architecture, dataset, or training recipe has one basin. The strongest single-basin claims still have counterexamples, and the details matter.

The best interpretation is therefore geometric humility. The loss landscape is nonconvex, but not in the cartoon way. Barriers can be artifacts of coordinates. Minima can be connected by paths that a two-dimensional plot would miss. The optimizer may not be searching for a needle in a field of isolated valleys. It may be entering a connected high-dimensional corridor that looks fragmented only when projected badly.

The strong "one wide basin" claim is rhetorically attractive but architecturally fragile, and I think it overreaches the evidence. What survives across architectures is the weaker claim that the solutions reachable from a fixed initialization (or by independent runs after alignment) form a connected low-loss region of non-negligible volume once the relevant permutation symmetries are factored out. That suffices to explain ensembling, soups, and SWA without committing to a single global basin. The Off-Convex overview of mode connectivity and the Git Re-Basin repository are the most useful entry points if the goal is intuition first and theorems second.

References

- [1] T. Garipov, P. Izmailov, D. Podoprikhin, D. Vetrov, and A. G. Wilson. Loss surfaces, mode connectivity, and fast ensembling of DNNs. arxiv 1802.10026, 2018.

- [2] F. Draxler, K. Veschgini, M. Salmhofer, and F. A. Hamprecht. Essentially no barriers in neural network energy landscape. arxiv 1803.00885, 2018.

- [3] J. Frankle, G. K. Dziugaite, D. M. Roy, and M. Carbin. Linear mode connectivity and the lottery ticket hypothesis. arxiv 1912.05671, 2019.

- [4] R. Entezari, H. Sedghi, O. Saukh, and B. Neyshabur. The role of permutation invariance in linear mode connectivity of neural networks. arxiv 2110.06296, 2021.

- [5] S. K. Ainsworth, J. Hayase, and S. Srinivasa. Git Re-Basin: merging models modulo permutation symmetries. arxiv 2209.04836, 2022.

- [6] C. D. Freeman and J. Bruna. Topology and geometry of half-rectified network optimization. arxiv 1611.01540, 2016.

- [7] S. P. Singh and M. Jaggi. Model fusion via optimal transport. arxiv 1910.05653, 2019.

- [8] N. Tatro, P.-Y. Chen, P. Das, I. Melnyk, P. Sattigeri, and R. Lai. Optimizing mode connectivity via neuron alignment. arxiv 2009.02439, 2020.

- [9] G. Benton, W. J. Maddox, S. Lotfi, and A. G. Wilson. Loss surface simplexes for mode connecting volumes and fast ensembling. arxiv 2102.13042, 2021.

- [10] P. Izmailov, D. Podoprikhin, T. Garipov, D. Vetrov, and A. G. Wilson. Averaging weights leads to wider optima and better generalization. arxiv 1803.05407, 2018.

- [11] M. Wortsman et al. Model soups: averaging weights of multiple fine-tuned models improves accuracy without increasing inference time. arxiv 2203.05482, 2022.