The neural tangent kernel and the cost of freezing features

Key terms used in this post

- neural tangent kernel: the kernel $K_\theta(x, x') = \nabla_\theta f_\theta(x)^\top \nabla_\theta f_\theta(x')$ describing first-order changes in the network function.

- lazy training: a regime where parameters move so little that the network behaves like its linearization around initialization.

- feature learning: the regime where internal representations change enough during training to alter the effective predictor.

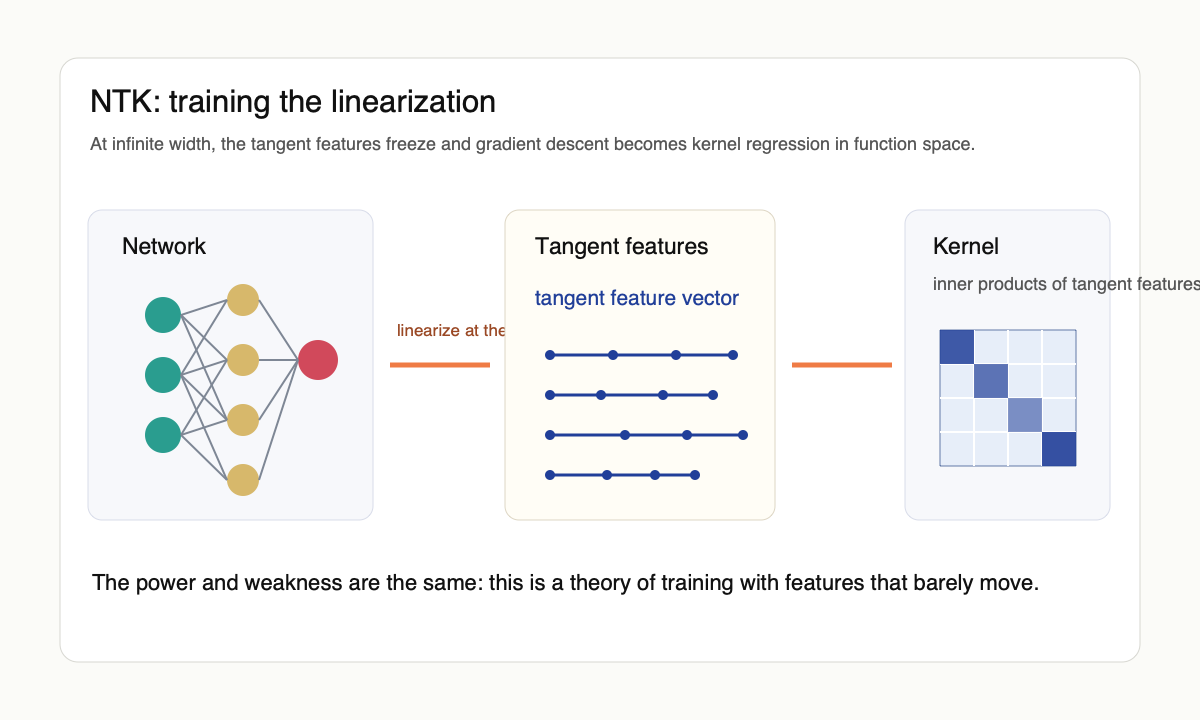

The neural tangent kernel was one of the rare deep-learning theory ideas that became useful almost immediately. It did not solve generalization, but it made a stubborn object analyzable. Jacot, Gabriel, and Hongler [1] observed that if a network is taken to infinite width under the right scaling, gradient descent on parameters becomes kernel gradient descent in function space.

The kernel is not chosen by hand. It is induced by the network at initialization. For a model $f_\theta$, the tangent kernel is

$$K_\theta(x, x') = \nabla_\theta f_\theta(x)^\top \nabla_\theta f_\theta(x').$$
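For a concrete picture of the definition, here is a minimal NumPy sketch that builds $K_\theta$ for a small two-layer ReLU network $f(x) = a^\top \mathrm{relu}(Wx)/\sqrt{m}$. It stacks per-example gradients into a Jacobian $J$ and forms $JJ^\top$. The architecture, the $1/\sqrt{m}$ scaling, and all helper names (`init_params`, `jacobian`, `empirical_ntk`, `analytic_ntk`) are illustrative assumptions rather than anything taken from [1]. `analytic_ntk` writes the same sum out by hand as a cross-check.

```python
import numpy as np

def init_params(d, m, rng):
    # Hypothetical two-layer ReLU net f(x) = a . relu(W x) / sqrt(m), NTK-style scaling.
    return rng.standard_normal((m, d)), rng.standard_normal(m)

def jacobian(params, X):
    """Row i is the flattened gradient of f(x_i) with respect to theta = (vec(W), a)."""
    W, a = params
    m = W.shape[0]
    pre = X @ W.T                                   # (N, m) preactivations w_j . x_i
    act = np.maximum(pre, 0.0)                      # relu(w_j . x_i)
    gate = (pre > 0).astype(float)                  # relu'(w_j . x_i)
    dW = (gate * a)[:, :, None] * X[:, None, :] / np.sqrt(m)   # (N, m, d)
    da = act / np.sqrt(m)                                       # (N, m)
    return np.concatenate([dW.reshape(X.shape[0], -1), da], axis=1)

def empirical_ntk(params, X1, X2):
    # K(x, x') = grad f(x)^T grad f(x'), evaluated for all pairs at once.
    return jacobian(params, X1) @ jacobian(params, X2).T

def analytic_ntk(params, X1, X2):
    # Same kernel written out for this architecture:
    # (1/m) sum_j [relu(w_j.x) relu(w_j.x') + a_j^2 1[w_j.x > 0] 1[w_j.x' > 0] x.x']
    W, a = params
    m = W.shape[0]
    p1, p2 = X1 @ W.T, X2 @ W.T
    feat = np.maximum(p1, 0.0) @ np.maximum(p2, 0.0).T
    gates = ((p1 > 0) * a**2) @ (p2 > 0).astype(float).T
    return (feat + gates * (X1 @ X2.T)) / m

rng = np.random.default_rng(0)
X = rng.standard_normal((8, 3))
X /= np.linalg.norm(X, axis=1, keepdims=True)       # unit-norm inputs
params = init_params(d=3, m=512, rng=rng)
K = empirical_ntk(params, X, X)
print(np.allclose(K, analytic_ntk(params, X, X)))   # True
print(np.linalg.eigvalsh(K).min() >= -1e-10)        # True: K is positive semidefinite
```

Forming $J$ explicitly is only practical for tiny models; it is used here because it mirrors the definition term by term.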

In finite networks this kernel changes during training. In the infinite-width limit under the NTK parameterization, it converges to a deterministic kernel $K_\infty$, and its deviation $\|K_{\theta_t} - K_\infty\|$ during training is $O(1/\sqrt{n})$ in the width $n$. Write $f_t$ and $y$ for the vectors of network outputs and targets on the training inputs. For squared loss, with the learning rate absorbed into $t$, gradient flow then satisfies $\dot{f}_t = -K_\infty (f_t - y)$, which integrates to $f_t = y + e^{-K_\infty t}(f_0 - y)$. The parameter-space loss can stay nonconvex; the function-space dynamics are linear and governed by a positive semidefinite kernel.
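The closed form can be sanity-checked numerically. This sketch makes several assumptions. It uses a random symmetric positive semidefinite matrix as a stand-in for $K_\infty$ on six training points. It computes $e^{-K_\infty t}$ from the eigendecomposition $K_\infty = V \operatorname{diag}(\lambda) V^\top$. It then compares the result with forward-Euler integration of $\dot f_t = -K_\infty(f_t - y)$. The helper names are hypothetical.

```python
import numpy as np

def closed_form(K, f0, y, t):
    """f_t = y + exp(-K t) (f0 - y), using K = V diag(lam) V^T for symmetric PSD K."""
    lam, V = np.linalg.eigh(K)
    return y + V @ (np.exp(-lam * t) * (V.T @ (f0 - y)))

def euler(K, f0, y, t, steps=20_000):
    """Forward-Euler integration of df/dt = -K (f - y) from f(0) = f0."""
    f = f0.copy()
    dt = t / steps
    for _ in range(steps):
        f = f - dt * (K @ (f - y))
    return f

rng = np.random.default_rng(0)
A = rng.standard_normal((6, 6))
K = A @ A.T / 6                     # hypothetical stand-in for K_infinity (symmetric PSD)
f0 = rng.standard_normal(6)         # network outputs at initialization
y = rng.standard_normal(6)          # training targets

f_exact = closed_form(K, f0, y, t=2.0)
f_euler = euler(K, f0, y, t=2.0)
print(np.max(np.abs(f_exact - f_euler)))   # small; the Euler error shrinks as steps grows
```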

What the NTK explained

The first thing the NTK explained was why very wide networks can optimize so easily. If the kernel is well conditioned on the training data, gradient descent has a direct route to interpolation. The complicated nonconvex path becomes, at leading order, kernel regression with a specific architecture-induced kernel. Lee et al. [2] pushed this picture further by showing that wide networks of any depth evolve like their first-order Taylor expansion around initialization. Du et al. [4] used closely related machinery to show that the Gram matrix of the network's gradients stays close to its initial value. From this they proved that gradient descent drives overparameterized two-layer ReLU networks to zero training loss at a linear rate. The width only needs to be polynomial in the number of training samples and in the inverse least eigenvalue of the limiting Gram matrix. That is a global-convergence guarantee the nonconvex problem had not previously enjoyed.
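The linearized picture also says what the frozen-feature model predicts away from the training set:

$$f_t(x_*) = f_0(x_*) + K_\infty(x_*, X)\,K_\infty^{-1}\big(I - e^{-K_\infty t}\big)(y - f_0(X)).$$

On the training inputs this reduces to $y + e^{-K_\infty t}(f_0 - y)$. The sketch below is minimal and rests on several assumptions:

- a two-layer ReLU network with both layers trained and Gaussian initialization;
- a strictly positive definite $K_\infty$;
- the learning rate absorbed into $t$, with $f_0$ set to zero as a simplification.

`relu_ntk` uses the standard arc-cosine closed forms for that architecture's infinite-width kernel. The function names and the toy data are hypothetical.

```python
import numpy as np

def relu_ntk(X1, X2):
    """Infinite-width NTK of f(x) = a . relu(W x) / sqrt(m) with W, a i.i.d. N(0, 1) and both layers trained."""
    n1 = np.linalg.norm(X1, axis=1)[:, None]
    n2 = np.linalg.norm(X2, axis=1)[None, :]
    dot = X1 @ X2.T
    cos = np.clip(dot / (n1 * n2), -1.0, 1.0)
    theta = np.arccos(cos)
    # Readout-gradient term E[relu(w.x) relu(w.x')] (degree-1 arc-cosine kernel).
    k_readout = n1 * n2 * (np.sin(theta) + (np.pi - theta) * cos) / (2 * np.pi)
    # First-layer-gradient term E[a^2] E[1[w.x > 0] 1[w.x' > 0]] x.x' (degree-0 arc-cosine kernel).
    k_first = (np.pi - theta) / (2 * np.pi) * dot
    return k_readout + k_first

def linearized_predict(X_train, y, f0_train, X_test, f0_test, t):
    """Frozen-kernel prediction after gradient-flow time t on squared loss (learning rate absorbed into t)."""
    lam, V = np.linalg.eigh(relu_ntk(X_train, X_train))
    # K^{-1} (I - e^{-K t}) (y - f0), mode by mode; assumes K is strictly positive definite.
    coef = V @ ((1.0 - np.exp(-lam * t)) / lam * (V.T @ (y - f0_train)))
    return f0_test + relu_ntk(X_test, X_train) @ coef

rng = np.random.default_rng(0)
X_train = rng.standard_normal((10, 10))
X_train /= np.linalg.norm(X_train, axis=1, keepdims=True)
X_test = rng.standard_normal((4, 10))
X_test /= np.linalg.norm(X_test, axis=1, keepdims=True)
y = np.sin(3 * X_train[:, 0])                    # hypothetical targets
f0_train, f0_test = np.zeros(10), np.zeros(4)    # simplification: zero output at initialization

for t in [0.0, 1.0, 10.0, 1e6]:
    fit = linearized_predict(X_train, y, f0_train, X_train, f0_train, t)
    test_pred = linearized_predict(X_train, y, f0_train, X_test, f0_test, t)
    print(f"t={t:g}: max train error {np.abs(fit - y).max():.2e}, test predictions {np.round(test_pred, 3)}")
```

As $t$ grows the training error goes to zero, and the test predictions converge to plain kernel regression with $K_\infty$. Stopping at a finite $t$ acts as the implicit regularizer.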

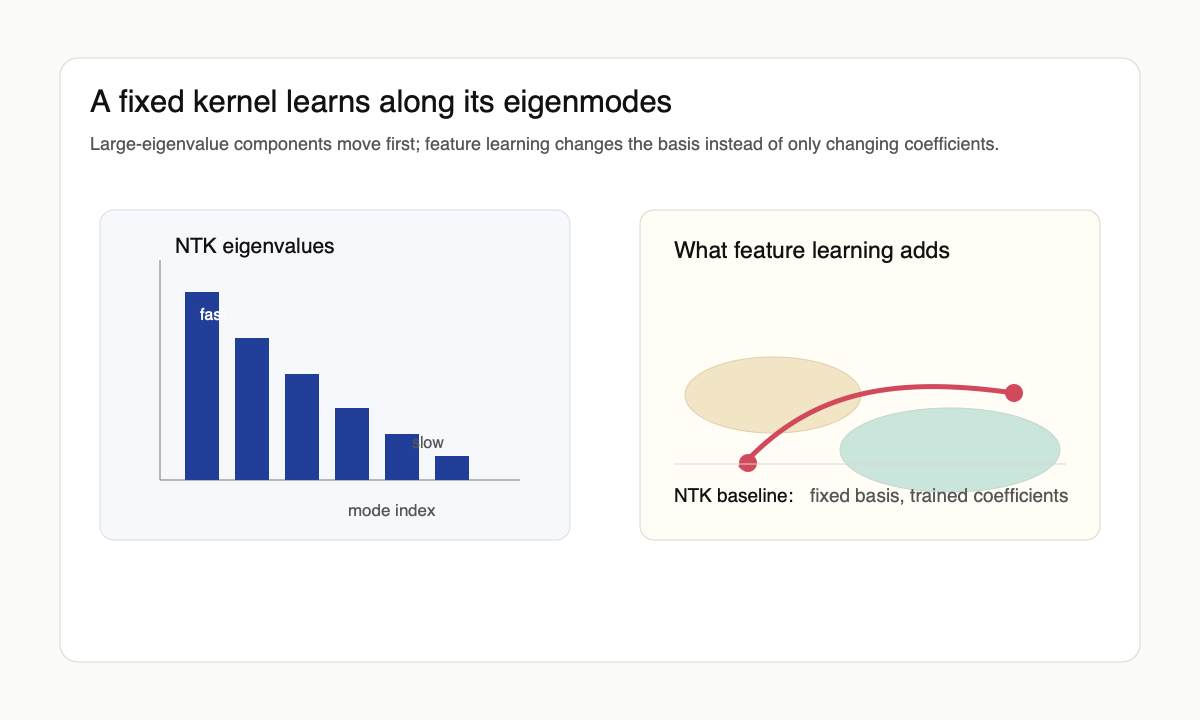

The spectrum of $K_\infty$ controls more than convergence speed. The eigendecomposition $K_\infty = \sum_k \lambda_k \phi_k \phi_k^\top$ implies that the residual along eigenmode $\phi_k$ shrinks like $e^{-\lambda_k t}$. Large-eigenvalue modes are learned quickly; small-eigenvalue modes are learned slowly or, with early stopping, not at all. Early stopping, frequency principle observations, and spectral biases of MLPs all become statements about $\{\lambda_k\}$. Arora et al. [5] wrote down an exact algorithm for computing $K_\infty$ for fully connected and convolutional networks of arbitrary depth, which made these spectral predictions empirically testable on real datasets.
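Here is a short sketch of the mode-by-mode picture. In place of a real $K_\infty$ it assumes a synthetic symmetric kernel whose eigenvalues span four orders of magnitude. The coefficient of the residual $f_t - y$ along $\phi_k$ is its initial value times $e^{-\lambda_k t}$. Stopping at $t = 5$ therefore removes essentially all of the top modes while leaving the bottom ones almost untouched. The helper `mode_residuals` is a hypothetical name.

```python
import numpy as np

def mode_residuals(K, r0, t):
    """Coefficients of the residual along each eigenvector of K, at time 0 and at time t."""
    lam, V = np.linalg.eigh(K)          # eigenvalues in ascending order
    c0 = V.T @ r0                       # coefficients of f_0 - y along each phi_k
    return lam, c0, np.exp(-lam * t) * c0

rng = np.random.default_rng(1)
# Hypothetical kernel with a spread-out spectrum: eigenvalues from 1e-3 to 1e1.
Q, _ = np.linalg.qr(rng.standard_normal((8, 8)))
lam_true = np.logspace(-3, 1, 8)
K = Q @ np.diag(lam_true) @ Q.T
r0 = rng.standard_normal(8)             # initial residual f_0 - y

lam, c0, ct = mode_residuals(K, r0, t=5.0)
for l, a, b in zip(lam, c0, ct):
    print(f"lambda={l:8.4f}  remaining fraction={b / a:.3e}")
```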

The lazy-training caveat

The problem is that the same condition that makes the theory clean also removes one of the main things deep networks seem to do. In the NTK limit, features do not really move. Concretely, the relative parameter movement $\|\theta_t - \theta_0\| / \|\theta_0\|$ is $O(1/\sqrt{n})$ in width $n$, while the function changes by $O(1)$. The network changes its output by adjusting an almost fixed collection of random features. Chizat, Oyallon, and Bach [3] named this the lazy-training regime and emphasized that it is a scaling phenomenon, not a universal description of neural networks.
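The width dependence shows up even in a toy run. This minimal sketch assumes three things:

- a two-layer ReLU network $f(x) = a^\top \mathrm{relu}(Wx)/\sqrt{m}$ (NTK-style scaling);
- a made-up regression target on 20 unit-norm inputs;
- plain full-batch gradient descent at a fixed step size.

It reports two quantities. The first is the relative first-layer movement $\|W_t - W_0\|_F / \|W_0\|_F$, which should shrink roughly like $1/\sqrt{m}$ as the width $m$ grows. The second is the training loss, which drops at every width. The function name and hyperparameters are illustrative, not taken from [3].

```python
import numpy as np

def train_ntk_param(m, X, y, lr=0.5, steps=300, seed=0):
    """Full-batch GD on f(x) = a . relu(W x) / sqrt(m) with loss (1/2N) sum_i (f(x_i) - y_i)^2.

    Returns (relative first-layer movement, initial loss, final loss).
    """
    rng = np.random.default_rng(seed)
    N = X.shape[0]
    W = rng.standard_normal((m, X.shape[1]))
    a = rng.standard_normal(m)
    W0 = W.copy()

    def loss_and_grads(W, a):
        pre = X @ W.T                                   # (N, m) preactivations
        act = np.maximum(pre, 0.0)
        r = act @ a / np.sqrt(m) - y                    # residual f(X) - y
        grad_a = act.T @ r / (N * np.sqrt(m))
        grad_W = (((pre > 0) * r[:, None]) * a).T @ X / (N * np.sqrt(m))
        return 0.5 * np.mean(r**2), grad_W, grad_a

    loss0, _, _ = loss_and_grads(W, a)
    for _ in range(steps):
        _, gW, ga = loss_and_grads(W, a)
        W, a = W - lr * gW, a - lr * ga
    loss_T, _, _ = loss_and_grads(W, a)
    return np.linalg.norm(W - W0) / np.linalg.norm(W0), loss0, loss_T

rng = np.random.default_rng(42)
X = rng.standard_normal((20, 5))
X /= np.linalg.norm(X, axis=1, keepdims=True)
y = np.sin(2 * X @ rng.standard_normal(5))       # hypothetical regression target

results = {m: train_ntk_param(m, X, y) for m in [64, 256, 1024, 4096]}
for m, (rel, l0, lT) in results.items():
    print(f"width {m:5d}: ||W_t - W_0|| / ||W_0|| = {rel:.4f}, loss {l0:.3f} -> {lT:.3f}")
```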

That caveat matters. A convolutional network trained in the lazy regime can optimize while failing to learn the representations that make convolutional networks useful. A transformer that behaves like a fixed random feature model is not the thing people mean when they talk about in-context learning, induction heads, or abstraction. The NTK gives a rigorous theory of one limit. The question is whether that limit keeps the right phenomena.

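Geiger et al. [7] mapped the crossover empirically. They scaled the last-layer weights at initialization as $\alpha/\sqrt{n}$ and varied the output scale $\alpha$ together with the width $n$. At the standard scale and large width, networks sit in the lazy regime, where the tangent kernel barely moves after initialization. At small enough $\alpha$ (the mean-field scaling is a limiting case), the same architecture enters a regime where the kernel evolves and features are learned, with the crossover at $\alpha^* \propto 1/\sqrt{n}$. Which regime generalized better depended on architecture and data. In their tests, convolutional networks did better with feature learning, while fully connected networks generally did better in the lazy regime. Where the transition sits is controlled by initialization scale and width, not by anything intrinsic to the architecture, which is a slightly disappointing answer if we wanted neural networks to be feature learners by default.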

Why it still matters

The right conclusion is not that the NTK is wrong. The right conclusion is that it is a baseline. If a phenomenon appears in the NTK limit, then it may be explained by width, interpolation, and fixed features. If it disappears in the NTK limit, then some form of representation learning, finite-width fluctuation, architecture-specific structure, or optimization nonlinearity is doing the work.

This makes the NTK a useful negative control for theory. Claims about deep learning often smuggle in feature learning without saying so. The NTK asks: would the claim still be true if the features froze? For some optimization claims, yes. For many generalization and capability claims, no.

For a less theorem-shaped way into the contrast, the Distill circuits thread is a useful reminder of what feature learning looks like when someone actually opens the model up. Greg Yang's $\mu$P writeup is the right operational entry into Tensor Programs, and the microsoft/mup repository is what to use if the goal is hyperparameter transfer rather than theory. The Fort discussion of kernel learning versus feature learning on X is the social-media version of the same pressure: kernel learning is a baseline, not the whole story.

Feature learning is the missing term

The frontier after the NTK was to build limits that allow features to move. Mean-field limits [8] treat each hidden unit of a two-layer network as a particle and study gradient flow on the distribution of those particles; in this scaling, features evolve and the kernel is not constant. Tensor-program analyses [9] classify the parameterizations that yield well-defined infinite-width limits; the maximal-update parameterization $\mu$P is the one that keeps both feature learning and stable optimization in the limit. Follow-up work by Yang, Hu, and collaborators (Tensor Programs V) turns this analysis into practical hyperparameter-transfer rules: tune hyperparameters such as the learning rate at small width, then reuse them at large width.
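The difference between the two scalings is visible at small scale too. This sketch uses the same toy two-layer ReLU setup in both cases and changes only the output scaling and the step size:

- NTK-style: output scaled by $1/\sqrt{m}$, with a fixed step.
- Mean-field-style, in the spirit of [8]: output scaled by $1/m$, with the step multiplied by $m$ so that each unit's update stays $O(1)$.

The relative first-layer movement should collapse with width under the first scaling and stay roughly width-independent under the second. Names, data, and hyperparameters are hypothetical.

```python
import numpy as np

def relative_feature_movement(m, X, y, parameterization, lr=0.5, steps=300, seed=0):
    """||W_t - W_0||_F / ||W_0||_F after full-batch GD on a two-layer ReLU net.

    "ntk":        f(x) = a . relu(W x) / sqrt(m), step size lr
    "mean_field": f(x) = a . relu(W x) / m,       step size lr * m (per-unit step stays O(1))
    """
    if parameterization == "ntk":
        scale, step = 1.0 / np.sqrt(m), lr
    elif parameterization == "mean_field":
        scale, step = 1.0 / m, lr * m
    else:
        raise ValueError(parameterization)
    rng = np.random.default_rng(seed)
    N = X.shape[0]
    W = rng.standard_normal((m, X.shape[1]))
    a = rng.standard_normal(m)
    W0 = W.copy()
    for _ in range(steps):
        pre = X @ W.T
        act = np.maximum(pre, 0.0)
        r = scale * (act @ a) - y                                   # residual f(X) - y
        grad_a = scale * (act.T @ r) / N                            # d/da of (1/2N) sum r^2
        grad_W = scale * ((((pre > 0) * r[:, None]) * a).T @ X) / N # d/dW of the same loss
        W, a = W - step * grad_W, a - step * grad_a
    return np.linalg.norm(W - W0) / np.linalg.norm(W0)

rng = np.random.default_rng(42)
X = rng.standard_normal((20, 5))
X /= np.linalg.norm(X, axis=1, keepdims=True)
y = np.sin(2 * X @ rng.standard_normal(5))     # hypothetical regression target

for m in [256, 4096]:
    ntk = relative_feature_movement(m, X, y, "ntk")
    mf = relative_feature_movement(m, X, y, "mean_field")
    print(f"width {m:5d}: NTK {ntk:.4f}   mean-field {mf:.4f}")
```

This contrasts only the two classic scalings; it is not an implementation of $\mu$P, whose per-layer rules are spelled out in [9].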

It is helpful to say this without mysticism. Feature learning means the tangent kernel changes in a useful way: $K_{\theta_t} - K_{\theta_0}$ is not a small perturbation but a structured rotation of the tangent feature basis toward the data. The gradients with respect to parameters at the end of training are not the gradients at initialization. The model has changed the basis in which it solves the task. That change is exactly what the pure NTK limit suppresses.
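One way to put numbers on "the kernel changed in a useful way" is to track two quantities:

- the relative kernel change $\|K_{\theta_t} - K_{\theta_0}\|_F / \|K_{\theta_0}\|_F$, which stays near zero in the lazy regime;
- the classical kernel-target alignment $\langle K, yy^\top\rangle_F / (\|K\|_F\,\|yy^\top\|_F)$, which rises when the kernel grows along the target.

These are swapped-in diagnostics, not definitions from this post's sources. The toy matrices below are hypothetical.

```python
import numpy as np

def relative_kernel_change(K0, Kt):
    """||K_t - K_0||_F / ||K_0||_F; stays near zero when training is lazy."""
    return np.linalg.norm(Kt - K0) / np.linalg.norm(K0)

def kernel_target_alignment(K, y):
    """<K, y y^T>_F / (||K||_F ||y y^T||_F): cosine between the kernel and the target outer product."""
    Y = np.outer(y, y)
    return np.sum(K * Y) / (np.linalg.norm(K) * np.linalg.norm(Y))

rng = np.random.default_rng(0)
y = np.sign(rng.standard_normal(10))      # hypothetical +/-1 labels
K0 = np.eye(10)                           # hypothetical kernel with no preference for the labels
Kt = K0 + 0.5 * np.outer(y, y)            # hypothetical later kernel that grew along the target direction

print(relative_kernel_change(K0, Kt))     # about 1.58 (= 0.5 * sqrt(10))
print(kernel_target_alignment(K0, y))     # about 0.32 (= 1 / sqrt(10))
print(kernel_target_alignment(Kt, y))     # about 0.89
```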

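Fort et al. [10] gave one of the cleanest empirical comparisons. Early in training there is a rapid, chaotic transient lasting a few epochs. During it the tangent kernel changes quickly and learns features from the data, so the network departs from its initial-kernel linearization. After that transient the kernel changes much more slowly, and a linearization taken from that later point comes close to matching full network training. The gap between the initial kernel and the learned one is the feature-learning contribution. The story is not that deep learning is all kernel learning, nor that none of it is; it is that the kernel piece is real, and the part that is not kernel is what makes a network deep.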

What survived

The NTK survives as a theory of optimization and as a diagnostic for what a proposed explanation uses. It does not survive as a complete theory of deep learning. The infinite-width limit is too stable, too linear, and too close to a kernel method to explain the most interesting representation-learning phenomena by itself.

But that is not a small contribution. A good theory does not have to explain everything. Sometimes its value is in drawing the boundary of what can be explained without feature learning. The NTK drew that boundary sharply enough that the rest of the field had to decide which side of it their explanations lived on.

My personal use of the NTK is as a falsifier rather than a model. If a proposed mechanism for a deep-learning phenomenon already shows up in the NTK regime, then "the network is wide and the features are random" suffices and the explanation has not earned the depth of its hypothesis. The interesting predictions are the ones that disagree with the kernel: they are where width, lazy initialization, and architecture-induced spectra are not enough, and where representation change must be doing the work. That includes most of in-context learning, induction-head formation, and the parts of scaling laws that depend on where compute is spent rather than how much there is.

References

- [1] A. Jacot, F. Gabriel, and C. Hongler. Neural tangent kernel: convergence and generalization in neural networks. arXiv:1806.07572, 2018.

- [2] J. Lee, L. Xiao, S. S. Schoenholz, Y. Bahri, R. Novak, J. Sohl-Dickstein, and J. Pennington. Wide neural networks of any depth evolve as linear models under gradient descent. arXiv:1902.06720, 2019.

- [3] L. Chizat, E. Oyallon, and F. Bach. On lazy training in differentiable programming. arXiv:1812.07956, 2018.

- [4] S. S. Du, X. Zhai, B. Póczos, and A. Singh. Gradient descent provably optimizes over-parameterized neural networks. arXiv:1810.02054, 2018.

- [5] S. Arora, S. S. Du, W. Hu, Z. Li, R. Salakhutdinov, and R. Wang. On exact computation with an infinitely wide neural net. arXiv:1904.11955, 2019.

- [6] M. Belkin, D. Hsu, S. Ma, and S. Mandal. Reconciling modern machine learning practice and the bias-variance trade-off. arXiv:1812.11118, 2018.

- [7] M. Geiger, S. Spigler, A. Jacot, and M. Wyart. Disentangling feature and lazy training in deep neural networks. arXiv:1906.08034, 2019.

- [8] S. Mei, A. Montanari, and P.-M. Nguyen. A mean field view of the landscape of two-layer neural networks. arXiv:1804.06561, 2018.

- [9] G. Yang and E. J. Hu. Tensor Programs IV: feature learning in infinite-width neural networks. arXiv:2011.14522, 2020.

- [10] S. Fort, G. K. Dziugaite, M. Paul, S. Kharaghani, D. M. Roy, and S. Ganguli. Deep learning versus kernel learning: an empirical study of loss landscape geometry and the time evolution of the neural tangent kernel. arXiv:2010.15110, 2020.